NVIDIA says Blackwell can cut cost and energy consumption by up to 25 times compared with its predecessor.

“For three decades we’ve pursued accelerated computing, with the goal of enabling transformative breakthroughs like deep learning and AI,” said Jensen Huang, founder and CEO of NVIDIA. “Generative AI is the defining technology of our time. Blackwell is the engine to power this new industrial revolution. Working with the most dynamic companies in the world, we will realise the promise of AI for every industry.”

The Blackwell GPU architecture introduces six technologies for accelerated computing. NVIDIA says these advances will drive breakthroughs in data processing, engineering simulation, electronic design automation, computer-aided drug design, quantum computing, and generative AI, opening new industry opportunities for the company.

Expected adopters of the Blackwell platform include major organisations such as Amazon Web Services, Dell Technologies, Google, Meta, Microsoft, OpenAI, Oracle, Tesla, and xAI.

Named to honour David Harold Blackwell, a mathematician renowned for his work in game theory and statistics and the first African American inducted into the National Academy of Sciences, Blackwell succeeds the NVIDIA Hopper architecture introduced two years prior.

Blackwell innovations boosting accelerated computing and generative AI

Blackwell's six technologies together enable AI training and real-time large language model (LLM) inference for models of up to 10 trillion parameters. They are:

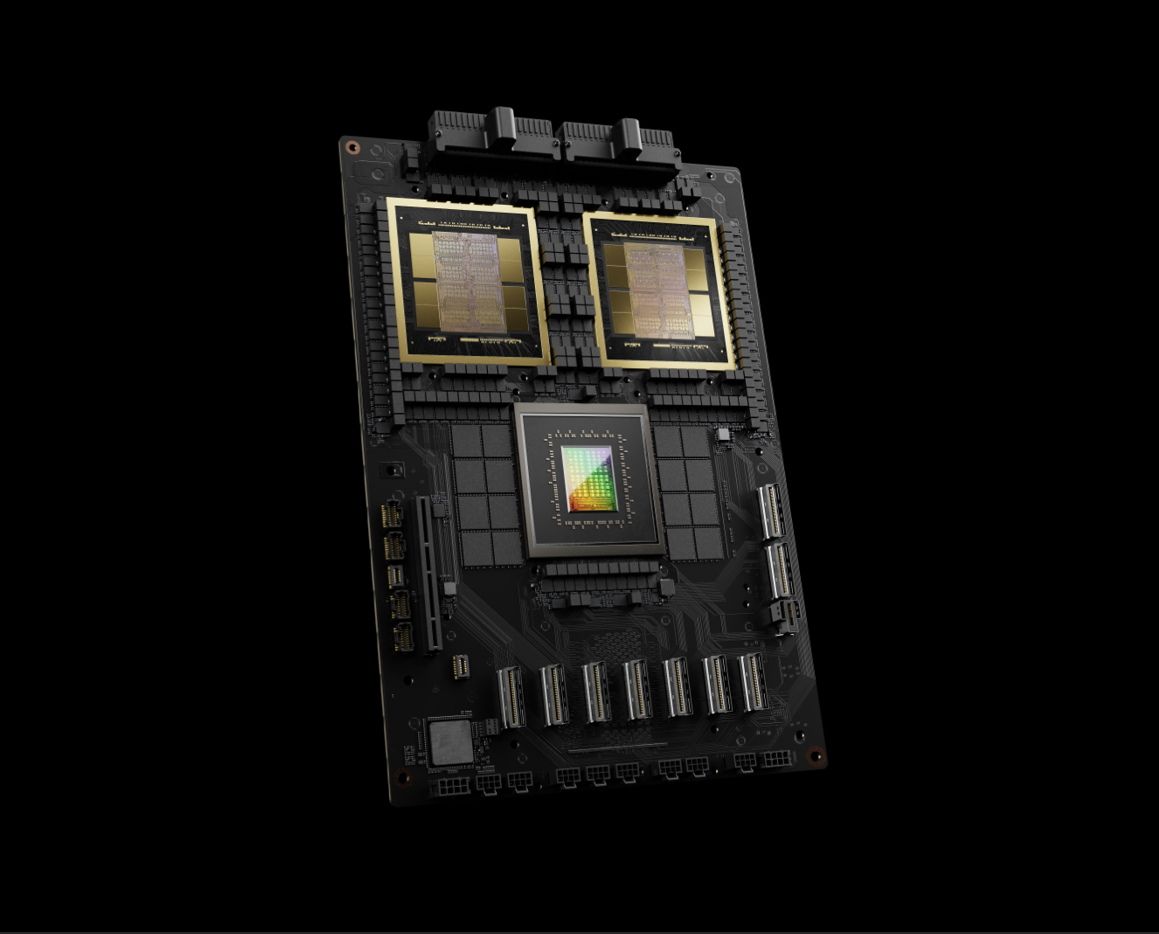

- What NVIDIA calls the world’s most powerful chip, with 208 billion transistors. It is manufactured on a custom-built TSMC 4NP process, with two reticle-limit GPU dies connected by a 10TB/s chip-to-chip link into a single, unified GPU.

- A second-generation Transformer Engine, which uses micro-tensor scaling and NVIDIA’s advanced algorithms to support double the compute and model sizes through new 4-bit floating-point (FP4) AI inference capabilities.

- A fifth-generation NVLink, offering 1.8TB/s bidirectional throughput per GPU, enhances performance for complex LLMs, supporting seamless communication among up to 576 GPUs.

- A dedicated RAS (reliability, availability, and serviceability) engine, which uses AI-based preventative maintenance to run diagnostics and forecast reliability issues, improving system uptime and reducing operating costs.

- Secure AI, with advanced confidential computing capabilities that protect AI models and customer data without compromising performance.

- A dedicated decompression engine that accelerates database queries for data analytics and data science, reflecting NVIDIA’s expectation that data processing will be increasingly GPU-accelerated.
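The Transformer Engine item is the most technical claim in the list. The announcement does not explain how micro-tensor scaling works, so the Python sketch below only illustrates the general idea behind block-scaled 4-bit floating-point quantisation, under stated assumptions: values are snapped to the FP4 E2M1 grid (magnitudes from 0 to 6), every block of 32 values shares one scale factor, and that scale is kept as an ordinary float. All function names are hypothetical and are not part of any NVIDIA library.

```python
# Hypothetical illustration of block-scaled 4-bit floating-point quantisation.
# Assumptions (not from NVIDIA's announcement): the FP4 E2M1 value grid,
# one shared scale per block of 32 values, and scales stored as plain floats
# (a real hardware format may store the scale differently).

FP4_E2M1_MAGNITUDES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
FP4_MAX = 6.0
BLOCK_SIZE = 32


def quantise_block(values):
    """Map one block onto the FP4 grid; return (shared_scale, fp4_values)."""
    amax = max(abs(v) for v in values)
    # Choose the scale so the largest magnitude lands on the top FP4 value.
    scale = amax / FP4_MAX if amax > 0 else 1.0
    codes = []
    for v in values:
        scaled = abs(v) / scale
        # Round to the nearest representable FP4 magnitude, then restore sign.
        nearest = min(FP4_E2M1_MAGNITUDES, key=lambda m: abs(m - scaled))
        codes.append(nearest if v >= 0 else -nearest)
    return scale, codes


def dequantise_block(scale, codes):
    """Recover approximate original values from one quantised block."""
    return [c * scale for c in codes]


def quantise(values, block_size=BLOCK_SIZE):
    """Split a tensor (here a flat list) into blocks and quantise each one."""
    return [quantise_block(values[i:i + block_size])
            for i in range(0, len(values), block_size)]


def dequantise(blocks):
    """Flatten all dequantised blocks back into one list."""
    return [x for scale, codes in blocks for x in dequantise_block(scale, codes)]


if __name__ == "__main__":
    scale, codes = quantise_block([0.1, -0.2, 3.0, 0.0])
    print(scale, codes, dequantise_block(scale, codes))
```

Storing each value in 4 bits rather than the 8 bits of FP8 lets the same memory hold roughly twice as many parameters, plus a small overhead for one scale per block. That is one plausible reading of the "double the model sizes" claim, not a derivation NVIDIA has published.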

A colossal superchip

The NVIDIA GB200 Grace Blackwell Superchip, a cornerstone of NVIDIA’s offering, connects two B200 Tensor Core GPUs to the NVIDIA Grace CPU over a 900GB/s NVLink chip-to-chip interconnect. Paired with the NVIDIA Quantum-X800 InfiniBand and Spectrum-X800 Ethernet networking platforms, it is the top-end system NVIDIA offers for AI performance.

This superchip is the building block of the NVIDIA GB200 NVL72, a liquid-cooled, rack-scale system designed for the most demanding workloads. For LLM inference, it offers up to 30 times the performance of NVIDIA H100 Tensor Core GPUs while cutting cost and energy consumption by up to 25 times.

Global network of Blackwell partners

Blackwell-based products will be accessible through partners later this year, with Cloud service providers like AWS, Google Cloud, Microsoft Azure, and Oracle Cloud Infrastructure among the first to offer Blackwell-powered instances. Additionally, NVIDIA Cloud Partner program companies and various sovereign AI Clouds will provide Blackwell-based services.

The Blackwell portfolio is supported by NVIDIA’s software ecosystem, including NVIDIA AI Enterprise, a comprehensive operating system for AI that bundles inference microservices, frameworks, libraries, and tools for deployment across NVIDIA-accelerated clouds, data centres, and workstations.

Sundar Pichai, CEO of Alphabet and Google: “Scaling services like Search and Gmail to billions of users has taught us a lot about managing compute infrastructure. As we enter the AI platform shift, we continue to invest deeply in infrastructure for our own products and services, and for our Cloud customers. We are fortunate to have a longstanding partnership with NVIDIA and look forward to bringing the breakthrough capabilities of the Blackwell GPU to our Cloud customers and teams across Google, including Google DeepMind, to accelerate future discoveries.”

Andy Jassy, President and CEO of Amazon: “Our deep collaboration with NVIDIA goes back more than 13 years, when we launched the world’s first GPU Cloud instance on AWS. Today we offer the widest range of GPU solutions available anywhere in the Cloud, supporting the world’s most technologically advanced accelerated workloads. It’s why the new NVIDIA Blackwell GPU will run so well on AWS and the reason that NVIDIA chose AWS to co-develop Project Ceiba, combining NVIDIA’s next-generation Grace Blackwell Superchips with the AWS Nitro System’s advanced virtualisation and ultra-fast Elastic Fabric Adapter networking, for NVIDIA’s own AI research and development. Through this joint effort between AWS and NVIDIA engineers, we’re continuing to innovate together to make AWS the best place for anyone to run NVIDIA GPUs in the Cloud.”

Michael Dell, Founder and CEO of Dell Technologies: “Generative AI is critical to creating smarter, more reliable and efficient systems. Dell Technologies and NVIDIA are working together to shape the future of technology. With the launch of Blackwell, we will continue to deliver the next-generation of accelerated products and services to our customers, providing them with the tools they need to drive innovation across industries.”

Demis Hassabis, Co-Founder and CEO of Google DeepMind: “The transformative potential of AI is incredible, and it will help us solve some of the world’s most important scientific problems. Blackwell’s breakthrough technological capabilities will provide the critical compute needed to help the world’s brightest minds chart new scientific discoveries.”

Mark Zuckerberg, Founder and CEO of Meta: “AI already powers everything from our large language models to our content recommendations, ads, and safety systems, and it’s only going to get more important in the future. We’re looking forward to using NVIDIA’s Blackwell to help train our open-source Llama models and build the next generation of Meta AI and consumer products.”

Satya Nadella, Executive Chairman and CEO of Microsoft: “We are committed to offering our customers the most advanced infrastructure to power their AI workloads. By bringing the GB200 Grace Blackwell processor to our data centres globally, we are building on our long-standing history of optimising NVIDIA GPUs for our Cloud, as we make the promise of AI real for organisations everywhere.”

Sam Altman, CEO of OpenAI: “Blackwell offers massive performance leaps and will accelerate our ability to deliver leading-edge models. We’re excited to continue working with NVIDIA to enhance AI compute.”

Larry Ellison, Chairman and CTO of Oracle: “Oracle’s close collaboration with NVIDIA will enable qualitative and quantitative breakthroughs in AI, machine learning and data analytics. In order for customers to uncover more actionable insights, an even more powerful engine like Blackwell is needed, which is purpose-built for accelerated computing and generative AI.”

Elon Musk, CEO of Tesla and xAI: “There is currently nothing better than NVIDIA hardware for AI.”