Designing secure electronic software

In all kinds of software, the development stage is where most future vulnerabilities originate. These can ultimately lead to performance issues or become the ingress point for malicious attacks. The consequences of not dealing with them can be substantial, especially in safety-critical products involving embedded software. Steve Howard of Perforce Software explains.

For instance, buffer overflows are a common issue, typically caused when a program writes more data to a buffer than it can hold and so overwrites adjacent memory it should not be able to access. The result can be corrupted data that causes a program to malfunction or crash. Worse, the flaw can be exploited by a hacker to take control of processes running with super-user privileges, infiltrate the machine and even gain access to other network-connected resources.
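To make the mechanism concrete, here is a minimal C sketch contrasting an unbounded copy with a bounded one; the function names are hypothetical, chosen for illustration. The first function is the classic overflow pattern. The second uses the standard `snprintf`, which never writes more bytes than the size it is given and always terminates the string.

```c
#include <stdio.h>
#include <string.h>

/* UNSAFE, shown for illustration only: strcpy() copies until it reaches
 * the terminating '\0' in src, with no regard for the size of dst. Any
 * src longer than 15 characters writes past the end of a 16-byte buffer,
 * which is undefined behaviour and a potential exploit. */
void copy_name_unsafe(char dst[16], const char *src)
{
    strcpy(dst, src);
}

/* Bounded copy: writes at most dst_size bytes (including the '\0').
 * Returns 0 on success, 1 if the input was truncated, -1 on bad arguments
 * or an encoding error. */
int copy_name_bounded(char *dst, size_t dst_size, const char *src)
{
    if (dst == NULL || dst_size == 0 || src == NULL) {
        return -1;                      /* nothing safe to do */
    }
    int needed = snprintf(dst, dst_size, "%s", src);
    if (needed < 0) {
        return -1;                      /* encoding error */
    }
    /* snprintf returns the length it wanted to write; if that does not
     * fit, the output was cut short (but is still terminated). */
    return ((size_t)needed >= dst_size) ? 1 : 0;
}
```

For example, with a 16-byte `buf`, `copy_name_bounded(buf, sizeof buf, input)` stores at most 15 characters plus the terminator and returns 1 if `input` was truncated, so the caller can decide whether to reject it rather than silently corrupting memory.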

Buffer overflows are only one of many examples, so it is vital to take a ‘security first’ approach to embedded software design. Furthermore, compliance and regulatory requirements often mandate security processes within software development.

However, building embedded software that is bulletproof as far as security risks are concerned can be challenging and time-consuming. A single flaw is enough to undermine the reliability or security of an entire system, and the sheer volume of code involved makes this an uphill struggle. Fortunately, as well as tools to aid developers, there is a wealth of easily accessible information that draws together knowledge from a variety of sources.

The Common Weakness Enumeration

The Common Weakness Enumeration, more usually referred to as the CWE, is a free list of the most common weakness types that lead to vulnerabilities (including a Top 25), compiled by the CWE community. Think of the CWE as a reference book of security weaknesses, covering both hardware and software.

CWE entries related to software are generally language-agnostic, although examples are often given in widely used languages such as C and C++ (both common in embedded software development), Java and C#. The list is maintained by the MITRE Corporation, and the community behind it comprises representatives from major operating system vendors, commercial information security tool vendors, academia, government agencies and research institutions. The full CWE list is updated every few months and includes over 600 categories of software weaknesses.

Common Vulnerabilities and Exposures

Usually abbreviated to CVE, the Common Vulnerabilities and Exposures list covers publicly known cyber security vulnerabilities and risks. Rather than describing generic weakness types, each entry is a specific, publicly reported issue that relates to a particular software product or codebase and to particular versions of it.

A CVE identifier is created for each vulnerability and serves as a standard way of cross-referencing it in other repositories. Each CVE entry includes the identifier number, an indication of status, a brief description and any pertinent references.
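CVE identifiers follow the syntax CVE-YYYY-NNNN, where YYYY is a four-digit year and the sequence number has four or more digits. The C sketch below, with a hypothetical function name `is_valid_cve_id`, checks that a string is well formed before it is used as a cross-reference key. It checks format only and says nothing about whether the identifier has actually been assigned.

```c
#include <ctype.h>
#include <stdbool.h>
#include <string.h>

/* Returns true if id matches "CVE-YYYY-NNNN...": the literal prefix,
 * a four-digit year, a hyphen, then a sequence number of at least four
 * digits and nothing else. Format check only. */
bool is_valid_cve_id(const char *id)
{
    if (id == NULL || strncmp(id, "CVE-", 4) != 0) {
        return false;
    }
    const char *p = id + 4;

    /* Year: exactly four digits followed by a hyphen. Stops early at
     * '\0' because isdigit('\0') is false. */
    for (int i = 0; i < 4; i++) {
        if (!isdigit((unsigned char)p[i])) {
            return false;
        }
    }
    if (p[4] != '-') {
        return false;
    }
    p += 5;

    /* Sequence number: four or more digits, then end of string. */
    size_t digits = 0;
    while (isdigit((unsigned char)p[digits])) {
        digits++;
    }
    return digits >= 4 && p[digits] == '\0';
}
```

For example, `is_valid_cve_id("CVE-2014-0160")` returns true, while `"CVE-2021-123"` (only three sequence digits) and the lower-case `"cve-2021-1234"` are rejected.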

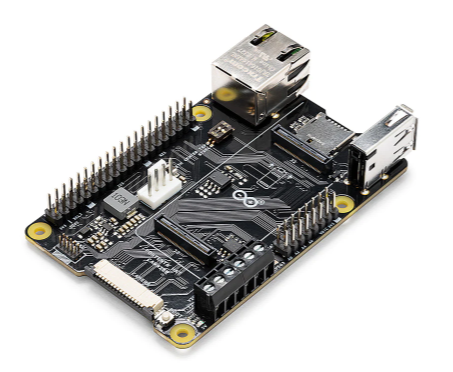

Above: There are a variety of industry resources, tools and techniques to help prevent software vulnerabilities from occurring or escaping into production

Some of the vulnerability types found on the CVE list include: buffer overflow; code execution; cross-site scripting (XSS); denial of service (DoS); directory traversal; HTTP response splitting; memory corruption; and SQL injection. In turn, these defects and the ways they manifest feed back into updates to the CWE list.

The difference between CVE and CVSS is this: CVE is a list of vulnerabilities, while CVSS (the Common Vulnerability Scoring System) is a method for scoring the severity of a particular vulnerability. They work together to help prioritise software vulnerabilities.

Closely synchronised with the CVE is the National Vulnerability Database (NVD). While this is the US Government’s repository of standards-based vulnerability management data, it is still relevant to organisations elsewhere in the world.

Common Vulnerability Scoring System (CVSS)

The CVSS is an open industry standard for assessing the severity of software vulnerabilities, with each vulnerability given a score from 0.0 (the lowest) to 10.0 (the highest). The aim is to help companies prioritise which vulnerabilities need the most attention. For instance, 0.0 indicates no risk, 4.0-6.9 is medium risk, and 9.0 and above indicates critical risk. CVSS metrics fall under three main categories: base, temporal and environmental.
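Bands like these come from the qualitative severity rating scale in CVSS version 3.x, which also defines Low (0.1-3.9) and High (7.0-8.9) and places Critical at 9.0-10.0. A minimal C sketch of that mapping, assuming scores have already been rounded to one decimal place as CVSS specifies; the enum and function names are hypothetical:

```c
/* Qualitative severity ratings from the CVSS v3.x specification. */
typedef enum {
    CVSS_INVALID,   /* outside 0.0-10.0, or not a number */
    CVSS_NONE,      /* 0.0 */
    CVSS_LOW,       /* 0.1-3.9 */
    CVSS_MEDIUM,    /* 4.0-6.9 */
    CVSS_HIGH,      /* 7.0-8.9 */
    CVSS_CRITICAL   /* 9.0-10.0 */
} cvss_severity;

/* Map a CVSS v3.x score (already rounded to one decimal) to its rating. */
cvss_severity cvss_v3_severity(double score)
{
    /* Written as a negated range test so that NaN is also rejected. */
    if (!(score >= 0.0 && score <= 10.0)) return CVSS_INVALID;
    if (score == 0.0) return CVSS_NONE;
    if (score < 4.0)  return CVSS_LOW;
    if (score < 7.0)  return CVSS_MEDIUM;
    if (score < 9.0)  return CVSS_HIGH;
    return CVSS_CRITICAL;
}
```

Sorting findings by this rating, and then by raw score within a rating, gives a simple first cut at the prioritisation the scale is designed to support.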

Base metrics are divided into exploitability and impact. Exploitability metrics refer to the characteristics that put the software at risk, such as how easy or difficult it is to exploit the vulnerability, how many times an attacker must authenticate to the target to exploit it, and the level of privileges an attacker must obtain before being able to carry out malicious actions. Impact metrics address the worst-case consequences of a successful exploit for the confidentiality of data, the integrity of the exploited system and its availability.
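The exploitability factors described here, including the number of times an attacker must authenticate, are those of CVSS version 2, whose base equation combines them with the three impact metrics. The C sketch below transcribes that equation with the v2 metric weights. The macro and function names are illustrative, and the code is meant to show how the pieces fit together rather than to replace an official calculator.

```c
/* CVSS v2 base metric weights, transcribed from the v2 specification. */
/* Access Vector (AV) */
#define AV_LOCAL      0.395
#define AV_ADJACENT   0.646
#define AV_NETWORK    1.0
/* Access Complexity (AC) */
#define AC_HIGH       0.35
#define AC_MEDIUM     0.61
#define AC_LOW        0.71
/* Authentication (Au): how many times the attacker must authenticate */
#define AU_MULTIPLE   0.45
#define AU_SINGLE     0.56
#define AU_NONE       0.704
/* Confidentiality, Integrity and Availability impact (C, I, A) */
#define IMP_NONE      0.0
#define IMP_PARTIAL   0.275
#define IMP_COMPLETE  0.660

/* Round a non-negative value to one decimal place (half up). */
static double round_1dp(double x)
{
    return (double)(long)(x * 10.0 + 0.5) / 10.0;
}

/* Impact sub-score: worst-case effect on C, I and A. */
double cvss2_impact(double c, double i, double a)
{
    return 10.41 * (1.0 - (1.0 - c) * (1.0 - i) * (1.0 - a));
}

/* Exploitability sub-score: how easily the flaw can be reached and used. */
double cvss2_exploitability(double av, double ac, double au)
{
    return 20.0 * av * ac * au;
}

/* Base score: weighted combination of the two sub-scores, forced to 0.0
 * when the vulnerability has no impact at all. */
double cvss2_base_score(double av, double ac, double au,
                        double c, double i, double a)
{
    double impact = cvss2_impact(c, i, a);
    double exploitability = cvss2_exploitability(av, ac, au);
    double f_impact = (impact == 0.0) ? 0.0 : 1.176;
    return round_1dp((0.6 * impact + 0.4 * exploitability - 1.5) * f_impact);
}
```

For a remotely exploitable flaw with low complexity and no authentication (AV:N/AC:L/Au:N) and partial impact on confidentiality, integrity and availability, `cvss2_base_score(AV_NETWORK, AC_LOW, AU_NONE, IMP_PARTIAL, IMP_PARTIAL, IMP_PARTIAL)` gives 7.5; complete impact on all three gives 10.0.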

Temporal and environmental metrics

The values of temporal metrics within the CVSS change over time, as exploits are developed and discovered and as vulnerabilities are fixed, whether completely or partially. These metrics cover the current state of exploitability and the availability of mitigations and official fixes. They also capture the level of confidence in the existence of the vulnerability and the credibility of the associated technical details.
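In CVSS version 2, the three temporal factors described above (Exploitability, Remediation Level and Report Confidence) simply scale the base score. A minimal C sketch using the v2 weights; the macro and function names are hypothetical, and a value of Not Defined counts as 1.00 for each factor.

```c
/* CVSS v2 temporal metric weights. Not Defined = 1.00 for all three. */
/* Exploitability (E): maturity of available exploit code */
#define E_UNPROVEN           0.85
#define E_PROOF_OF_CONCEPT   0.90
#define E_FUNCTIONAL         0.95
#define E_HIGH               1.00
/* Remediation Level (RL): what fix or mitigation exists */
#define RL_OFFICIAL_FIX      0.87
#define RL_TEMPORARY_FIX     0.90
#define RL_WORKAROUND        0.95
#define RL_UNAVAILABLE       1.00
/* Report Confidence (RC): how well the vulnerability is confirmed */
#define RC_UNCONFIRMED       0.90
#define RC_UNCORROBORATED    0.95
#define RC_CONFIRMED         1.00

/* Temporal score: the base score scaled by the three temporal factors,
 * rounded half up to one decimal place (inputs are non-negative). */
double cvss2_temporal_score(double base, double e, double rl, double rc)
{
    double t = base * e * rl * rc;
    return (double)(long)(t * 10.0 + 0.5) / 10.0;
}
```

For example, a vulnerability with a base score of 7.5, a functional exploit (`E_FUNCTIONAL`) and a confirmed report (`RC_CONFIRMED`) drops to 6.2 once an official fix (`RL_OFFICIAL_FIX`) is available, which is how the score follows the state of fixes over time.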

The third category, environmental metrics, combines the base and temporal scores with characteristics of the environment in which the software is deployed, to assess as accurately as possible the severity of a vulnerability in that specific context. For instance, ‘Collateral Damage Potential’ measures the potential loss of physical assets (such as equipment) or the financial impact, were the vulnerability to be exploited.
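In CVSS version 2, the final environmental step starts from an adjusted temporal score (the temporal score recomputed after weighting the confidentiality, integrity and availability impacts by the organisation’s security requirements). It then applies Collateral Damage Potential and Target Distribution, the proportion of vulnerable systems in the environment. The C sketch below covers only that last step and takes the adjusted temporal score as an input; the names are hypothetical, and Not Defined corresponds to a CDP of 0 and a TD of 1.0.

```c
/* CVSS v2 environmental weights. */
/* Collateral Damage Potential (CDP); Not Defined = 0.0 */
#define CDP_NONE          0.0
#define CDP_LOW           0.1
#define CDP_LOW_MEDIUM    0.3
#define CDP_MEDIUM_HIGH   0.4
#define CDP_HIGH          0.5
/* Target Distribution (TD); Not Defined = 1.0 */
#define TD_NONE           0.0
#define TD_LOW            0.25
#define TD_MEDIUM         0.75
#define TD_HIGH           1.0

/* Final environmental step: CDP pulls the score towards 10 in proportion
 * to the potential collateral damage, then TD scales it by how much of
 * the environment is actually exposed. Rounded half up to one decimal. */
double cvss2_environmental_score(double adjusted_temporal,
                                 double cdp, double td)
{
    double env = (adjusted_temporal
                  + (10.0 - adjusted_temporal) * cdp) * td;
    return (double)(long)(env * 10.0 + 0.5) / 10.0;
}
```

With an adjusted temporal score of 6.2, high collateral damage potential (`CDP_HIGH`) and high target distribution (`TD_HIGH`) raise the environmental score to 8.1, while `TD_NONE` (no vulnerable systems in the environment) reduces it to 0.0.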

Other sources

While the CWE, CVE and CVSS are free resources, there are others that require some investment but can bring huge benefits in reducing the risks surrounding software vulnerabilities. These include industry standards such as IEC 62443, a set of international security standards used to defend industrial networks against cyber security threats. Another standard, currently under development and expected for release during 2021, is ISO/SAE 21434, which will focus on the cyber security risks of electronic systems in road vehicles.

Coding standards are another resource, giving software engineers a set of ‘rules’ or guidelines against which they can develop their code with confidence. As well as supporting the creation of more secure code, their use may be mandated for regulatory compliance. For instance, while the automotive functional safety standard ISO 26262 does not name a specific coding standard, it does require one to be used; MISRA or AUTOSAR are typically chosen in that industry.

A coding standard widely used across multiple industries is CERT, a secure coding standard that covers commonly used programming languages such as C, C++ and Java. Each guideline within the standard includes a risk assessment, to help determine the possible consequences of violating that specific rule or recommendation. CERT is maintained by the CERT Division of the Software Engineering Institute at Carnegie Mellon University in the US.
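As an illustration of the kind of rule CERT contains, rule INT32-C in the CERT C standard requires that operations on signed integers do not overflow, because signed overflow is undefined behaviour in C. A minimal sketch of a precondition check for addition in that style; the function name `checked_add_int` is hypothetical.

```c
#include <limits.h>
#include <stdbool.h>

/* Adds a and b only if the result is representable as an int.
 * The test is done BEFORE the addition, since detecting overflow
 * afterwards would already rely on undefined behaviour.
 * Returns false (leaving *result untouched) if the sum would overflow. */
bool checked_add_int(int a, int b, int *result)
{
    if ((b > 0 && a > INT_MAX - b) ||   /* would exceed INT_MAX */
        (b < 0 && a < INT_MIN - b)) {   /* would go below INT_MIN */
        return false;
    }
    *result = a + b;
    return true;
}
```

For example, `checked_add_int(INT_MAX, 1, &r)` returns false and leaves `r` unchanged, instead of silently wrapping or triggering undefined behaviour.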

Fixing vulnerabilities

To find vulnerabilities and meet the requirements of all these sources, an increasing number of organisations in the electronics industry use static application security testing (SAST), a methodology for inspecting and analysing application source code to uncover security vulnerabilities. SAST, also known as ‘white box testing’, uses tools that scan an application’s code throughout the development process and provide in-depth analysis to identify defects, vulnerabilities and compliance issues. Think of SAST as the first line of defence, pointing developers to exactly where a problem lies.

SAST can catch security issues such as SQL injection, which could compromise the availability and integrity of an application’s service. It can also be used to improve code before it is released, helping to reduce the cost of later diagnostics and remedial action. Importantly, SAST alerts developers to faulty code, rather than leaving them to check all of that code manually.
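The sketch below shows, in plain C with no database library, the pattern a SAST tool typically flags as SQL injection: untrusted input copied straight into the query text. The table and column names are hypothetical. Passing the name `x' OR '1'='1` produces `SELECT id FROM users WHERE name = 'x' OR '1'='1';`, whose WHERE clause matches every row. The allow-list check is only a minimal mitigation sketch; the robust fix is a parameterised query, for example `sqlite3_prepare_v2` with `sqlite3_bind_text` in SQLite’s C API.

```c
#include <ctype.h>
#include <stdbool.h>
#include <stdio.h>

/* VULNERABLE pattern: untrusted 'name' is pasted into the SQL text, so a
 * quote character in it can change the structure of the query.
 * Returns the length snprintf wanted to write. Hypothetical schema. */
int build_query_unsafe(char *out, size_t out_size, const char *name)
{
    return snprintf(out, out_size,
                    "SELECT id FROM users WHERE name = '%s';", name);
}

/* Minimal allow-list: accept only non-empty strings of letters, digits
 * and underscores. Narrows the attack surface, but is no substitute for
 * binding the value as a query parameter. */
bool is_safe_identifier(const char *s)
{
    if (s == NULL || *s == '\0') {
        return false;
    }
    for (; *s != '\0'; s++) {
        if (!isalnum((unsigned char)*s) && *s != '_') {
            return false;
        }
    }
    return true;
}
```

A SAST tool following the data flow would report the call to `build_query_unsafe` wherever its `name` argument originates from user input that has not passed a check such as `is_safe_identifier`.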

Successful software testing

There are a few steps to achieving successful SAST in practice. First, choose a SAST tool (typically a static code analyser) that supports the languages in which your applications are written. Next, prepare the scanning environment by setting up access controls and authorisation mechanisms, and by procuring all the resources needed to deploy the SAST tools, such as databases and servers.

Make sure to choose a SAST tool that can integrate into the existing software build environment, and implement dashboards where scan results can be tracked and reports generated. Consider customisation, such as writing new rules to detect additional security vulnerabilities. Once the SAST environment has been set up, onboard applications, prioritising those with the highest associated risks, although it is essential to scan all applications regularly. After each code scan, remove false positives from the results, then pass the triaged results to colleagues or other teams for any remediation required.
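To show what a custom rule can look like at its simplest, here is a deliberately naive C sketch that flags lines calling functions from a hypothetical banned list (`gets`, `strcpy`, `sprintf`). Real SAST tools parse the code and track data flow rather than matching text, so treat this as an illustration of the idea, not of how any particular product works.

```c
#include <ctype.h>
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical banned-function list, for this illustration only. */
static const char *const BANNED[] = { "gets", "strcpy", "sprintf" };

static bool is_ident_char(char c)
{
    return isalnum((unsigned char)c) || c == '_';
}

/* True if 'name' occurs in 'line' as a whole identifier followed
 * (optionally after spaces or tabs) by '(', i.e. it looks like a call. */
static bool looks_like_call(const char *line, const char *name)
{
    size_t n = strlen(name);
    for (const char *p = strstr(line, name); p != NULL;
         p = strstr(p + 1, name)) {
        bool starts_word = (p == line) || !is_ident_char(p[-1]);
        const char *q = p + n;
        while (*q == ' ' || *q == '\t') {
            q++;
        }
        if (starts_word && *q == '(') {
            return true;
        }
    }
    return false;
}

/* Scans n_lines source lines. Returns the number of flagged lines and
 * stores the 1-based line numbers of the first max_hits findings. */
size_t scan_for_banned_calls(const char *const lines[], size_t n_lines,
                             size_t hits[], size_t max_hits)
{
    size_t found = 0;
    for (size_t i = 0; i < n_lines; i++) {
        for (size_t b = 0; b < sizeof BANNED / sizeof BANNED[0]; b++) {
            if (looks_like_call(lines[i], BANNED[b])) {
                if (found < max_hits) {
                    hits[found] = i + 1;
                }
                found++;
                break;          /* one finding per line is enough */
            }
        }
    }
    return found;
}
```

Run over the lines `strcpy(buf, input);` and `/* never call gets (buf) */`, it flags both. The second is a false positive inside a comment, exactly the kind of result that should be removed during triage before findings are passed on. A call to `fgets` is not flagged because the match must start at an identifier boundary, and `snprintf` does not match any banned name.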

Securing embedded software, and software in the electronics industry in general, is a multi-faceted and ever-evolving challenge. Making the most of the expert resources available, such as the CERT coding standard, together with best-practice prevention techniques, such as SAST tools, will go a long way towards mitigating many of the risks, particularly those that stem from the development process.