This technology, detailed in the journal Nature Communications, holds the promise of restoring communication abilities to individuals with severe neurological disorders.

Gregory Cogan, Ph.D., a professor of neurology at Duke University’s School of Medicine and a lead researcher on the project, highlighted the potential impact of this technology: “There are many patients who suffer from debilitating motor disorders, like ALS or locked-in syndrome, that can impair their ability to speak. But the current tools available to allow them to communicate are generally very slow and cumbersome.”

Current speech decoding technologies lag behind natural speech rates, operating at about 78 words per minute compared to the average human speech rate of approximately 150 words per minute. This significant gap is due, in part, to limitations in brain activity sensors, which have historically been few in number and unable to provide sufficiently detailed information.

(Photo by Dan Vahaba/Duke University)

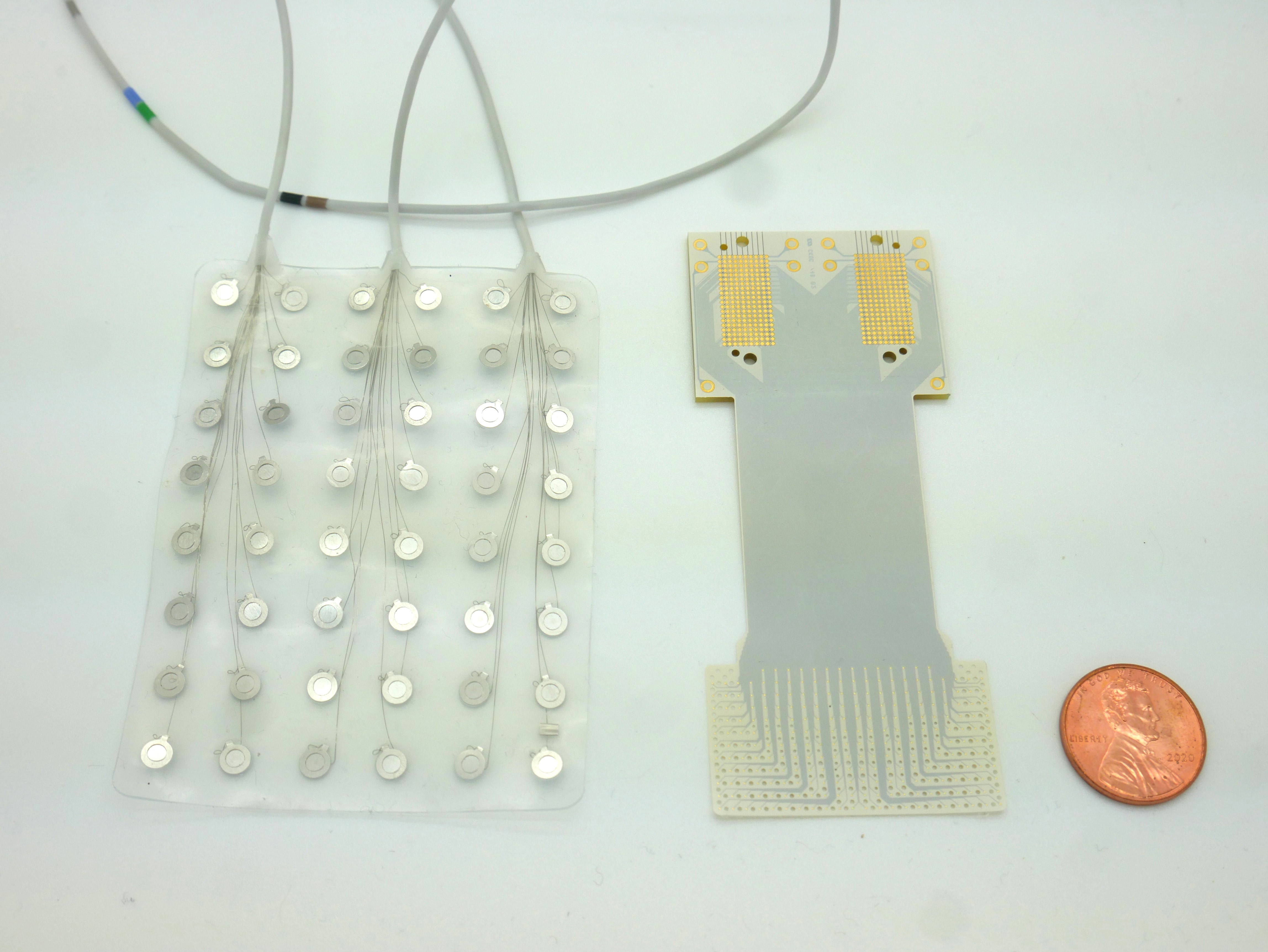

To overcome these limitations, Cogan collaborated with Jonathan Viventi, Ph.D., whose lab at the Duke Institute for Brain Sciences specialises in high-density, ultra-thin, flexible brain sensors. Viventi’s team packed 256 microscopic sensors onto a small piece of medical-grade plastic, making it possible to distinguish signals from neighbouring brain cells involved in coordinating speech.

Cogan likened the urgency and precision of their work in the operating room to that of a NASCAR pit crew: “We don’t want to add any extra time to the operating procedure, so we had to be in and out within 15 minutes. As soon as the surgeon and the medical team said ‘Go!’ we rushed into action and the patient performed the task.”

The team tested the device on patients undergoing brain surgery for other conditions, such as treatment for Parkinson’s disease or removal of a tumour. The patients performed a listen-and-repeat task with nonsense words while the device recorded activity from the speech motor cortex.

Suseendrakumar Duraivel, the first author of the report and a biomedical engineering graduate student at Duke, fed the neural and speech data into a machine learning algorithm. The resulting decoder’s accuracy varied: it reached a notable 84% for certain sounds but dropped when it had to parse sounds in the middle or at the end of a word, or when two sounds were similar.

Despite these challenges, the decoder’s overall accuracy of 40% is a promising start, especially considering that it worked with only 90 seconds of spoken data. The team, buoyed by a recent $2.4m grant from the National Institutes of Health, is now developing a cordless version of the device.

Compared to current speech prosthetics with 128 electrodes (left), Duke engineers have developed a new device that accommodates twice as many sensors in a significantly smaller footprint (photo by Dan Vahaba/Duke University)

“We’re now developing the same kind of recording devices, but without any wires,” Cogan said. “You’d be able to move around, and you wouldn’t have to be tied to an electrical outlet, which is really exciting.”

The technology is still in its early stages, and Jonathan Viventi acknowledged the current speed limitations while remaining optimistic about its prospects. “We’re at the point where it’s still much slower than natural speech,” he said. “But you can see the trajectory where you might be able to get there.”

This development opens a new frontier in assistive technology, offering hope and a voice to those who have long been without one.