Intra-vehicle connectivity requires an in-vehicle gateway that interconnects multiple Electronic Control Units (ECUs) across various networking protocols. As bandwidth needs grow, Ethernet is becoming a critical part of the vehicle network. Extra-vehicle connectivity over upcoming 5G networks enables flexible distribution of workloads between in-vehicle and edge computers, and extends the role of the vehicle to a compute node in a larger cloud environment. This enables a range of connectivity use cases, from pragmatic over-the-air (OTA) software updates to a complex Service Oriented Architecture (SOA) for autonomous driving.

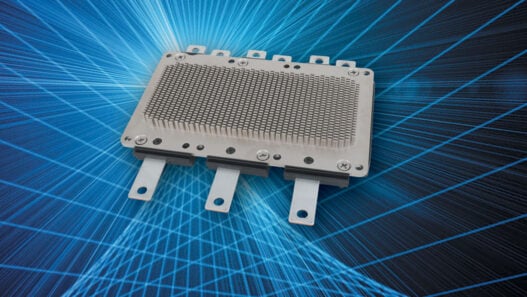

HPC gateways

Automation of many parts of the vehicle makes driving safer and more convenient. As simple driver assistance systems evolve into more complex autonomous driving systems, the need for higher-performance computing increases, both to process more incoming data and to coordinate ECUs across the in-vehicle network. There are many ways to consolidate and distribute workloads, including those with mixed safety and security requirements, between multiple ECUs. A high-performance computing (HPC) gateway, combined with a proper SOA approach, enables flexible system deployment and upgradability. With central access to a connected vehicle’s data, an HPC gateway can help unlock the value of this data.

Electrification is the result of environmental and regulatory pressure to reduce carbon dioxide emissions by replacing the internal combustion engine with an electric powertrain. At the same time, it is a catalyst for demand for better connectivity and more automation. Because an electric powertrain needs more ECUs, connectivity between the ECUs and to the cloud becomes important for battery and range management, data analytics, and over-the-air feature updates and upgrades.

Software architecture for HPC gateway

Service Oriented Architecture (SOA) may sound like an abstract idea, but the concept is more practical than ever thanks to the industry effort around the AUTOSAR Adaptive Platform. AUTOSAR (AUTomotive Open System ARchitecture) is a worldwide development partnership of vehicle manufacturers, suppliers, service providers and companies from the automotive electronics, semiconductor and software industries.

Since its inception in 2003, the AUTOSAR partnership has successfully led the standardisation of software architecture for deeply embedded ECUs, based on the AUTOSAR Classic Platform. With the rapid advance of Advanced Driver Assistance Systems (ADAS) and autonomous driving hardware and software, the partnership has defined a new standard, the AUTOSAR Adaptive Platform, based on modern computing concepts such as the POSIX API, flexible application lifecycle and execution management, and SOA.

With an SOA, services (units of logic) can be found on-board or off-board through a common IPC API at the application level. This abstracts away the heterogeneous hardware and software environment, giving developers the flexibility to distribute and consolidate workloads within the vehicle ECU network, or even outside the vehicle as low-latency edge computing advances.

The foundation of SOA is the communication protocol. AUTOSAR has defined two protocol bindings as part of the standard: SOME/IP and DDS. Both protocols typically run on a UDP/TCP/IP stack to handle the bandwidth required for modern ADAS and autonomous driving systems. The reliability and performance of the underlying network stack therefore have a critical impact on overall system stability.
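To make the SOME/IP binding more concrete, the sketch below packs and unpacks the fixed 16-byte SOME/IP message header that precedes every payload on the UDP or TCP transport. It is a minimal illustration, not an AUTOSAR implementation: the function names and the example service, method, client and session IDs are assumptions chosen for this example.

```python
import struct

# SOME/IP header, 16 bytes, big-endian:
#   service ID (16 bit) | method ID (16 bit) | length (32 bit)
#   client ID (16 bit)  | session ID (16 bit)
#   protocol version (8) | interface version (8) | message type (8) | return code (8)
SOMEIP_HEADER = struct.Struct(">HHIHHBBBB")

PROTOCOL_VERSION = 0x01
MSG_TYPE_REQUEST = 0x00
RETURN_CODE_OK = 0x00


def pack_someip(service_id, method_id, client_id, session_id, payload,
                interface_version=1, message_type=MSG_TYPE_REQUEST):
    """Build a SOME/IP message: header followed by the raw payload."""
    # The length field counts everything after itself:
    # the remaining 8 header bytes plus the payload.
    length = 8 + len(payload)
    header = SOMEIP_HEADER.pack(service_id, method_id, length,
                                client_id, session_id,
                                PROTOCOL_VERSION, interface_version,
                                message_type, RETURN_CODE_OK)
    return header + payload


def unpack_someip(message):
    """Split a SOME/IP message into its header fields and payload."""
    (service_id, method_id, length, client_id, session_id,
     protocol_version, interface_version,
     message_type, return_code) = SOMEIP_HEADER.unpack_from(message)
    payload = message[SOMEIP_HEADER.size:SOMEIP_HEADER.size + length - 8]
    return {
        "service_id": service_id,
        "method_id": method_id,
        "client_id": client_id,
        "session_id": session_id,
        "protocol_version": protocol_version,
        "interface_version": interface_version,
        "message_type": message_type,
        "return_code": return_code,
        "payload": payload,
    }
```

A request built with `pack_someip(0x1234, 0x0001, 0x0010, 0x0001, b"\x01\x02")` (hypothetical IDs) can be sent as-is in a UDP datagram or written to a TCP stream, which is why the behaviour of the underlying IP stack shows up directly in service latency and throughput.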

Table 1 shows VxWorks’ network throughput on an NXP LS1043A-RDB board with a gigabit Ethernet interface. A few things are worth noting:

- For average packet sizes, throughput is at or near line-rate.

- In many instances, the performance is better than that of Linux.

- Throughput numbers for the single-core configuration are essentially the same as for the four-core configuration. Even if the network load saturated one core, which it does not, three cores would remain available for compute and other tasks when all four cores are enabled.

- All measurements are done using iperf3.
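As a reference point for what "line-rate" means on a gigabit link, the following sketch computes the theoretical upper bound on application-level throughput for a given payload size. It assumes IPv4, untagged Ethernet frames and no IP or TCP options; the function and constant names are illustrative and are not taken from iperf3 or VxWorks.

```python
# Per-frame Ethernet overhead in bytes: preamble + SFD (8), MAC header (14),
# frame check sequence (4) and inter-frame gap (12).
ETH_OVERHEAD = 8 + 14 + 4 + 12
ETH_MIN_PAYLOAD = 46  # shorter Ethernet payloads are padded up to this size
IPV4_HEADER = 20      # assumption: no IP options
TCP_HEADER = 20       # assumption: no TCP options (timestamps would add 12 bytes)
UDP_HEADER = 8


def max_goodput_mbps(payload_bytes, l4_header, link_mbps=1000.0):
    """Upper bound on application throughput (Mbit/s) for one payload size."""
    ip_packet = payload_bytes + l4_header + IPV4_HEADER
    # Bytes the link is occupied for each frame, including padding and gaps.
    wire_bytes = max(ip_packet, ETH_MIN_PAYLOAD) + ETH_OVERHEAD
    return link_mbps * payload_bytes / wire_bytes


if __name__ == "__main__":
    for size in (64, 256, 512, 1024, 1460):
        bound = max_goodput_mbps(size, TCP_HEADER)
        print(f"TCP payload {size:5d} B: {bound:7.1f} Mbit/s")
```

Under these assumptions a full-size 1460-byte TCP payload tops out at roughly 949 Mbit/s, so a measured value close to that figure is effectively at line-rate, while small payloads are bounded well below 1 Gbit/s by per-frame overhead alone.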

Network stack performance is a good indicator of real-world behaviour, given the complexity of the TCP/IP software stack: the number of processes and tasks involved, the system calls to be handled, and the memory buffers exchanged with complex event and ownership synchronisation.

Table 1 – Network throughput for NXP LS1043A-RDB

Built on the solid foundation of the Adaptive AUTOSAR middleware and a performant networking stack, application software for HPC gateways can be developed and deployed flexibly. For example, initial development may use sensor fusion services provided by external ECUs; as the hardware design stabilises and application scenarios mature, the sensor fusion service can be brought into the HPC gateway itself without radical changes to the rest of the applications, assuming the software was designed around the service discovery protocol provided by the Adaptive AUTOSAR standard.
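The relocation scenario can be illustrated with a toy service registry. This is a conceptual sketch only: it does not use the AUTOSAR `ara::com` API, and every class and function name below (`ServiceRegistry`, `RemoteSensorFusion`, `LocalSensorFusion`, `plan_following_distance`) is hypothetical. The point is that the application looks the service up by name, so the provider can move without the application changing.

```python
class ServiceRegistry:
    """Toy stand-in for service discovery: service name -> current provider."""

    def __init__(self):
        self._providers = {}

    def offer(self, name, provider):
        # A later offer for the same name replaces the earlier provider.
        self._providers[name] = provider

    def find(self, name):
        return self._providers[name]


class RemoteSensorFusion:
    """Stands in for a proxy that would forward requests to an external ECU."""
    location = "external-ecu"

    def fuse(self, radar_m, camera_m):
        return (radar_m + camera_m) / 2.0


class LocalSensorFusion:
    """Same service interface, hosted on the HPC gateway itself."""
    location = "hpc-gateway"

    def fuse(self, radar_m, camera_m):
        return (radar_m + camera_m) / 2.0


def plan_following_distance(registry, radar_m, camera_m):
    """Application code: depends on the service name, not on where it runs."""
    fusion = registry.find("SensorFusion")
    return fusion.fuse(radar_m, camera_m)


if __name__ == "__main__":
    registry = ServiceRegistry()

    registry.offer("SensorFusion", RemoteSensorFusion())  # early development
    print(registry.find("SensorFusion").location,
          plan_following_distance(registry, 40.0, 42.0))

    registry.offer("SensorFusion", LocalSensorFusion())   # after consolidation
    print(registry.find("SensorFusion").location,
          plan_following_distance(registry, 40.0, 42.0))
```

In a real system the registry role would be played by the middleware's service discovery, and the remote provider would be a generated proxy rather than a local object; the averaging in `fuse` is a placeholder for an actual fusion algorithm.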

Multi-OS and mixed criticality applications

HPC gateways may host various types of applications with different levels of safety and security. Fundamental gateway functions include translating protocols and routing data between different types of vehicle networks. However, as CPU computing power increases and hardware-based packet processing frees more CPU bandwidth for additional tasks, a stronger partitioning technology can help make the system design more robust.
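The basic gateway function of translating and routing between networks can be sketched as a table lookup followed by re-encapsulation. The example below forwards selected CAN frames towards Ethernet destinations. The routing table contents, the IP addresses and ports, and the 5-byte encapsulation header are all invented for illustration and do not correspond to any standard or product format.

```python
import struct
from typing import NamedTuple, Optional, Tuple


class CanFrame(NamedTuple):
    can_id: int
    data: bytes


class Route(NamedTuple):
    dest_ip: str
    dest_port: int


# Hypothetical static routing table: CAN identifier -> Ethernet destination.
ROUTES = {
    0x100: Route("10.0.0.20", 30501),  # e.g. wheel speed to an ADAS ECU
    0x200: Route("10.0.0.30", 30502),  # e.g. battery state to a telematics unit
}


def translate(frame: CanFrame) -> Optional[Tuple[Route, bytes]]:
    """Return (route, UDP payload) for a routable frame, or None to drop it."""
    route = ROUTES.get(frame.can_id)
    if route is None:
        return None  # frames without a route are not forwarded
    if len(frame.data) > 8:
        raise ValueError("classic CAN frames carry at most 8 data bytes")
    # Illustrative encapsulation: 4-byte CAN ID, 1-byte length, then the data.
    payload = struct.pack(">IB", frame.can_id, len(frame.data)) + frame.data
    return route, payload
```

The returned payload would then be handed to a UDP socket bound for `route.dest_ip` and `route.dest_port`. Dropping unrouted frames by default is a deliberate choice here, since a gateway that forwards only what is explicitly listed is easier to reason about from a security standpoint.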

For example, an algorithm developed with a machine learning approach may have been prototyped and validated in a Linux environment. Instead of porting the entire application to a different environment, it can be used as-is in a “Linux guest” sandboxed by the hypervisor. Depending on the configuration, this can provide an identical execution environment for the AI (Artificial Intelligence) applications, minimising the effort of porting and validating applications developed in the lab environment.

The other scenario is enhanced security through stronger partitioning. A Linux or VxWorks guest OS, acting as a firewall, can be given exclusive access to the Ethernet controller or modem facing the outside world, with an enhanced security stack of its own. A health and/or sanity monitor running outside that guest OS can then provide an extra layer of intrusion detection and damage management, including resetting the guest OS.
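One simple form of external health monitoring is a heartbeat watchdog: the guest reports periodically, and a monitor outside the guest resets it when the reports stop. The sketch below shows only that logic. The `GuestHealthMonitor` class and the `reset_guest` callback are hypothetical and do not represent the Helix Virtualisation Platform API; in a real system the callback would invoke whatever reset mechanism the hypervisor provides.

```python
import time


class GuestHealthMonitor:
    """Resets a guest OS when its heartbeat has been silent for too long."""

    def __init__(self, timeout_s, reset_guest, clock=time.monotonic):
        self._timeout_s = timeout_s
        self._reset_guest = reset_guest  # hypothetical hook into the hypervisor
        self._clock = clock              # injectable so the logic can be tested
        self._last_beat = clock()
        self.resets = 0

    def heartbeat(self):
        """Called on behalf of the guest each time it reports that it is alive."""
        self._last_beat = self._clock()

    def poll(self):
        """Check the deadline; reset the guest and return True if it was missed."""
        if self._clock() - self._last_beat > self._timeout_s:
            self._reset_guest()
            self.resets += 1
            # Restart the grace period so the rebooting guest is not reset again
            # before it has had a chance to report.
            self._last_beat = self._clock()
            return True
        return False
```

The monitor would typically call `poll()` from a periodic task in a partition that the guest cannot influence. A production monitor would likely add further sanity checks, such as traffic-rate or memory-integrity checks, rather than relying on liveness alone.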

In either case, a safety OS can host the workloads with the highest level of criticality, completely separated from the rest of the partitions, with freedom from interference provided by the robust partitioning of Wind River Helix Virtualisation Platform.

Conclusion

Developing an HPC gateway in a heterogeneous hardware and software environment can be a daunting task. The ever-increasing computing power of modern SoCs, combined with the complexity of state-of-the-art AI technology and varying industry opinions on the optimal functional safety architecture, only makes things more complicated.

Service Oriented Architecture based on the Adaptive AUTOSAR standard provides flexible workload management with strong industry support. Helix Virtualisation Platform offers more design choices, with robust partitioning technology for a pragmatic functional safety architecture and an added layer of protection for cyber security.