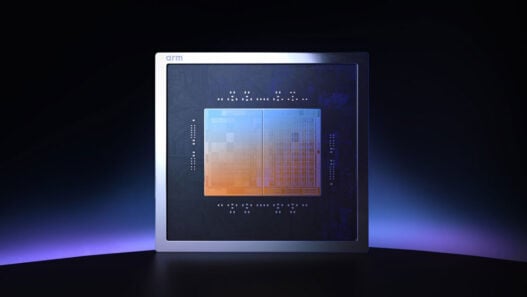

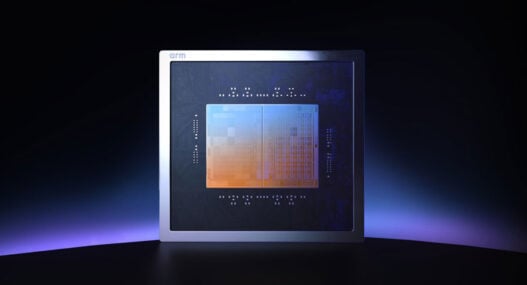

For the first time in its storied history, Arm will move into making its own semiconductor devices.

This seismic shift begins with the launch of the Arm AGI CPU, an Arm-designed CPU for AI data centres, built to address a rising class of agentic AI workloads.

“AI has fundamentally redefined how computing is built and deployed. Agentic computing is accelerating that change,” said Rene Haas, CEO, Arm. “Today marks the next phase of the Arm compute platform and a defining moment for our company. With the expansion into delivering production silicon with our Arm AGI CPU, we are giving partners more choices all built on Arm’s foundation of high-performance, power-efficient computing, to support agentic AI infrastructure at global scale.”

Arm anticipates the AGI CPU will be the foundation for agentic data centres. The expansion into silicon products gives ecosystem partners greater flexibility in how they build and deploy Arm-based infrastructure – whether licensing Arm IP, adopting Arm CSS, or deploying Arm-designed silicon.

The Arm AGI CPU delivers:

- Performance: up to 136 Arm Neoverse V3 cores per CPU, delivering leading performance per core, SoC, blade and rack, with 6GB/s memory bandwidth per core at sub-100ns latency

- Scale: 300W TDP with a dedicated core per program thread enables deterministic performance under sustained load, eliminating throttling and idle threads

- Efficiency: supports high-density 1U server chassis for air-cooled deployments with up to 8,160 cores per rack, and liquid-cooled systems delivering 45,000+ cores per rack
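
As a rough sanity check on how the figures in the list above relate to one another, the arithmetic can be sketched in a few lines of Python. This is illustrative only: the constants are the numbers quoted in the list, while the derived values (CPUs per rack, aggregate bandwidth per CPU) are back-of-the-envelope results that assume every CPU ships with the maximum 136 cores. They are not figures published by Arm, and the function names are hypothetical.

```python
# Figures quoted in the specification list above.
CORES_PER_CPU = 136                    # "up to 136" cores; the maximum is assumed here
BANDWIDTH_PER_CORE_GBPS = 6            # GB/s of memory bandwidth per core
AIR_COOLED_CORES_PER_RACK = 8_160      # "up to 8,160 cores per rack"
LIQUID_COOLED_CORES_PER_RACK = 45_000  # quoted as "45,000+", so treat as a lower bound


def cpus_per_rack(cores_per_rack: int, cores_per_cpu: int = CORES_PER_CPU) -> float:
    """Implied number of CPUs in a rack, assuming fully populated CPUs."""
    return cores_per_rack / cores_per_cpu


def bandwidth_per_cpu_gbps(
    cores_per_cpu: int = CORES_PER_CPU,
    per_core_gbps: int = BANDWIDTH_PER_CORE_GBPS,
) -> int:
    """Implied aggregate memory bandwidth per CPU in GB/s."""
    return cores_per_cpu * per_core_gbps


if __name__ == "__main__":
    print("Air-cooled CPUs per rack:", cpus_per_rack(AIR_COOLED_CORES_PER_RACK))
    print("Liquid-cooled CPUs per rack (at least):",
          int(cpus_per_rack(LIQUID_COOLED_CORES_PER_RACK)))
    print("Bandwidth per CPU (GB/s):", bandwidth_per_cpu_gbps())
```

Under these assumptions, the air-cooled figure works out to exactly 60 CPUs per rack, and a fully populated CPU would see 816 GB/s of aggregate memory bandwidth. The liquid-cooled figure is a quoted minimum, so the implied CPU count is only a floor.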

These capabilities translate into greater workload density, improved accelerator utilisation and more usable compute within existing power envelopes – critical advantages as AI infrastructure scales. The Arm AGI CPU delivers more than 2x performance per rack versus x86 CPUs, enabling up to $10 billion in CAPEX savings per GW of AI data centre capacity.

Meta, the first customer, has stepped up as lead customer and co-developer, using the Arm AGI CPU to optimise infrastructure for its family of apps. The CPU will work alongside Meta’s own custom silicon, the Meta Training and Inference Accelerator (MTIA), enabling more efficient orchestration in large-scale AI systems.

Arm and Meta are committed to collaborating across multiple generations of the Arm AGI CPU roadmap.

“Delivering AI experiences at global scale demands a robust and adaptable portfolio of custom silicon solutions, purpose-built to accelerate AI workloads and optimise performance across Meta’s platforms,” said Santosh Janardhan, Head of Infrastructure, Meta. “We worked alongside Arm to develop the Arm AGI CPU to deploy an efficient compute platform that significantly improves our data centre performance density and supports a multi-generation roadmap for our evolving AI systems.”

Alongside Meta, Arm has confirmed additional commercial momentum with partners including Cerebras, Cloudflare, F5, OpenAI, Positron, Rebellions, SAP, and SK Telecom. These customers will deploy the Arm AGI CPU for key agentic CPU use-cases including accelerator management, control plane processing, and Cloud and enterprise-based API, task, and application hosting.

To accelerate this ramp, Arm is partnering with lead OEMs and ODMs including ASRock Rack, Lenovo, Quanta Computer, and Supermicro, with early systems available now and broader availability expected in the second half of the year.

More than 50 companies across hyperscale, Cloud, silicon, memory, networking, software, system design, and manufacturing are supporting the expansion of the Arm compute platform into silicon. That momentum includes industry leaders such as AWS, Broadcom, Google, Marvell, Micron, Microsoft, NVIDIA, Samsung, SK hynix and TSMC, alongside many others.