Growth in the largest frontier AI models has slowed sharply, while “small” and midsized models are expanding in both capability and adoption, according to new research from Omdia.

Omdia’s latest analysis, ‘AI Model Trends Spring 2026: State of the Art AI Models and their Compute Demands’, shows that parameter counts in frontier models have grown by only around 5% annually since 2021, compared with an expansion of more than a factor of 100 between 2019 and 2021. At the same time, the definition of a “small” model is shifting rapidly: 7B-14B parameter models are increasingly replacing 100M-class models, while a fast-emerging category of midsized open-source models is gaining momentum in development, sentiment, and adoption.
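To put the two growth figures above on the same footing, the short Python sketch below converts each into a compounded rate. It is an illustration only, not part of Omdia’s analysis. It assumes the 2019 to 2021 expansion was exactly 100x (the report says “more than”) and that “since 2021” covers five years, to 2026. The function names are hypothetical.

```python
def annualized_rate(total_factor: float, years: float) -> float:
    """Constant yearly growth rate that compounds to total_factor over the given years."""
    return total_factor ** (1.0 / years) - 1.0


def compounded_factor(annual_rate: float, years: float) -> float:
    """Total growth factor after compounding annual_rate for the given years."""
    return (1.0 + annual_rate) ** years


# Assumption: exactly 100x over the two years 2019-2021 (the source gives this as a lower bound).
early_rate = annualized_rate(100.0, 2)

# Assumption: 5% per year held constant over the five years 2021-2026.
recent_factor = compounded_factor(0.05, 5)

print(f"2019-2021: about {early_rate:.0%} growth per year")
print(f"2021-2026: about {recent_factor:.2f}x growth in total")
```

Under these assumptions, the earlier period works out to roughly 900% growth per year, while five years at 5% compounds to only about 1.28x overall.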

“In previous years, sustained slowdowns in AI model growth were typically associated with AI winters such as the 1980s, when the field faced systemic challenges. That is clearly not the case today, so something else is driving this shift,” said Alexander Harrowell, Senior Principal Analyst for Advanced Computing at Omdia. “We believe much of this is linked to the rise of agents. Modern AI systems are increasingly deriving performance from tool use, effectively trading relatively inexpensive CPU compute for more costly GPU resources. As a result, the CPU:GPU ratio is likely to move closer to 1:1.”

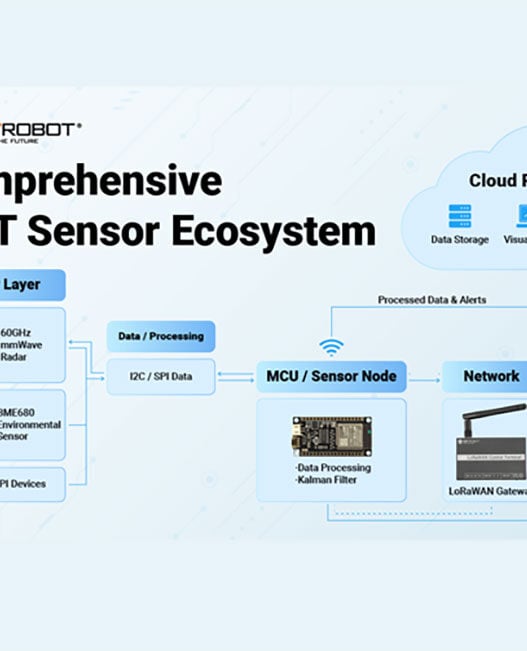

Agents are also driving demand for increasingly long context windows. Because all inputs, interactions, and tool communications pass through the context window, extended context capacity is becoming critical. Managing context offload is therefore increasingly important, and a new cache hierarchy spanning memory and fast storage is emerging to support these workloads. However, splitting work across AI models on GPUs, agents on CPUs, and context offload is expected to place growing pressure on data centre networks.

Midsized models are gaining traction due to their role as agent coordinators in systems such as OpenClaw, as well as their increasing multimodal capabilities.

“There is also growing interest in mid-range GPUs such as NVIDIA’s B40, both for these models and for the decode side of disaggregated inference architectures,” said Harrowell. “However, the key competitive challenge for any new AI chip remains last year’s flagship GPU. Older GPUs are retaining value and remaining in service, as they continue to offer a cost-effective option for small and midsized model inference and disaggregation.”