The data centre industry is undergoing a period of profound change. Driven by the insatiable demands of artificial intelligence, operators, chip designers, and power engineers are grappling with challenges that are rewriting the rules of infrastructure planning.

In a recent Electronic Specifier webinar, two experts at the sharp end of this transformation sat down to discuss the technologies, trade-offs, and tensions shaping the next generation of data centres.

Evolution or revolution?

The conversation opened with a simple question: are we witnessing an evolution of the data centre industry, or something more fundamental? The answers revealed just how differently the challenge looks depending on where you sit in the stack.

Bob Downing, VP of Sales – Energy Solutions at AVK, whose career began as a UPS engineer and now spans the full power delivery chain, sees continuity beneath the disruption. “If you look back at the start of my career, you would have a room 10 by 5 metres, and you would have a whole power architecture just to support that room,” he said. “Now we’re looking at vast data halls, and the power train can be as big as the data hall to deliver the power required for the system.”

Satadal Bhattacharjee, Global Head of Cloud and AI Infrastructure Silicon at Arm, who has built processors at Broadcom, Qualcomm, AWS, and now Arm, took a more structural view. In his assessment, AI hasn’t simply turned up the dial on existing trends – it has changed the fundamental unit of analysis. “AI is compressing what used to be separate conversations around compute, storage, cooling, and energy into a system-level problem,” he said. “The industry is moving from optimising individual server components to optimising rack and campus-level efficiency.”

The numbers tell the story plainly. Rack power densities that once topped out at 30kW are now pushing past 200kW. What was once the preserve of high-performance computing – tightly integrated compute, networking and storage, all engineered as a system – is now the standard expectation for AI workloads.

Power management: a new metric for a new era

For decades, Power Usage Effectiveness (PUE) has been the headline benchmark for data centre energy efficiency. But as workload profiles grow more complex and dynamic, its limitations are becoming apparent.

Downing described the difficulty of applying a traditionally static metric to systems characterised by volatile, bursty demand. “It’s very difficult now with cyclic loading and burst loads to identify PUE and how the system reacts to sudden demands,” he said. “Until you get some real fundamental information from case studies, it’s hard to calculate PUE effectively across these types of systems.”
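To make Downing's point concrete, here is a minimal sketch of how PUE (total facility energy divided by IT equipment energy) behaves under bursty load. The `Sample` type and all power figures are hypothetical and were not given in the webinar; the only thing taken as given is the standard PUE ratio.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    """One equally spaced power reading (hypothetical illustrative values)."""
    it_kw: float        # power drawn by IT equipment
    overhead_kw: float  # cooling, conversion losses and other facility load


def instantaneous_pue(s: Sample) -> float:
    """PUE computed from a single reading."""
    return (s.it_kw + s.overhead_kw) / s.it_kw


def window_pue(samples: list[Sample]) -> float:
    """Energy-weighted PUE over a window of equally spaced readings."""
    total = sum(s.it_kw + s.overhead_kw for s in samples)
    it = sum(s.it_kw for s in samples)
    return total / it


# Assumed load pattern: the hall alternates between near-idle and burst.
samples = [
    Sample(it_kw=200, overhead_kw=120),
    Sample(it_kw=1000, overhead_kw=250),
    Sample(it_kw=200, overhead_kw=120),
    Sample(it_kw=1000, overhead_kw=250),
]

per_sample = [round(instantaneous_pue(s), 2) for s in samples]
overall = round(window_pue(samples), 3)
print(per_sample, overall)
```

With these assumed figures the reading-by-reading PUE swings between 1.25 and 1.6, while the energy-weighted figure for the window sits at roughly 1.31. Which number is reported depends on the measurement window, which is one reason a single static PUE is hard to pin down for cyclic loads.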

Bhattacharjee agreed that the industry’s focus is shifting toward metrics that capture productive output rather than just minimising loss. “Data centre operators are gravitating towards compute which is more energy efficient, so that they can pack more within the fixed rack power,” he explained. The metrics that matter now, in his view, are performance per watt, workload throughput per rack, and – increasingly – what he termed “tokenomics efficiency”: how much useful AI output can be extracted from a given unit of energy.

“Power is premium across the world,” he added simply.
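The metrics Bhattacharjee listed can be written down as simple ratios. The sketch below is illustrative only: the two servers, their throughput and power figures, and the 30kW rack budget are assumptions chosen for the arithmetic, and "tokens per kWh" is just one plausible way to express the energy side of “tokenomics efficiency”.

```python
def perf_per_watt(throughput: float, power_w: float) -> float:
    """Useful work per second delivered for each watt drawn."""
    return throughput / power_w


def tokens_per_kwh(tokens_per_second: float, power_w: float) -> float:
    """Tokens generated per kilowatt-hour (1 kWh = 3.6e6 joules)."""
    return tokens_per_second / power_w * 3.6e6


def rack_throughput(rack_budget_w: int, server_power_w: int,
                    tokens_per_second: float) -> float:
    """Throughput of a rack filled with identical servers up to its power budget."""
    servers = rack_budget_w // server_power_w
    return servers * tokens_per_second


RACK_BUDGET_W = 30_000  # assumed fixed rack power

# Hypothetical servers: A is faster per box, B is more frugal.
servers = {
    "A": {"tokens_per_second": 2000.0, "power_w": 1000},
    "B": {"tokens_per_second": 1800.0, "power_w": 750},
}

for name, s in servers.items():
    print(
        name,
        round(perf_per_watt(s["tokens_per_second"], s["power_w"]), 2),
        round(tokens_per_kwh(s["tokens_per_second"], s["power_w"])),
        rack_throughput(RACK_BUDGET_W, s["power_w"], s["tokens_per_second"]),
    )
```

Under these assumptions, server B is slower per box but delivers more tokens per rack, because more units fit inside the same power envelope. That is the trade-off behind packing “more within the fixed rack power”.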

If smarter power management is the goal, how close is the industry to real-time, AI-driven orchestration? Here, both speakers struck a note of caution.

From the power infrastructure side, Downing pointed to the fundamental constraints of electrical engineering. “We’re quite a way off,” he admitted. “We have to design the system to deal with the highest expectation, and that means putting in components – copper, iron, batteries – that can deal with the highest transient. We don’t truly understand the beast we’re dealing with at the moment.”
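A rough sketch of why designing for the highest transient is expensive: the plant is sized to the peak, but on average it runs well below that. The load trace and the 25% design margin below are hypothetical values, not figures from AVK.

```python
def sizing_summary(load_kw: list[float], margin: float = 0.25) -> dict[str, float]:
    """Summarise a load trace for peak-based sizing.

    `margin` is an assumed design headroom added on top of the observed peak.
    """
    peak = max(load_kw)
    mean = sum(load_kw) / len(load_kw)
    design = peak * (1 + margin)
    return {
        "peak_kw": peak,
        "mean_kw": mean,
        "peak_to_mean": peak / mean,
        "design_kw": design,
        "mean_utilisation": mean / design,
    }


# Hypothetical bursty trace: a 400 kW baseline with 1,200 kW bursts.
trace = [400, 400, 1200, 400, 1200, 400, 400, 1200]
summary = sizing_summary(trace)
for key, value in summary.items():
    print(f"{key}: {value:.2f}")
```

For this assumed trace the plant would be sized at 1,500 kW while the mean draw is 700 kW, so less than half of the installed copper, iron and battery capacity is used on average. The less well characterised the bursts, the larger the margin a designer is likely to add.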

Bhattacharjee acknowledged the problem but offered a more granular picture of the progress being made at the chip and cluster level. Intelligent power management, he argued, has moved decisively beyond the individual rack. “It has to be meaningful across the cluster. The marginal savings in isolation don’t matter that much.” He pointed to specific techniques already being deployed – such as dynamically powering down GPUs when CPUs are doing orchestration work, and vice versa – as early demonstrations of what system-wide optimisation could look like at scale.
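The technique Bhattacharjee mentioned, dropping whichever processor is not doing useful work into a low-power state, can be illustrated with a toy energy model. Everything here is an assumption for illustration: the phase names, durations and wattages are hypothetical, and the model ignores wake-up latency and transition energy, which a real scheduler would have to account for.

```python
from dataclasses import dataclass


@dataclass
class Phase:
    name: str
    seconds: float
    cpu_active: bool
    gpu_active: bool


# Hypothetical per-device power levels in watts.
CPU_ACTIVE_W, CPU_IDLE_W, CPU_LOW_W = 300, 100, 30
GPU_ACTIVE_W, GPU_IDLE_W, GPU_LOW_W = 700, 150, 50


def device_power(active: bool, gate_idle: bool,
                 active_w: int, idle_w: int, low_w: int) -> int:
    """Power drawn by one device in a phase, with or without power gating."""
    if active:
        return active_w
    return low_w if gate_idle else idle_w


def energy_joules(phases: list[Phase], gate_idle: bool) -> float:
    """Total energy for one CPU plus one GPU across all phases."""
    total = 0.0
    for p in phases:
        cpu_w = device_power(p.cpu_active, gate_idle,
                             CPU_ACTIVE_W, CPU_IDLE_W, CPU_LOW_W)
        gpu_w = device_power(p.gpu_active, gate_idle,
                             GPU_ACTIVE_W, GPU_IDLE_W, GPU_LOW_W)
        total += (cpu_w + gpu_w) * p.seconds
    return total


# Assumed request: CPU orchestrates, GPU generates, CPU handles a tool call.
phases = [
    Phase("orchestrate", 2, cpu_active=True, gpu_active=False),
    Phase("generate", 5, cpu_active=False, gpu_active=True),
    Phase("tool_call", 3, cpu_active=True, gpu_active=False),
]

baseline = energy_joules(phases, gate_idle=False)
gated = energy_joules(phases, gate_idle=True)
print(baseline, gated, round(1 - gated / baseline, 3))
```

With these made-up figures, gating saves a little under 14% of the energy for a single CPU and GPU pair. The saving per device is modest, which fits Bhattacharjee's point that such measures only become meaningful when applied across a whole cluster.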

The shift from training to inference – and the rise of Agentic AI

One of the most significant transitions now underway is the shift in the dominant AI workload type: from training to inference, and increasingly toward Agentic AI.

Bhattacharjee described the training era as one characterised by raw consumption. “The initial wave was basically to take as much data as possible and use as much compute as possible,” he said. But with foundation models now mature enough for real-world deployment, the economics are changing. “You train once and you infer all the time, and you cannot consume similar power and cost dynamics with inference as you had on training.”

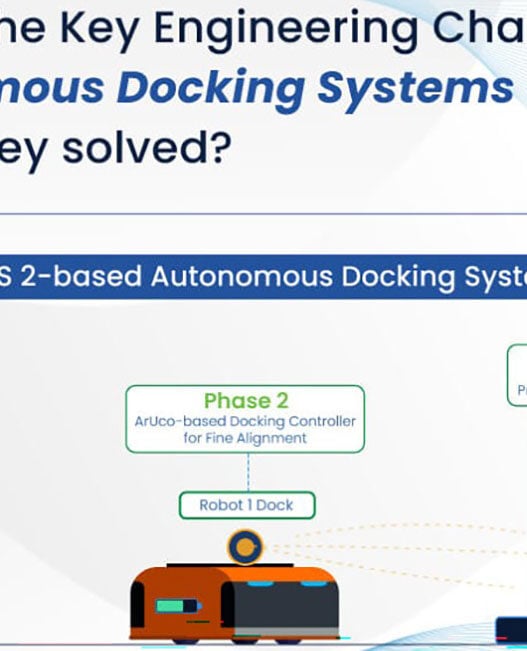

The emergence of Agentic AI – systems that don’t just answer questions but take actions on behalf of users – adds another layer of complexity. The infrastructure implications are significant. Agentic workloads place far greater demands on CPUs for orchestration, tool use, and continuous retrieval, meaning that CPU clusters are now being deployed alongside accelerator racks in paired “pods.” Far from being sidelined by the GPU era, general-purpose compute is back at the centre of data centre planning.

“A lot of people thought CPUs were not going to play a critical role in AI,” Bhattacharjee noted. “But with inference, and especially Agentic AI, CPUs are playing a very, very critical role.”

Grid constraints and the power generation challenge

However efficient the chips, the data centre industry still needs power at scale – and getting it is proving to be one of the most significant bottlenecks to growth, particularly in Europe.

Downing described AVK’s work delivering island microgrids that operate without any connection to the public network, a response to the reality that grid infrastructure in many parts of Europe simply cannot meet current demand. “Operators are requesting connections all across Europe, and Europe just doesn’t have the power – or might not have the transmission and distribution network,” he said.

The challenge is compounded by regulatory complexity. Environmental obligations, net zero commitments, and fossil fuel restrictions all constrain the options for rapid power generation deployment. The more predictable and characterised the compute load – something that better chip efficiency and CPU-centric inference workloads could help deliver – the better operators can design appropriate power infrastructure.

AVK’s approach is technology-agnostic, drawing on turbines, fuel cells, engines, and mixed equipment sets depending on the requirements. But Downing was clear about what ultimately matters. “The emission efficiency for power generation is one of the key gatekeepers for building a microgrid. It has to be as efficient as humanly possible.”

Arm’s AGI CPU: more choice for data centre operators

On the silicon side, one of the most significant recent developments has been the launch of Arm’s AGI CPU – the company’s first move into designing its own silicon.

Bhattacharjee explained the significance in straightforward terms. Previously, Arm’s highest-performance data centre CPUs were only available through the hyperscalers that built them. European cloud providers and regional operators wanting equivalent performance had nowhere to turn. “With Arm now launching the AGI CPU, it’s possible for the rest of the industry to adopt and have more choices.”

The design philosophy centres on a metric that resonates throughout data centre planning: performance per watt. “Data centre operators are not looking for a different logo on the CPU. What they are looking for is what is the useful compute they can get within fixed power, cooling, and space limits,” Bhattacharjee said. Arm’s analysis suggests the AGI CPU delivers more than double the performance per rack compared to existing alternatives, consuming around 150 to 200W less than comparable x86 processors for equivalent workloads.

Chiplet architectures and the pace of innovation

The discussion of processor design led naturally to chiplet architectures – modular chip designs that are now standard across modern high-performance processors, including the AGI CPU itself.

Bhattacharjee outlined the advantages: faster time to market, better manufacturing yield, and the flexibility to mix components optimised for different workloads. “With the chiplet architecture, you can design chiplets for a different workload mix,” he said. “The question now shifts to: what is the best composition that will give you the best result for the workload characteristics you’re addressing?”

The accelerating pace of iteration is itself a challenge. What used to be a three-year CPU development cycle has compressed to annual refreshes – a pace driven by the rapid evolution of AI workloads. This raises important questions about standardisation and interoperability. “You can’t have five different companies develop five different chiplets and when you try to put them together, it’s mayhem,” Bhattacharjee observed. “The standardisation and software compatibility matter a lot.”

Cooling: navigating the transition

As rack power densities climb past 100kW, cooling has become one of the most consequential technical and operational challenges in the industry. Air cooling, which has served the industry adequately for decades, is approaching its limits at the densities that AI workloads demand.

Bhattacharjee described the reality facing many European operators: brownfield data centres built around air-cooled racks of 7 to 30kW, which they would like to extend to AI inference workloads without the capital expenditure of a full liquid cooling retrofit. Arm is working with partners to develop inference solutions capable of running within those existing constraints.

For new greenfield builds, the calculus is different – and more uncertain. Downing described the challenges of testing liquid-cooled systems under active load, and the risk of designing power infrastructure around load profiles that are still poorly understood. “UPS systems with lithium-ion batteries could be looking at significant degradation every three to four years because of the way the load cycles,” he warned. “The electrical and mechanical world is much slower moving than the processing world.”

The regulatory landscape and sovereign AI

The webinar’s closing exchanges turned to the broader political and regulatory context – and the growing interest among governments in data centres as critical national infrastructure.

Both speakers agreed that the desire for digital sovereignty is real and accelerating, particularly in Europe. But Downing was candid about the mismatch between the pace of technology and the pace of policy. “We’re expecting governments and regulators to move really fast,” he said. “They’re not used to doing that. They need policies developed for a decade, not just a parliamentary term, otherwise it’s going to stall.”

Bhattacharjee noted that the trend toward sovereign data infrastructure is increasingly a question of how, not whether. “This is no longer a question of whether to go sovereign or not. It’s all about how fast it can be built out.” Countries with natural advantages – particularly the Nordic region, with its cool climate and access to green energy – are emerging as increasingly attractive locations for new builds.
