A fundamental constraint on achieving full autonomy, Level 5 (L5), is the ability to process enough data quickly, both when training artificial intelligence/machine learning (AI/ML) systems and during in-vehicle inference.

In training, scaling data centre-based systems to provide the necessary bandwidth and capacity comes with its own set of challenges, given the exponential growth in the size and complexity of training models. By contrast, developing compute-intensive, high-bandwidth systems for in-vehicle inference is constrained by cost, space and long-term reliability requirements that the data centre use case does not share. So while both training and inference are AI/ML applications, each brings its own set of criteria for the choice of memory.

For inference in a vehicle, whether employing mainstream CPUs, GPUs or any of the growing number of AI/ML-specific platforms, delivering the real-time inference needed for full autonomy will require significant optimisation of the memory-processor interface to maintain the necessary data throughput.

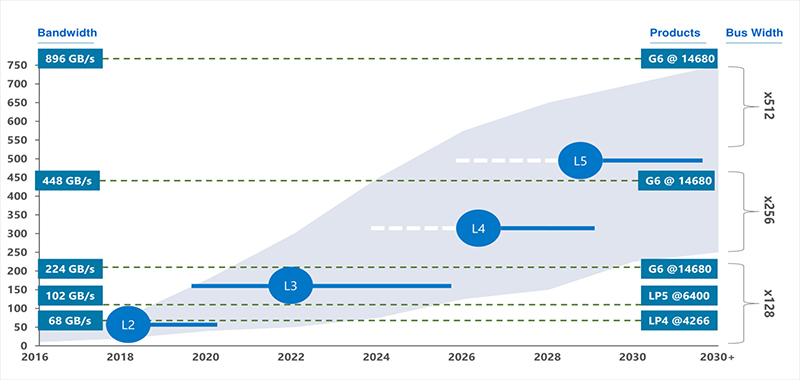

Figure 1 shows how predicted bandwidth demands rise significantly as ADAS capability increases. The size and complexity of inference models grow significantly with each ADAS level, increasing the bandwidth required. A current L2 ADAS system processes around 60 gigabytes per second (GB/s). Moving to L3 ADAS, with inputs from a sophisticated sensor suite, the system realistically needs memory bandwidth of at least 200GB/s. Below we explore memory options for meeting the requirements of L3 ADAS.

Figure 1. Memory Bandwidth Requirements By ADAS Level

Memory choices

There is no more demanding ‘IoT’ AI-inference application than ADAS. Qualification standards in a system responsible for protecting life and property are necessarily high. The net result is that road-tested memory architectures have seen implementation in early ADAS systems: DDR4, commonly used in laptops and desktop systems; LPDDR, with billions of mobile phone deployments; and GDDR6, deployed in hundreds of millions of graphics cards and game consoles. Each has its advantages and presents design trade-offs.

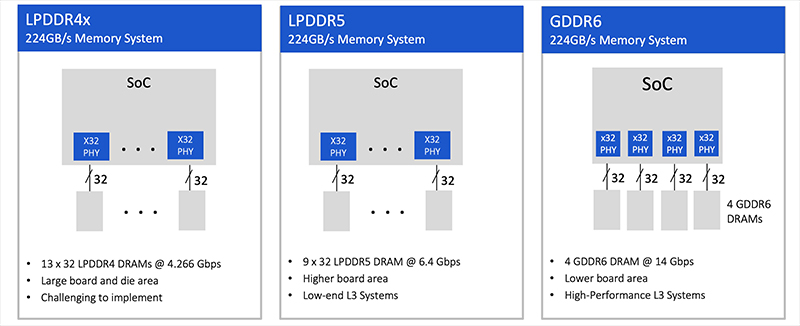

Memory implementation at L3 ADAS, and beyond

Figure 2 provides illustrative examples of memory systems capable of delivering 224GB/s, which meets the minimum bandwidth threshold of 200GB/s for a realistic L3 implementation. With LPDDR4, 13 DRAM devices are required, demanding a large board and die area, which could be challenging to implement. Selecting LPDDR5 simplifies the system, requiring nine DRAM devices to deliver 224GB/s, and is possibly a pragmatic choice for low-end L3 ADAS. For this use case, DDR4 is not practical since it would require 35 devices, at 6.4GB/s per device, to achieve the required bandwidth.
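The device counts above follow from dividing the target bandwidth by the peak bandwidth of one device and rounding up. The short Python sketch below reproduces that arithmetic for two of the cases. The DDR4 figure of 6.4GB/s per device comes from the text; the LPDDR5 figure assumes a 32-bit-wide device running at 6.4Gbps per pin (25.6GB/s), which is an assumption made for illustration. The function names are hypothetical, and LPDDR4 is left out because its per-device bandwidth is not given here.

```python
import math


def device_bandwidth_gbs(pin_rate_gbps, width_bits):
    """Peak bandwidth of one DRAM device in GB/s: pin rate x bus width / 8 bits per byte."""
    return pin_rate_gbps * width_bits / 8


def devices_needed(target_gbs, per_device_gbs):
    """Smallest whole number of devices whose combined peak bandwidth meets the target."""
    # The small tolerance keeps floating-point noise from rounding an exact ratio upward.
    return math.ceil(target_gbs / per_device_gbs - 1e-9)


TARGET_GBS = 224  # bandwidth of the illustrative memory systems in Figure 2

ddr4_gbs = 6.4                             # GB/s per device, as stated in the text
lpddr5_gbs = device_bandwidth_gbs(6.4, 32)  # assumption: x32 device at 6.4Gbps per pin

print("DDR4 devices:", devices_needed(TARGET_GBS, ddr4_gbs))
print("LPDDR5 devices:", devices_needed(TARGET_GBS, lpddr5_gbs))
```

Under these assumptions the sketch gives 35 devices for DDR4 and nine for LPDDR5, matching the counts quoted above. These are peak figures; sustained bandwidth in a real system would be lower.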

Looking down the road to L4 ADAS, bandwidth requirements rise to 300GB/s. With an LPDDR5 interface running at 6.4Gbps, 12 DRAM devices would be required to hit that mark. At this point the ‘beachfront’ of the SoC would be dominated by memory interfaces, which would be impractical and would complicate the logic layout of the SoC.

Figure 2. L3 ADAS Memory System Implementation Examples

GDDR6 is far more efficient than LPDDR or DDR4 in terms of design and space utilisation. It requires only four DRAM devices to deliver 224GB/s, less than half the package count of the alternatives. As bandwidth requirements increase to serve the inference demands at higher ADAS levels, GDDR6 becomes the only viable option of the three. GDDR6 running at 16Gbps can provide over 300GB/s of bandwidth with only five DRAM devices, and can reach the more than 500GB/s needed for L5 ADAS with eight DRAM devices.
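The GDDR6 figures can be checked the same way. The sketch below is a rough illustration that assumes each GDDR6 device presents a 32-bit data interface, which is not stated in the text; at 16Gbps per pin that gives 64GB/s per device. The function name and constants are hypothetical.

```python
def total_bandwidth_gbs(pin_rate_gbps, width_bits, devices):
    """Combined peak bandwidth in GB/s of several identical DRAM devices."""
    return pin_rate_gbps * width_bits / 8 * devices


GDDR6_PIN_RATE_GBPS = 16  # data rate quoted in the text
ASSUMED_WIDTH_BITS = 32   # assumption: 32 data bits per GDDR6 device

# (device count, bandwidth the text says that configuration must exceed)
for devices, target_gbs in [(5, 300), (8, 500)]:
    bandwidth = total_bandwidth_gbs(GDDR6_PIN_RATE_GBPS, ASSUMED_WIDTH_BITS, devices)
    print(f"{devices} devices: {bandwidth:.0f}GB/s, exceeds {target_gbs}GB/s: {bandwidth > target_gbs}")
```

With this assumption, five devices give 320GB/s and eight give 512GB/s, consistent with the figures above. The four-device 224GB/s configuration works out to 56GB/s per device, which under the same width assumption would correspond to 14Gbps per pin.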

The performance characteristics of GDDR6 memory, built on tried-and-tested manufacturing processes, make it an ideal memory solution for AI inference. Its price-performance characteristics make it suitable for volume deployment.

Designing with GDDR6

The principal challenge for GDDR6 implementations is rooted in one of its strongest features: its speed. Maintaining signal integrity (SI) at 16Gbps with a 1.35V operating voltage requires significant expertise at the boundary of the analogue and digital worlds. Interdependencies between the behaviour of the interface, package and board mean these components must be co-designed in order to maintain the SI of the system. Designing these elements independently is a path to implementation problems and redesign.

Fortunately, there are silicon-proven solutions available for GDDR6 capable of supporting the high bandwidth, low-latency requirements of ADAS inferencing. Acquiring a co-verified PHY and digital controller as a complete GDDR6 memory subsystem, together with support to optimise full-system signal and power integrity (SI/PI) and chip layout, accelerates and de-risks design.

Conclusion

GDDR6 is a strong fit for ADAS applications going forward. It offers a strong combination of bandwidth, capacity, power efficiency, reliability and price-performance.

With the latest verified GDDR6 interface designs demonstrating performance at 18Gbps, which offers design headroom, and with full support available through characterisation and debug, ADAS designers can confidently select GDDR6 for their next-generation designs.