In the animal kingdom, an attuned sense of touch is an incredible feat of biological engineering. Cats’ paws can detect vibrations, temperature, and texture, while elephant trunks are sensitive enough to sense pressure differences at a depth of just 0.25mm. These natural sensors have evolved over millennia to perform a remarkable array of tasks with both precision and dexterity.

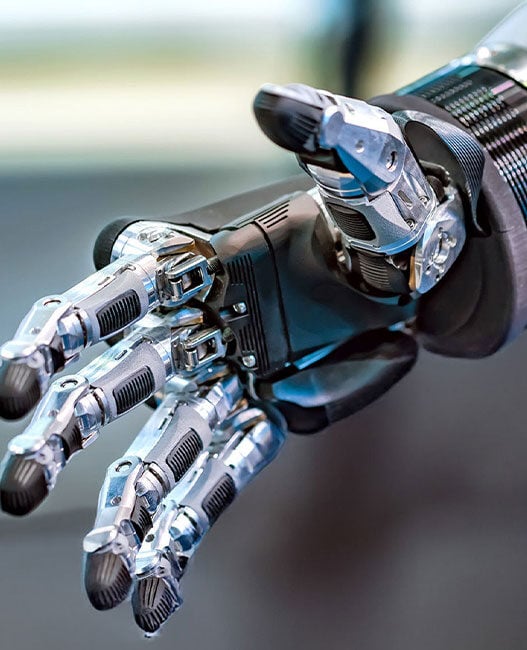

Replicating even a fraction of that capability in tactile robots has proven enormously difficult. Tactile robots typically use dense arrays of sensors, often in robotic hands, to detect pressure and texture. But where nature has produced a variety of shapes and forms for these sensory receptors, most robotic sensors today are still designed as “flat blocks or hemispheres”, constrained by the human model of a fingertip. “While a great technological step, you can’t pick up a piece of paper with just the tip of your finger,” says Dr Shan Luo, Reader in Robotics and AI at King’s College London.

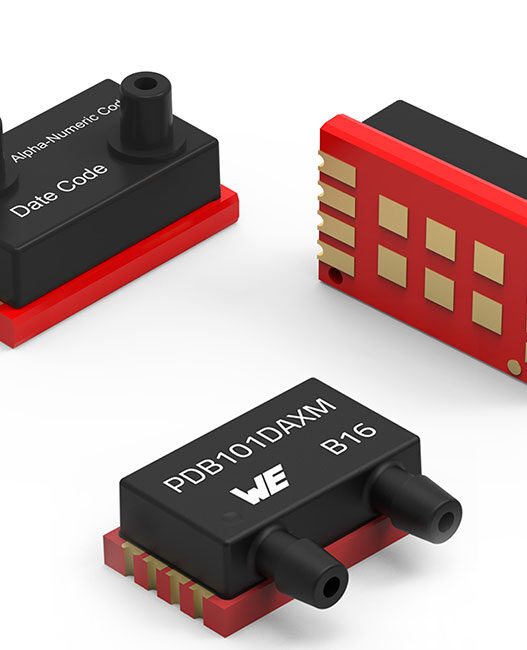

Beyond these design constraints, the process of actually building and training tactile robots is inefficient. Researchers have had to prototype, test, and train each device physically. This process relies on trial and error, and it can take up to 18 months to produce a single working prototype, with no guarantee of success. High-accuracy force and torque sensors can cost upwards of £10,000, and training a full prototype at scale requires many sensors of different kinds, so costs climb steeply as each new sensor is added.

Now, researchers at King’s College London believe they have a solution. By combining a new physics-based simulation platform called SimTac with an AI model called GenForce, the team has developed a method that could reduce design and training time from 18 months to just two weeks – while dramatically cutting costs in the process.

Taking nature into the digital world

SimTac, presented in a paper published in ‘Cyborg & Bionic Systems’, is described as a simulator for biomimetic tactile sensors – sensors designed to mimic how biological touch organs deform, sense contact, and respond to pressure. Rather than building and testing sensors in the physical world, researchers can now iterate on designs in a virtual environment, testing complex, nature-inspired shapes in a fraction of the time and at a fraction of the cost.

“By embracing all of these different kinds of sensors in a virtual environment like SimTac, we can iterate on the design space to create lots of different, more helpful kinds of sensors,” says Dr Luo. The platform supports a wide range of biomorphic shapes as well as diverse materials and optical configurations.

Xuyang Zhang, PhD student at King’s and first author of the SimTac paper, explained the significance of moving beyond the flat-surface paradigm: “Imagine trying to pick up a piece of paper on a table using only your finger pad – it’s almost impossible. Our work has learnt from the best of nature to create an abundance of prototypes and models capable of different tasks. It’s a powerful expansion of the design space and can be used to create physical tactile robots in a fraction of the time.”

The high costs associated with physical sensor development have long discouraged teams from experimenting beyond familiar designs. A verifiable digital twin environment like SimTac changes that. “A verifiable twinned training environment and a cost reduction in training models mean our approach can help break these constraints and encourage researchers to make better tactile robots,” says Dr Luo.

Teaching robots to remember touch

While SimTac addresses the design and simulation challenge, a second bottleneck is the cost and complexity of training a complete tactile robot to interpret and respond to what it feels. This is where GenForce comes in.

The human sense of touch is not just about sensation – it is also about memory and transfer. Whether a person grasps a strawberry or a baseball bat, the brain draws on accumulated tactile experience to apply exactly the right amount of force, explains Dr Luo. Robots inherently lack this capacity, and training them to acquire it has historically required extensive physical testing across every sensor type in a device.

GenForce is an AI model designed to replicate this process of tactile memory. By unifying different types of tactile sensors – whether they use cameras, magnetic fields, or other mechanisms – into a single framework, it allows an entire robotic hand to be calibrated by training just one sensor. “In a sense, this means you can train an entire hand how to sense and move by using just one finger,” says Zhuo Chen, PhD student at King’s and first author of GenForce.

Combining multiple sensor types in a single device is a well-established way to achieve higher dexterity, but doing so has always carried a steep training cost. GenForce targets this directly by reducing the number of sensors needed to train a full prototype, which could deliver huge savings for manufacturers and robotics developers working at scale.

A new blueprint for tactile robotics

Together, SimTac and GenForce could change how tactile robots are conceived and built. The traditional pipeline of physical prototyping, trial-and-error testing, expensive sensor arrays, and months of training can now be replaced by a digital-first approach that is faster, cheaper, and open to a far wider range of designs.

“With the help of SimTac, we can rapidly validate sensor design concepts, significantly shortening development cycles,” says Dr Luo. “In the future, robots might truly emulate living organisms, perceiving the world through their own specialised ‘skin’.”

By reducing the time and cost of bringing new designs to life, the King’s research could open the field of tactile robotics to a much wider group of innovators.

“The most exciting prospect of GenForce is its ability to treat diverse tactile sensors as a single, unified system – just like human skin,” adds Dr Luo. “As we look toward a future where robots are covered entirely in tactile skin, individually calibrating thousands of sensors will become practically impossible. By replicating this holistic cognitive process, GenForce draws the ultimate blueprint for the future of whole-body tactile robots.”