Data centres have gone from “Internet hotels” to AI factories in a little over two decades – but the inflection point we’re entering now is unlike anything the industry has seen before. Speaking at Tech Show London, Lee Prescott, CTO at Red Engineering Design, laid out a blunt challenge to the industry: the way we design, power, and cool data centres must fundamentally change to keep up with GPU-driven AI.

For engineers, his message is clear: think less like traditional facilities designers and more like factory systems engineers optimising a production line.

“If it’s an AI facility, it’s all about tokens, the currency of AI,” Prescott explained. “Power is our input, and tokens are the output. Start thinking like a factory.”

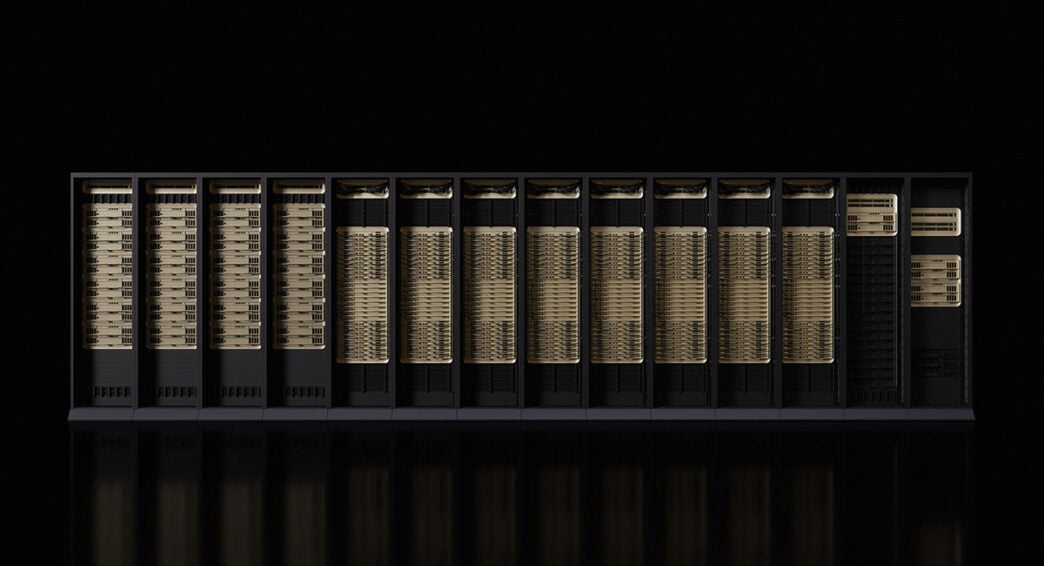

From 10 to 600kW and beyond

Traditional enterprise and early Cloud data centres were already pushing boundaries, but the arrival of large-scale AI has sent rack power density soaring:

- Historically, typical racks sat in the single-digit to low tens of kilowatts

- With large GPU clusters, Prescott notes we quickly moved into 50-100kW per rack territory

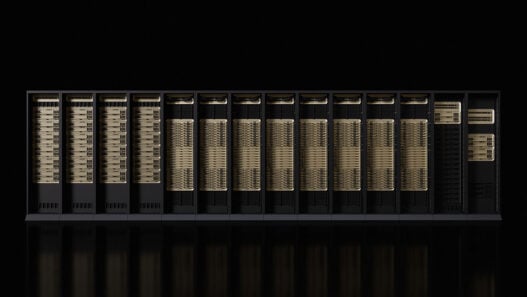

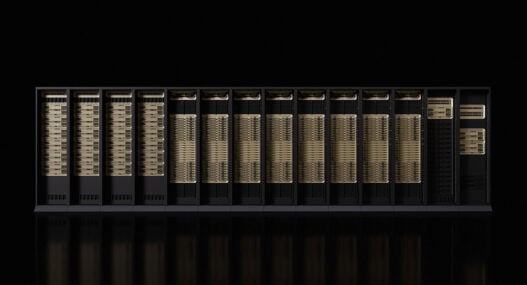

- NVIDIA’s Blackwell generation pushes toward ~130kW per rack

- Rubin, arriving from Q3 this year, is expected in the 220-250kW per rack range

- Rubin Ultra, following on, targets 600kW+ per rack

Those aren’t just bigger numbers – they fundamentally reshape power distribution architectures, cooling strategies, busbar design, protection coordination, and failure modes that engineers must now grapple with.

Prescott underscores the scale from a compute perspective: Blackwell racks deliver on the order of 1.1 exaFLOPS each, while Rubin moves to around 3.6 exaFLOPS per rack. As he put it: “These are huge, huge amounts of power being consumed and heat being rejected.”
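A rough sense of what those densities mean electrically comes from the balanced three-phase relation I = P / (√3 · V · pf). The sketch below is illustrative only: the 415 V line-to-line supply and unity power factor are assumptions, not figures from Prescott’s talk, and the rack powers are the per-rack values quoted above.

```python
import math


def three_phase_current_a(power_kw: float,
                          line_voltage_v: float = 415.0,
                          power_factor: float = 1.0) -> float:
    """Line current (A) of a balanced three-phase load.

    I = P / (sqrt(3) * V_line_to_line * power_factor)
    The 415 V default and unity power factor are illustrative assumptions.
    """
    return power_kw * 1000.0 / (math.sqrt(3) * line_voltage_v * power_factor)


# Per-rack powers quoted in the article (upper ends of the ranges).
RACK_POWER_KW = {
    "Blackwell": 130,
    "Rubin": 250,
    "Rubin Ultra": 600,
}

for name, kw in RACK_POWER_KW.items():
    amps = three_phase_current_a(kw)
    print(f"{name}: {kw} kW -> roughly {amps:.0f} A per rack at 415 V")
```

Current scales linearly with rack power at a fixed voltage, which is one reason conductor sizing and protection coordination become harder as density climbs.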

Cooling: borrowing from F1 to solve a two-temperature problem

AI doesn’t just increase power density; it splits the cooling problem in two. On the one hand, you still have traditional air-cooled IT, which needs relatively cool supply air and chilled water at around 23°C to optimise chiller performance. On the other hand, direct-to-chip liquid cooling for GPUs can accept much warmer water – NVIDIA has indicated Rubin racks will run with incoming water in the 45-53°C range.

This creates a control and systems integration puzzle:

- Air-cooled/Cloud/hybrid loads want lower-temperature chilled water

- AI direct-to-chip liquid loads want higher-temperature water to maximise free-cooling hours and efficiency

You can build two completely separate cooling systems, Prescott notes, but that locks in high capex and rigidity. Instead, Red has been exploring a flexible, manifold-based approach with the ability to segment and recombine cooling loops as needed.

“We must have an optimised system for air cool and an optimised system for liquid cool, flexibility to change the ratio, and adapt to market needs … and be concurrently maintainable without excessive capex.”

Taking inspiration from Formula 1’s adaptive aerodynamics, the idea is to build a cooling backbone that can “reconfigure itself” operationally:

- Shared chiller plant feeding separate manifolds for warm and cold loops

- Automatic isolation valves and actuator-driven control to split or recombine circuits

- Ability to run one side as a hot loop (e.g., 45°C+ for CDUs) in almost full free-cooling mode in many European climates

- Ability to keep a cold loop for air-cooled or legacy loads, still benefiting from partial free cooling but accepting some compressor runtime
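The difference between the two loops can be illustrated with a simple free-cooling check: a loop can run without compressors whenever outdoor air plus the heat-rejection approach temperature is at or below the loop’s supply setpoint. This is a minimal sketch, not Red’s design method; the 8 K approach and the ambient temperature sample are made-up values for illustration, while the 23°C and 45°C setpoints come from the figures above.

```python
def free_cooling_fraction(ambient_c, supply_setpoint_c, approach_k=8.0):
    """Fraction of sampled hours in which ambient air alone can meet the setpoint.

    An hour counts as free cooling when ambient + approach <= supply setpoint.
    The 8 K approach is an assumed value, not a figure from the talk.
    """
    if not ambient_c:
        raise ValueError("ambient_c must contain at least one reading")
    free_hours = sum(1 for t in ambient_c if t + approach_k <= supply_setpoint_c)
    return free_hours / len(ambient_c)


# Hypothetical ambient dry-bulb readings (deg C), standing in for a year of data.
AMBIENT_SAMPLE_C = [-2, 3, 8, 12, 15, 18, 21, 24, 28, 33]

cold_loop = free_cooling_fraction(AMBIENT_SAMPLE_C, supply_setpoint_c=23.0)
hot_loop = free_cooling_fraction(AMBIENT_SAMPLE_C, supply_setpoint_c=45.0)

print(f"Cold loop (23 C supply): {cold_loop:.0%} of sampled hours in free cooling")
print(f"Hot loop (45 C supply): {hot_loop:.0%} of sampled hours in free cooling")
```

With real hourly weather data in place of the sample list, the same comparison shows how much compressor runtime each loop would need at a given site.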

Power: every watt must become tokens

Cooling is only half the challenge. Prescott argues that future AI data centres must be designed with a hard-constraint mindset on power. Grid capacity is tightening and connection lead times are lengthening worldwide; what you can draw from the utility is effectively your maximum revenue envelope.

“The future will be power‑constrained … really, power is revenue. We want to take all of our power from the utility and convert it into revenue, make tokens with it. You don’t waste any watts.”

In practical terms:

- PUE (Power Usage Effectiveness) is no longer just an efficiency metric; it directly maps to tokens per megawatt

- The gap between average PUE and peak‑day PUE often hides “stranded” capacity – infrastructure reserved for the worst‑case hot day that sits under‑utilised most of the year

- By aggressively optimising cooling, power distribution and conversion, that gap can be narrowed, freeing up additional GPU capacity within the same grid allocation
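Because PUE is total facility power divided by IT power, the IT load that fits inside a fixed grid allocation is simply the allocation divided by PUE. The sketch below puts numbers on the stranded-capacity point; the 100 MW connection and the PUE values of 1.35 (peak day) and 1.20 (average) are hypothetical, chosen only to show the arithmetic.

```python
def it_capacity_mw(grid_mw: float, pue: float) -> float:
    """IT (GPU) power that fits in a grid allocation at a given PUE.

    PUE = total facility power / IT power, so IT power = grid / PUE.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return grid_mw / pue


def stranded_mw(grid_mw: float, peak_pue: float, average_pue: float) -> float:
    """Extra IT load an average day could carry if the site were not sized for peak-day PUE."""
    return it_capacity_mw(grid_mw, average_pue) - it_capacity_mw(grid_mw, peak_pue)


# Hypothetical site; none of these numbers come from the talk.
GRID_MW, PEAK_PUE, AVERAGE_PUE = 100.0, 1.35, 1.20

print(f"IT load when sized for peak-day PUE: {it_capacity_mw(GRID_MW, PEAK_PUE):.1f} MW")
print(f"IT load an average day could carry:  {it_capacity_mw(GRID_MW, AVERAGE_PUE):.1f} MW")
print(f"Stranded on an average day:          {stranded_mw(GRID_MW, PEAK_PUE, AVERAGE_PUE):.1f} MW")
```

Under these assumed figures, narrowing peak-day PUE toward the average recovers several megawatts of IT load without any change to the grid connection.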

DC distribution and superconducting cables

Looking beyond near‑term upgrades, Prescott highlighted two technologies that could materially change the power picture.

First, high‑capacity DC distribution within the data centre: “We’re going to be DC; the next one or two generations will be AC. Industry is not quite ready … but working on DC reduces conversions. Double conversions at UPS’ … really would be stepping down the voltage. So, you get a few percent back in doing that.”

Fewer conversions mean:

- Lower losses from AC/DC/AC chains

- Simpler power paths from utility or on‑site generation to rectifiers and ultimately to rack‑level DC buses

- Potential PUE improvements on the order of a couple of percentage points – which translates directly to more GPU capacity
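The effect of removing conversion stages can be sketched by multiplying stage efficiencies along each power path. Every efficiency below is an assumed, round-number placeholder rather than vendor or measured data, and the stage names are illustrative; the point is only that end-to-end efficiency is the product of the stages, so dropping one lifts the total.

```python
import math

# Assumed stage efficiencies (illustrative placeholders, not measured values).
AC_PATH = {
    "MV/LV transformer": 0.99,
    "double-conversion UPS": 0.97,
    "PDU / distribution": 0.99,
    "rack AC/DC power supply": 0.97,
}

DC_PATH = {
    "MV/LV transformer": 0.99,
    "central rectifier": 0.98,
    "rack DC/DC conversion": 0.97,
}


def path_efficiency(stages: dict) -> float:
    """End-to-end efficiency of a power path: the product of its stage efficiencies."""
    return math.prod(stages.values())


ac_eff = path_efficiency(AC_PATH)
dc_eff = path_efficiency(DC_PATH)

print(f"AC path: {ac_eff:.1%} of utility power reaches the chips")
print(f"DC path: {dc_eff:.1%} of utility power reaches the chips")
print(f"Gain from fewer conversions: {(dc_eff - ac_eff) * 100:.1f} percentage points")
```

With these placeholder values the gain is of the same order as the “few percent” Prescott describes; real figures depend on the equipment and its loading.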

Second, high‑temperature superconducting (HTS) cables. Once cooled to around -200°C, these materials exhibit near‑zero electrical resistance: “You’ve got zero loss … ten times smaller in conductor size compared to traditional AC busbar. It means you’ve got much less of a coordination problem as density increases.”

For large AI campuses, HTS could allow:

- Very high current densities in compact corridors

- Relocating power plant (generators, possibly chillers) hundreds of metres or more off‑site, reducing local impedance and thermal interaction with the data hall

- Further squeezing losses out of the end‑to‑end power path
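For context on what a conventional conductor gives up over such distances, resistive loss follows P = I²R with R = ρL/A. The sketch below evaluates that for a copper conductor; the 4,000 A current, 300 m run, and 2,000 mm² cross-section are hypothetical values, and the calculation deliberately leaves out the cryogenic cooling power an HTS cable would need, so it is not a like-for-like comparison.

```python
COPPER_RESISTIVITY_OHM_M = 1.68e-8  # copper at about 20 deg C


def conductor_loss_w(current_a: float,
                     length_m: float,
                     area_mm2: float,
                     resistivity_ohm_m: float = COPPER_RESISTIVITY_OHM_M) -> float:
    """Resistive (I^2 * R) loss in one conductor, with R = rho * L / A."""
    area_m2 = area_mm2 * 1e-6
    resistance_ohm = resistivity_ohm_m * length_m / area_m2
    return current_a ** 2 * resistance_ohm


# Hypothetical run: 4,000 A over 300 m of 2,000 mm^2 copper (not from the talk).
loss_w = conductor_loss_w(current_a=4000, length_m=300, area_mm2=2000)
print(f"Resistive loss per conductor: {loss_w / 1000:.1f} kW")
```

Because the loss grows with the square of the current, doubling rack density on the same conductor quadruples the heat dissipated in it, which is where near-zero-resistance cabling becomes attractive.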

From data centres to AI factories

Prescott’s closing message was a call for the industry to abandon incrementalism: “Move to a factory‑based way of thinking … Always make as many tokens as possible. Don’t leave power stranded. Optimise your infrastructure. Don’t play to the lowest common denominator.”

For engineers, that means:

- Designing power and cooling as a coupled optimisation problem, not separate silos

- Embracing warmer liquid‑cooling regimes and challenging legacy SLAs that block efficiency gains

- Pushing vendors for better CDUs, plate heat exchangers, rectifiers, DC gear, and bus systems that support extreme density

- Treating every watt from the grid as potential AI output – and designing the system so that as little of it as possible is lost along the way

Rubin and its successors are coming fast. The question Prescott leaves hanging is not whether the silicon will be ready, but whether the infrastructure engineered around it will be.