According to industry professionals, several key challenges must be overcome before autonomous vehicles can be widely adopted, and ethical and moral considerations will play a pivotal role in meeting them.

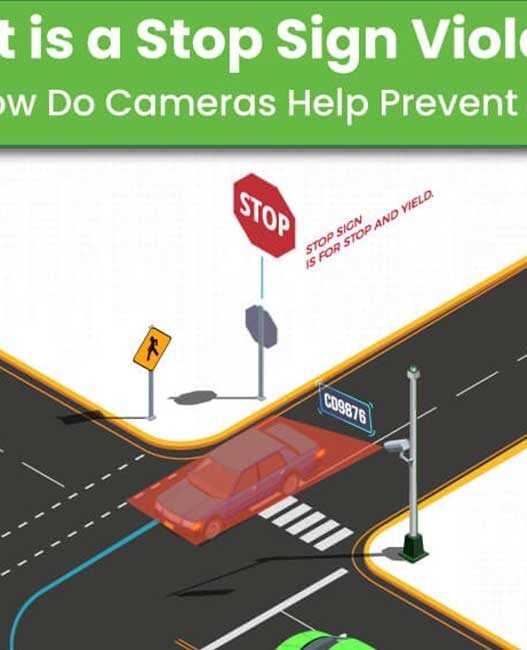

An autonomous vehicle is one that can operate itself without human involvement, through its ability to sense and interpret its surroundings. The Society of Automotive Engineers (SAE) currently defines six levels of driving automation, ranging from Level 0 (fully manual) to Level 5 (fully autonomous). Autonomous vehicles rely primarily on artificial intelligence (AI), light detection and ranging (LIDAR), radio detection and ranging (RADAR), cameras and other sensors to build a 3D map of their environment, which they use to sense and navigate.

Legal framework

A legal framework for autonomous driving is vital, and building one is a huge and challenging task. In 2018, the Centre for Connected and Autonomous Vehicles (CCAV) asked the Law Commission of England and Wales and the Scottish Law Commission to undertake a review to enable the safe and responsible introduction of automated vehicles on British roads and in public places.

On January 26, 2022, the two Commissions published a joint report setting out recommendations for legal reform. These recommendations keep safety at the forefront while retaining the flexibility to accommodate future developments.

Key recommendations include:

- Writing the test for self-driving into law, with a bright line distinguishing it from driver support features, a transparent process for setting a safety standard, and new offences to prevent misleading marketing.

- A two-stage approval and authorisation process building on current international and domestic technical vehicle approval schemes and adding a new second stage to authorise vehicles for use as self-driving on GB roads.

- A new in-use safety assurance scheme to provide regulatory oversight of automated vehicles throughout their lifetimes to ensure they continue to be safe and comply with road rules.

- New legal roles for users, manufacturers and service operators, with removal of criminal responsibility for the driving task from the person in the driving seat while the vehicle is driving itself.

- Holding manufacturers and service operators criminally responsible for misrepresentation or non-disclosure of safety-relevant information.

The UK, Scottish and Welsh governments will decide whether to accept the recommendations and introduce legislation to bring them into effect.

Ethical dilemma

Society tends to demand zero tolerance for errors in autonomous driving. With numerous examples of autonomous vehicles going wrong, many ask: can a driverless car make the right decision in a hazardous situation?

In response, experts point out that a self-driving car does not make its own decision in such a situation; instead, it reflects the software choices its creators equipped it with. It adopts the ethical decisions and values of the people who design it and applies them without any interpretation of its own.

The German Federal Ethics Commission took up the issue of ethics in 2017. Its first report set out initial guidelines and identified a need for action and further development in both technology and society. The report produced 20 ‘ethical rules for automated and connected vehicular traffic’.

One rule states that the primary purpose of automated and connected vehicles is to improve safety for all road users. Accident prevention is therefore central to the further development of automated driving technology, and in hazardous situations, protecting human life takes priority.

The issue has also been taken up at European level: in 2018, ‘AI4People’, an initiative advancing ethical standards in artificial intelligence, was launched at the European Parliament. Its conclusion is clear: protecting human life is paramount. It follows that autonomous vehicles are ethically justifiable only if they lead to fewer injuries and fatalities than human driving.

Between 90 and 95% of road traffic accidents result from human error, so autonomous vehicles have the potential to reduce human error and, in turn, traffic accidents. The WHO also reports that, worldwide, one person dies on the roads every 24 seconds. Based on this data, experts at automotive giant Audi propose that an autonomous future brings a new kind of reliability and road safety.
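To put the WHO rate in perspective, it can be converted into an annual total. This is a rough back-of-the-envelope sketch that assumes a constant rate and a 365-day year:

$$
\frac{365 \times 24 \times 3600\ \text{s/year}}{24\ \text{s/death}} = \frac{31\,536\,000}{24} = 1\,314\,000\ \text{deaths per year}
$$

In other words, one death every 24 seconds corresponds to roughly 1.3 million road deaths a year worldwide.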

Going forward

Building public acceptance requires a relationship based on trust, and transparency is key to that trust. Education is a central part of this.

Demonstrating the advantages and personal benefits of self-driving cars, such as time saved, increased comfort and improved safety, is an excellent place to start. Educating potential users about possible use cases and their own role, and communicating transparently, both in the process itself and in messaging from manufacturers and software developers, about the current state of the technology, can also help.

Proving that these systems are safe for people, and raising awareness of the economic, environmental and ethical value they add to society, also helps.

Public acceptance of autonomous vehicles is essential if society is to reap the intended benefits, such as reduced road traffic incidents, congestion, land usage and environmental pollution.