Imagine smart glasses that are smooth, sleek, practical, and intuitive. No accidental commands, false triggers, latency, or poor scalability from fragile capacitive sensing.

Ultrasound and multimodal user interface pioneer UltraSense believes the bottleneck to achieving a great AR UX lies not in displays or optics, but in the silicon that sits just beneath the frame.

I spoke with Mo Maghsoudnia, Founder and CEO of UltraSense, about the company’s UltraTouch AR 2 chip and why he sees it as a turning point for scalable, reliable AR glasses design.

From smartphones to solid-state interfaces

Founded just over six years ago, UltraSense set out to address an emerging problem in smartphones: as demand grew for thinner designs and curved ‘waterfall’ displays, bulky mechanical buttons were becoming an obstacle to sleeker devices.

“What we have come up with, which is really the core technology for the company, is we have this ultrasound platform that we are using as a user interface for the next generation of devices,” Maghsoudnia explained.

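Capacitive sensing is the dominant touch interface technology. It works well through thin glass and plastic but struggles with metal, which is conductive and shields the electric field a capacitive sensor relies on to detect a finger.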

“The only way that you can make a touch user interface through metal layers is ultrasound,” he continued.

Once UltraSense extended touch sensing to materials that capacitive sensing struggles with, interest grew, especially from the automotive sector and premium OEMs looking to replace mechanical switches with solid-state interfaces made from materials such as metal, wood veneer, or stone veneer.

Overcoming UI weaknesses in AR glasses

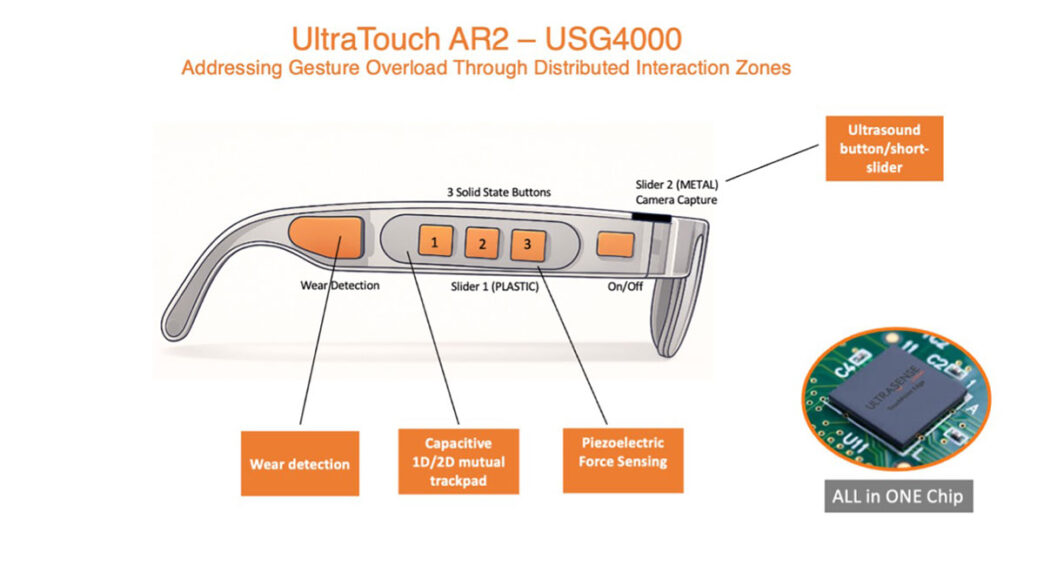

Interaction design for AR glasses is a challenge: the devices are always on, battery-constrained, and limited to very small interaction surfaces. Early designs leaned heavily on capacitive touchpads mounted on the side of the frame, often a single narrow strip expected to handle every function. As demand for more functionality grew, that approach became impractical. Capacitive sensors are also vulnerable to environmental conditions, a problem that is magnified when the device is worn on the face.

“You get a lot of false triggering with capacitive. If your hair is wet … if you’re sweating. It causes extensive false triggering,” explained Maghsoudnia.

These issues may be tolerable at the prototype stage, but they become critical as volumes increase and devices move toward mass-market expectations.

Cap Force, Ultra Force, and multipoint interfaces

The company’s answer to these challenges is a blend of touch and force sensing, branded as ‘Cap Force’ (capacitive plus force) and ‘Ultra Force’ (ultrasound plus force). According to UltraSense, fusing these modalities dramatically reduces false activations.

“The moisture could trigger one of the modalities, but not the other,” Maghsoudnia explained. “Therefore, we can filter out the false triggering that you see related to the environment.”
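The filtering idea in this quote can be illustrated with a simple rule: report a touch only when both channels agree. The sketch below is a minimal illustration that assumes normalized readings and made-up thresholds; `CAP_THRESHOLD`, `FORCE_THRESHOLD`, `Sample`, and `is_touch` are all hypothetical names. UltraSense’s actual fusion algorithms are not described here and are likely considerably more sophisticated than a two-input AND.

```python
from dataclasses import dataclass

# Hypothetical thresholds on normalized readings; real values would come from calibration.
CAP_THRESHOLD = 0.5    # capacitive channel
FORCE_THRESHOLD = 0.3  # force channel


@dataclass
class Sample:
    cap: float    # capacitive channel reading
    force: float  # force channel reading


def is_touch(sample: Sample) -> bool:
    """Report a touch only when both modalities agree.

    Sweat or wet hair can raise the capacitive reading on its own, but it does
    not press on the frame, so the force channel stays low and the event is rejected.
    """
    cap_active = sample.cap >= CAP_THRESHOLD
    force_active = sample.force >= FORCE_THRESHOLD
    return cap_active and force_active


samples = {
    "finger press": Sample(cap=0.8, force=0.6),
    "sweat / wet hair": Sample(cap=0.9, force=0.05),
    "frame bump, no touch": Sample(cap=0.1, force=0.4),
}

for label, s in samples.items():
    print(f"{label}: {'touch' if is_touch(s) else 'rejected'}")
```

Only the first case registers as a touch: moisture trips the capacitive channel alone, and a knock on the frame trips the force channel alone.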

This fusion allows interaction to be distributed across the frame rather than concentrated in a single strip. Virtual solid-state buttons can be placed in different locations, each mapped to a specific function such as volume, calls, display controls, or media playback. Wear detection is also built in, allowing the system to determine whether the glasses are actually on the user’s face.
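A distributed layout like this amounts to a lookup from button location to function. The sketch below assumes four hypothetical zones (`Zone`, `BUTTON_MAP`, and `dispatch` are illustrative names, not UltraSense’s API) and also assumes that wear detection is used to ignore presses while the glasses are off the face; the article only says the system can tell whether the glasses are being worn.

```python
from enum import Enum
from typing import Optional


class Zone(Enum):
    # Hypothetical virtual button locations along the frame.
    LEFT_TEMPLE_FRONT = "left_temple_front"
    LEFT_TEMPLE_REAR = "left_temple_rear"
    RIGHT_TEMPLE_FRONT = "right_temple_front"
    RIGHT_TEMPLE_REAR = "right_temple_rear"


# Hypothetical mapping of virtual solid-state buttons to functions.
BUTTON_MAP = {
    Zone.LEFT_TEMPLE_FRONT: "volume_up",
    Zone.LEFT_TEMPLE_REAR: "play_pause",
    Zone.RIGHT_TEMPLE_FRONT: "answer_call",
    Zone.RIGHT_TEMPLE_REAR: "toggle_display",
}


def dispatch(zone: Zone, worn: bool) -> Optional[str]:
    """Return the action for a confirmed press, or None if the glasses are not worn."""
    if not worn:
        # Assumed policy: ignore presses while the glasses are off the face.
        return None
    return BUTTON_MAP.get(zone)


print(dispatch(Zone.LEFT_TEMPLE_FRONT, worn=True))    # volume_up
print(dispatch(Zone.RIGHT_TEMPLE_REAR, worn=True))    # toggle_display
print(dispatch(Zone.RIGHT_TEMPLE_FRONT, worn=False))  # None
```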

For camera operation, where reliability and tactile feedback matter, ultrasound and force sensing enable shutter-style controls without using bulky mechanical buttons. The aim is to mirror the clarity of smartphone interaction, without overloading the user with hard-to-remember gestures.
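One common way to make a force-based shutter fire exactly once per press is hysteresis: a higher threshold to register a press and a lower one to re-arm. This is a generic debouncing technique offered as an illustration, not a description of how UltraSense implements its shutter control. The thresholds `PRESS_N` and `RELEASE_N` and the function `shutter_events` are hypothetical.

```python
from typing import Iterable, List

# Hypothetical force thresholds in newtons.
PRESS_N = 2.0    # force needed to register a press
RELEASE_N = 1.0  # force must fall below this before another press can fire


def shutter_events(force_samples: Iterable[float]) -> List[int]:
    """Return the sample indices at which a shutter 'click' is registered.

    Two thresholds mean that noise around a single threshold cannot fire
    repeated captures during one press.
    """
    pressed = False
    events = []
    for i, f in enumerate(force_samples):
        if not pressed and f >= PRESS_N:
            pressed = True
            events.append(i)   # fire once, on the rising edge
        elif pressed and f <= RELEASE_N:
            pressed = False    # re-arm only after a clear release
    return events


# One press with noise around 2 N, a release, then a second press.
trace = [0.2, 1.5, 2.1, 1.9, 2.2, 1.8, 0.5, 0.3, 2.5, 2.4, 0.4]
print(shutter_events(trace))  # [2, 8]
```

With a single 2 N threshold, the dip to 1.9 N and recovery to 2.2 N in the first press would have triggered a second, unwanted capture.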

The UltraTouch AR 2 chip

The company’s flagship UltraTouch AR 2 chip integrates sensing, sensor fusion, algorithms, and application software into a single compact piece of silicon. At around 3 × 1.5 × 0.2 mm, it is extremely thin, and it is designed for low power consumption, a vital feature for wearable, always-on devices.

Many other systems rely on the main application processor for sensor fusion, which can introduce latency and increase power consumption. UltraSense avoids this by designing the chip as a dedicated human-machine interface (HMI) controller that handles fusion itself. Drawing on the company’s smartphone background, Maghsoudnia stressed the importance of a device being able to wake straight out of the box, without first being charged.
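The power argument comes down to how often the main processor has to wake. The sketch below is a simplified model under stated assumptions: a hypothetical `HMIController` consumes every raw sample itself and raises an event to the host only for confirmed touches, with a plain threshold standing in for the on-chip fusion. The comparison figure assumes that host-side fusion would require the host to wake for every sample, which real systems may mitigate with batching.

```python
from typing import Callable, List, Sequence


class HMIController:
    """Hypothetical dedicated controller: processes raw samples locally and
    wakes the host only when it has a confirmed event."""

    def __init__(self, detect: Callable[[float], bool], wake_host: Callable[[int], None]):
        self.detect = detect        # placeholder for on-chip sensing and fusion
        self.wake_host = wake_host  # e.g. an interrupt to the application processor

    def run(self, samples: Sequence[float]) -> None:
        for i, s in enumerate(samples):
            if self.detect(s):
                self.wake_host(i)   # host wakes only for real events


host_wakeups: List[int] = []
ctrl = HMIController(detect=lambda s: s > 0.5, wake_host=host_wakeups.append)

samples = [0.1] * 98 + [0.9, 0.1]  # 100 samples containing one genuine touch
ctrl.run(samples)

print(f"samples processed on the controller: {len(samples)}")  # 100
print(f"host wake-ups: {len(host_wakeups)}")  # 1, versus 100 if the host polled every sample
```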

Manufacturing without disruption

Another major benefit is the minimal manufacturing impact for OEMs. The force-sensing layer can be added as an extra flexible printed circuit (FPC) layer less than 100 microns thick, without altering the overall design. For metal frames, piezoelectric films provide similar force and ultrasound capabilities, enabling ultra-thin form factors and lower weight, another advantage over bulkier plastic frames.

“You can actually go to a smaller weight on your glasses with metal frames that you cannot do with plastic,” said Maghsoudnia.

A UI built for scale

Maghsoudnia argues that AR glasses will only succeed if two conditions are met:

- The glasses must look like everyday eyewear

- They must be intuitive to use

The UltraTouch AR 2 chip is designed to address both, integrating sensing and interface logic tightly enough that richer controls need not come at the expense of style or ease of use.

By Sheryl Miles, Associate Editor

This article originally appeared in the January ’26 magazine issue of Electronic Specifier Design. See ES’s Magazine Archives for more featured publications.