In Applied Physics Letters, published by AIP Publishing, researchers at the University of Wisconsin-Madison, Washington University in St. Louis, and OmniVision Technologies highlight the latest nanostructured components integrated on image sensor chips that are most likely to make the biggest impact in multimodal imaging.

The developments could enable autonomous vehicles to see around corners rather than only along a straight line, biomedical imaging to detect abnormalities at different tissue depths, and telescopes to see through interstellar dust.

“Image sensors will gradually undergo a transition to become the ideal artificial eyes of machines,” co-author Yurui Qu, from the University of Wisconsin-Madison, said. “An evolution leveraging the remarkable achievement of existing imaging sensors is likely to generate more immediate impacts.”

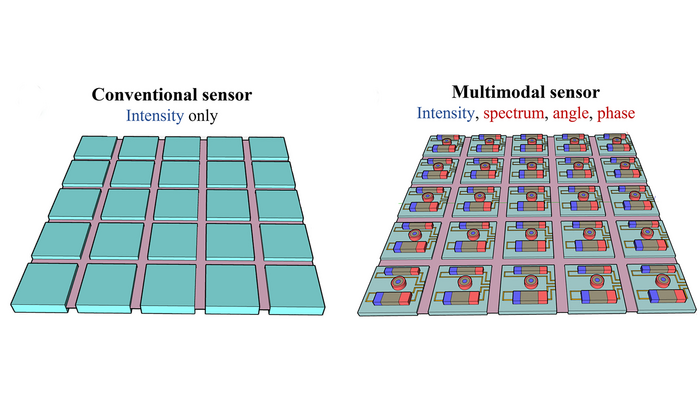

Image sensors, which converts light into electrical signals, are composed of millions of pixels on a single chip. The challenge is how to combine and miniaturise multifunctional components as part of the sensor.

In their own work, the researchers detailed a promising approach to detect multiple-band spectra by fabricating an on-chip spectrometer. They deposited photonic crystal filters made up of silicon directly on top of the pixels to create complex interactions between incident light and the sensor.

The schematics of (a) a conventional sensor that can detect only light intensity and (b) a nanostructured multimodal sensor, which can detect various qualities of light through the light-matter interactions at subwavelength scale. Credit: Yurui Qu and Soongyu Yi

The pixels beneath the filters record the distribution of light energy, from which spectral information can be inferred. The device, less than a hundredth of a square inch in size, is programmable to meet various dynamic ranges, resolution levels, and almost any spectral regime from visible to infrared.
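How a spectrum might be inferred from such pixel readings can be illustrated with a toy model. The sketch below is not the researchers' method: it assumes each pixel reading is a weighted sum of the light in a few spectral bands, with the weights set by that pixel's filter, and it recovers the spectrum by solving the resulting linear system. The cosine-shaped transmission curves, the eight bands, and all function names are hypothetical stand-ins for measured filter responses.

```python
import math


def make_filters(n):
    """Hypothetical transmission curves: entry [i][j] is filter i's transmission in band j.

    Cosine shapes are an assumption chosen so the toy system is well conditioned;
    real photonic crystal filters would be characterised by measurement.
    """
    return [
        [0.5 + 0.5 * math.cos(math.pi * i * (j + 0.5) / n) for j in range(n)]
        for i in range(n)
    ]


def pixel_readings(filters, spectrum):
    """Forward model: each pixel sums the spectrum weighted by its filter."""
    return [sum(t * s for t, s in zip(row, spectrum)) for row in filters]


def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [b] for row, b in zip(matrix, rhs)]
    for col in range(n):
        # Swap in the row with the largest entry in this column for stability.
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    # Back-substitution on the upper-triangular system.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - tail) / aug[r][r]
    return x


def reconstruct_spectrum(filters, readings):
    """Infer the per-band spectrum from the pixel readings (noise-free toy case)."""
    return solve(filters, readings)


filters = make_filters(8)
true_spectrum = [0.1, 0.4, 0.9, 0.6, 0.2, 0.05, 0.3, 0.7]  # made-up test input
readings = pixel_readings(filters, true_spectrum)
estimate = reconstruct_spectrum(filters, readings)
```

In this noise-free toy case the estimate matches the input spectrum to within rounding error. A practical reconstruction would probably need to cope with sensor noise, for example by using more filters than spectral bands and a regularised fit.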

The researchers also built a component that detects angular information to measure depth and construct 3D shapes at subcellular scales. Their work was inspired by the directional hearing of animals such as geckos, whose heads are too small to determine where sound is coming from in the same way humans and other animals can. Instead, geckos use coupled eardrums to measure the direction of sound within a size that is orders of magnitude smaller than the corresponding acoustic wavelength.

Similarly, pairs of silicon nanowires were constructed as resonators to support optical resonance. The optical energy stored in the two resonators is sensitive to the incident angle: the wire closest to the light sends the stronger current. By comparing the currents from the two wires, the angle of the incoming light waves can be determined.
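The comparison of the two currents can be sketched in a few lines. This is a toy illustration rather than the device's actual response: it assumes the normalised difference between the two currents grows as the sine of the incident angle, scaled by a calibration constant. That relation, the constant `MAX_CONTRAST`, and the function names are all hypothetical; a real sensor would rely on a measured calibration curve.

```python
import math

# Hypothetical calibration constant: current contrast at 90 degrees incidence.
MAX_CONTRAST = 0.6


def simulated_currents(angle_deg, total=1.0):
    """Toy forward model: split the total photocurrent between the two wires.

    Assumes the contrast between the wires equals MAX_CONTRAST * sin(angle).
    """
    contrast = MAX_CONTRAST * math.sin(math.radians(angle_deg))
    return total * (1 + contrast) / 2, total * (1 - contrast) / 2


def estimate_angle(current_a, current_b):
    """Estimate the incident angle in degrees from the two wire currents."""
    # Dividing by the sum removes the dependence on overall brightness.
    contrast = (current_a - current_b) / (current_a + current_b)
    # Clamp so that measurement noise cannot push asin outside its domain.
    ratio = max(-1.0, min(1.0, contrast / MAX_CONTRAST))
    return math.degrees(math.asin(ratio))


i_a, i_b = simulated_currents(25.0, total=3.2e-9)  # made-up current level
angle = estimate_angle(i_a, i_b)
```

Normalising the difference by the sum of the two currents means the estimate depends only on how the light is shared between the wires, not on how bright the source is.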

Millions of these nanowires can be placed on a one-square-millimetre chip. The research could support advances in lensless cameras, augmented reality, and robotic vision.