Dr. Dominic Williamson, a University of Sydney quantum physicist, has developed a new approach to quantum error correction that could significantly reduce the number of physical qubits required to build large-scale, fault-tolerant quantum computers.

Dr. Williamson undertook the work during an industry placement with the Quantum Information Theory and Error Correction group at IBM in California. Elements of the design have since been incorporated into IBM’s long-term roadmap for building large-scale fault-tolerant quantum computers, as detailed in a blog post from IBM. The study has been published in Nature Physics.

At the heart of the new approach is an application of gauge theory, which allows a system to keep track of global properties, such as those spread across a ‘quantum hard drive’, without forcing the quantum states of individual qubits to collapse.

“We’re at a point where theory and experiment are beginning to align,” Dr. Williamson said. “The big question now is how to design quantum computers that can be scaled efficiently to solve useful problems. Our work provides a promising blueprint.”

Transformative possibilities

Quantum computers promise transformative advances in multiple fields. They do so by harnessing the ‘superposition’ and ‘destructive interference’ of quantum states, opening computational pathways to problems beyond the scope of classical computers.

But this power comes at a cost. Quantum states are fragile: even the slightest interaction with the surrounding environment can collapse a superposition into a classical state, erasing the quantum advantage. Overcoming this fragility is one of the central challenges in building useful quantum technologies.

“Quantum computers perform calculations in a fundamentally different way to classical machines,” said Dr. Williamson. “But any unintended interaction with the environment can destroy the very quantum effects that give them their power.”

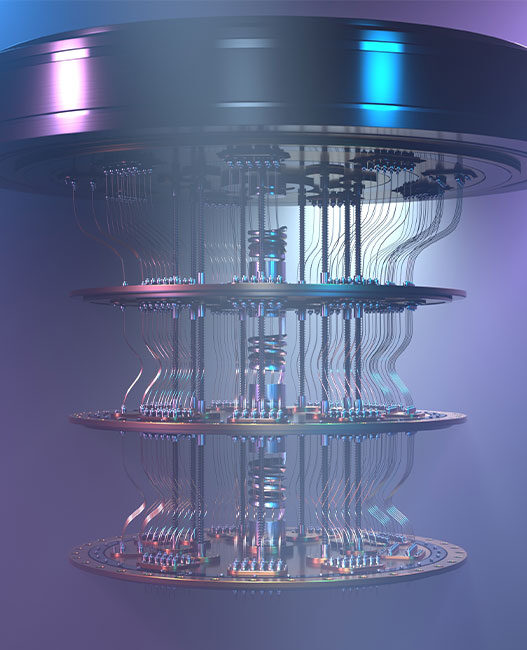

Quantum error correction addresses this challenge by redesigning how quantum information is stored and processed. Instead of protecting individual quantum bits, or qubits, directly, information is encoded across many physical qubits in a way that allows errors to be detected and corrected without disturbing the computation.
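The idea of detecting an error without reading the stored information can be sketched with a classical analogue: the three-bit repetition code. This is only an illustration and not the scheme from the study. A real quantum code measures such parities with extra qubits and must also handle phase errors. The function names below are hypothetical.

```python
import random


def encode(bit):
    """Spread one logical bit across three physical bits."""
    return [bit, bit, bit]


def syndrome(block):
    """Two parity checks on neighbouring pairs.

    The result is the same for an encoded 0 and an encoded 1,
    so it locates an error without revealing the logical bit.
    """
    return (block[0] ^ block[1], block[1] ^ block[2])


# Each syndrome points at the single flipped position (None = no error).
FLIPPED_POSITION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(block):
    """Undo a single bit flip located by the syndrome."""
    position = FLIPPED_POSITION[syndrome(block)]
    fixed = list(block)
    if position is not None:
        fixed[position] ^= 1
    return fixed


def decode(block):
    """Read out the logical bit by majority vote."""
    return int(sum(block) >= 2)


if __name__ == "__main__":
    for logical in (0, 1):
        block = encode(logical)
        block[random.randrange(3)] ^= 1  # inject one random bit flip
        print(logical, syndrome(block), decode(correct(block)))
```

In this sketch any single flip is repaired, and the syndrome of an undamaged block is the same whether it stores 0 or 1.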

A qubit is comparable to a transistor in classical computing, acting as a ‘switch’. Whereas a classical switch is either on or off, a qubit can exist in a superposition, a weighted combination of both outcomes at once, allowing for fundamentally different types of computing algorithms.

The difficulty with storing information across qubits is the overhead: the number of additional qubits and operations required to protect the information. Historically, this overhead has grown faster than the size of the computation itself, making large-scale machines impractical.

Recent theoretical breakthroughs have changed that picture, introducing designs for ‘quantum hard drives’ where the cost of storing quantum information grows only in proportion to the amount of information being stored.
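The difference between the two scaling behaviours can be made concrete with some rough arithmetic. The sketch below assumes, for the older style of design, one rotated surface-code patch per logical qubit, which uses d × d data qubits plus d × d − 1 measurement qubits at code distance d. For the ‘quantum hard drive’ style it assumes a purely hypothetical fixed cost of 20 physical qubits per logical qubit. Neither figure comes from the study.

```python
def surface_code_qubits(logical_qubits, distance):
    """Physical qubits when every logical qubit gets its own rotated
    surface-code patch: d*d data qubits plus d*d - 1 measurement qubits."""
    return logical_qubits * (2 * distance * distance - 1)


def constant_overhead_qubits(logical_qubits, physical_per_logical):
    """Physical qubits for a hypothetical memory whose cost per logical
    qubit stays fixed as the machine grows."""
    return logical_qubits * physical_per_logical


if __name__ == "__main__":
    logical_qubits = 100
    assumed_fixed_cost = 20  # illustrative assumption, not a measured value
    for distance in (5, 11, 21):
        patch_total = surface_code_qubits(logical_qubits, distance)
        fixed_total = constant_overhead_qubits(logical_qubits, assumed_fixed_cost)
        print(distance, patch_total, fixed_total)
```

Larger computations need a larger code distance, so the first column of totals keeps climbing while the second stays proportional to the number of logical qubits.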

Dr. Williamson’s new work is tackling the next major challenge: how to perform logical processing on this efficiently stored quantum information without losing those efficiency gains.

The gauge theory

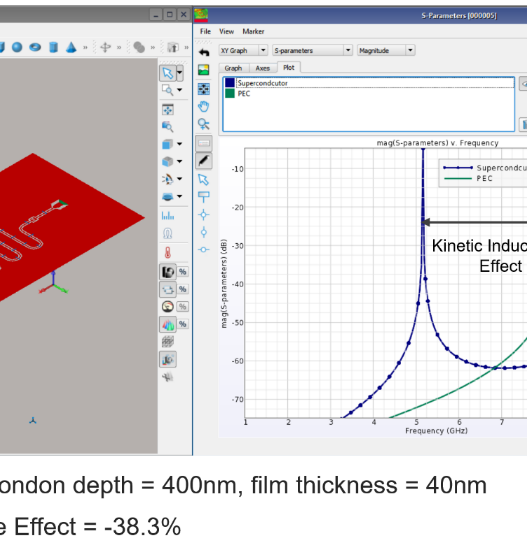

The research draws inspiration from lattice gauge theory in physics: a framework that reconciles local interactions with global conservation laws.

Dr. Williamson explained: “Gauge theory introduces additional degrees of freedom that track global properties without forcing the system into a definite local state. We realised a similar idea could be used to process logical quantum information.

“A gauge is just a mathematical construct that provides a set of local coordinates for any defined system we are studying. What is useful for us is that gauge theory allows for transformations of the coordinate system at the local level, while physically significant global properties of the system remain invariant.

“This is an idea deeply integrated into our understanding of the Standard Model of particle physics and the field theory underlying it. We took this idea, and have applied it to quantum computers, offering an efficient pathway to reduce errors while using up less precious computing power.”
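The invariance described here can be illustrated with a toy model, assuming the simplest possible gauge field: a value of +1 or −1 on each edge of a small graph. A local transformation at a vertex flips the sign of every edge touching it, yet the product of values around any closed loop never changes. The graph, names and functions below are hypothetical and are not taken from the study.

```python
import random

# Four vertices joined in a square, plus one diagonal.
EDGES = [(0, 1), (1, 2), (2, 3), (3, 0), (0, 2)]
SQUARE_LOOP = [(0, 1), (1, 2), (2, 3), (3, 0)]


def gauge_transform(field, vertex):
    """Flip the sign on every edge touching `vertex` (a local change)."""
    return {
        edge: (-value if vertex in edge else value)
        for edge, value in field.items()
    }


def loop_product(field, loop):
    """Product of edge values around a closed loop.

    Every vertex on a loop touches exactly two of its edges, so a local
    flip changes two signs and leaves the product as it was.
    """
    product = 1
    for edge in loop:
        product *= field[edge]
    return product


if __name__ == "__main__":
    field = {edge: random.choice((1, -1)) for edge in EDGES}
    before = loop_product(field, SQUARE_LOOP)
    for _ in range(5):
        field = gauge_transform(field, random.randrange(4))
    print(before, loop_product(field, SQUARE_LOOP))
```

Individual edge values change with each transformation, but the two loop products printed at the end always agree.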

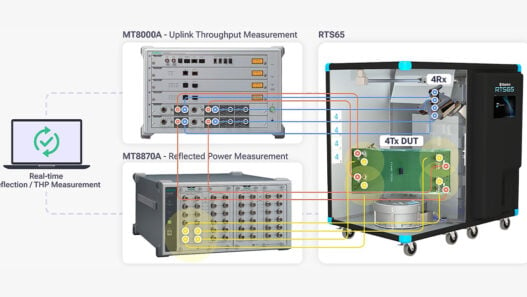

In the new design, a logical processor system is paired with an efficient quantum memory. Synthetic ‘gauge-like’ degrees of freedom are introduced to measure global logical information without locally collapsing the encoded quantum state. These components are arranged using highly connected mathematical structures known as expander graphs, enabling efficient scaling.
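What ‘highly connected’ means can be quantified by a graph’s edge expansion: the smallest ratio, over all groups containing at most half the vertices, of edges leaving the group to vertices inside it. The sketch below computes this by brute force for two ten-vertex graphs. The Petersen graph is used only as a small, well-connected stand-in; the article does not specify which expander graphs the study uses.

```python
from itertools import combinations


def cycle_graph(n):
    """A ring of n vertices, each joined to its two neighbours."""
    return [(i, (i + 1) % n) for i in range(n)]


def petersen_graph():
    """The Petersen graph: 10 vertices, every vertex has 3 neighbours."""
    outer = [(i, (i + 1) % 5) for i in range(5)]
    spokes = [(i, i + 5) for i in range(5)]
    inner = [(5 + i, 5 + (i + 2) % 5) for i in range(5)]
    return outer + spokes + inner


def edge_expansion(n, edges):
    """Minimum of (edges leaving S) / |S| over all non-empty vertex
    sets S with at most n // 2 members. Brute force, small n only."""
    best = float("inf")
    for size in range(1, n // 2 + 1):
        for subset in combinations(range(n), size):
            members = set(subset)
            boundary = sum((u in members) != (v in members) for u, v in edges)
            best = min(best, boundary / size)
    return best


if __name__ == "__main__":
    print("cycle   ", edge_expansion(10, cycle_graph(10)))
    print("petersen", edge_expansion(10, petersen_graph()))
```

The ring comes out at 0.4, because cutting it in half severs only two edges, while the Petersen graph reaches 1.0 with just one extra connection per vertex. In a ring this ratio keeps shrinking as vertices are added, whereas a family of expander graphs keeps it bounded away from zero.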

The result is a flexible architecture for error-corrected quantum computation that preserves the efficiency of next-generation quantum memory designs while adding processing capability.

The implications, if the approach proves out, extend well beyond computing. Quantum machines capable of running fault-tolerant calculations at scale are expected to transform fields including cryptography, drug discovery, materials science and climate modelling – problems that remain beyond the reach of even the most powerful classical computers.