Maia 200 is Microsoft’s latest custom AI inference accelerator, designed to address the growing performance, efficiency, and scalability requirements of large language models and other generative AI workloads.

The accelerator targets token-generation-heavy inference scenarios and forms part of Microsoft’s broader heterogeneous compute strategy across Azure. Its design emphasises low-precision compute, high-bandwidth memory, and system-level scalability using standard networking technologies.

Process technology and compute architecture

Maia 200 is manufactured on TSMC’s 3nm process and integrates more than 140 billion transistors per chip. The accelerator is optimised for low-precision inference, with native support for FP8 and FP4 tensor operations. This aligns with industry trends toward reduced numerical precision to improve throughput and efficiency for large-scale AI workloads.

Per chip, Maia 200 delivers more than 10 petaFLOPS of FP4 performance and over 5 petaFLOPS of FP8 performance within a 750W SoC thermal design envelope. The design targets large, production-scale models, with sufficient headroom for future increases in model size and complexity.
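To put the compute and power figures on a common scale, the following minimal sketch converts them into peak teraFLOPS per watt. It uses only the lower bounds quoted above and treats the 750W thermal design envelope as the power draw, which is an assumption; measured efficiency under a real workload will differ.

```python
# Lower-bound figures quoted in the article; sustained numbers will differ.
FP4_PFLOPS = 10.0   # "more than 10 petaFLOPS" of FP4
FP8_PFLOPS = 5.0    # "over 5 petaFLOPS" of FP8
TDP_WATTS = 750.0   # SoC thermal design envelope, used here as a proxy for power


def tflops_per_watt(pflops: float, watts: float) -> float:
    """Convert a peak petaFLOPS figure into teraFLOPS per watt."""
    return pflops * 1000.0 / watts


fp4_eff = tflops_per_watt(FP4_PFLOPS, TDP_WATTS)
fp8_eff = tflops_per_watt(FP8_PFLOPS, TDP_WATTS)

print(f"FP4: at least {fp4_eff:.1f} peak TFLOPS/W")  # 13.3
print(f"FP8: at least {fp8_eff:.1f} peak TFLOPS/W")  # 6.7
```

Halving the precision from FP8 to FP4 doubles the quoted peak figure within the same power envelope, which is the efficiency argument for low-precision inference in numerical form.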

Memory architecture and bandwidth

To address memory bandwidth and data movement bottlenecks common in inference workloads, Maia 200 incorporates a redesigned memory subsystem centred on narrow-precision data types and high-bandwidth access paths.

Each accelerator includes:

- 216GB of HBM3e memory

- Approximately 7TB/s of memory bandwidth

- 272MB of on-chip SRAM

This memory hierarchy is supported by specialised DMA engines and a dedicated on-die network-on-chip, enabling efficient movement of weights and activations. The design prioritises sustained token throughput and high utilisation of compute resources rather than peak FLOPS alone.
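A back-of-envelope sketch shows why memory bandwidth matters as much as peak FLOPS for token generation. It uses the figures above, with several assumptions that are not from Microsoft: GB and TB are read as decimal units, the model is dense, every weight is read once per generated token, and KV cache and activations are ignored. The 200-billion-parameter model is purely hypothetical.

```python
HBM_BYTES = 216e9        # 216GB of HBM3e (decimal GB assumed)
HBM_BW = 7e12            # approximately 7TB/s of memory bandwidth
PEAK_FP4_FLOPS = 10e15   # lower bound on FP4 peak from the article

BYTES_PER_PARAM = {"fp8": 1.0, "fp4": 0.5}


def max_params(hbm_bytes: float, fmt: str) -> float:
    """Largest weight count that fits in HBM, ignoring KV cache and activations."""
    return hbm_bytes / BYTES_PER_PARAM[fmt]


def ridge_point(peak_flops: float, bandwidth: float) -> float:
    """Roofline ridge: FLOPs needed per byte moved before compute becomes the limit."""
    return peak_flops / bandwidth


def decode_bound(params: float, fmt: str, bandwidth: float) -> float:
    """Upper bound on batch-1 tokens/s if all weights stream from HBM per token."""
    return bandwidth / (params * BYTES_PER_PARAM[fmt])


fp4_capacity = max_params(HBM_BYTES, "fp4")
ridge = ridge_point(PEAK_FP4_FLOPS, HBM_BW)
hypothetical_params = 200e9  # illustrative model size, not a real deployment
tokens_per_s = decode_bound(hypothetical_params, "fp4", HBM_BW)

print(f"FP4 weights that fit in HBM: {fp4_capacity / 1e9:.0f}B parameters")
print(f"Roofline ridge point: {ridge:.0f} FLOP/byte")
print(f"Batch-1 decode bound: {tokens_per_s:.0f} tokens/s")
```

Under these assumptions the ridge point is roughly 1,400 FLOP per byte, far above the arithmetic intensity of single-sequence decoding, so unbatched generation is limited by bandwidth rather than compute. This is consistent with the emphasis on sustained token throughput over peak FLOPS.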

Performance and cost efficiency positioning

Microsoft positions Maia 200 as its most efficient inference accelerator to date, citing approximately 30% better performance per dollar than the latest generation of inference hardware currently deployed across its infrastructure. Microsoft also reports FP4 performance three times that of third-generation Amazon Trainium, and FP8 performance above that of Google’s seventh-generation TPU.

While cross-vendor comparisons depend on workload characteristics and software maturity, these figures suggest Maia 200 is competitive with other hyperscaler-designed accelerators in the low-precision inference space.
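A performance-per-dollar gain is not the same as an equal percentage cost reduction. The short sketch below converts the quoted 30% figure into the implied saving for a fixed amount of inference work, assuming the gain applies uniformly to the workload in question (an assumption, since the basis of Microsoft's figure is not specified here).

```python
def cost_reduction(perf_per_dollar_gain: float) -> float:
    """Fractional cost saving for the same work, given a perf-per-dollar gain.

    If performance per dollar rises by a factor (1 + g), the cost of a fixed
    amount of work falls to 1 / (1 + g) of its previous value.
    """
    return 1.0 - 1.0 / (1.0 + perf_per_dollar_gain)


saving = cost_reduction(0.30)
print(f"Implied cost saving for the same work: {saving:.1%}")  # 23.1%
```

In other words, a 30% improvement in performance per dollar corresponds to about 23% lower cost for the same output, not 30%.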

System-level scaling and networking

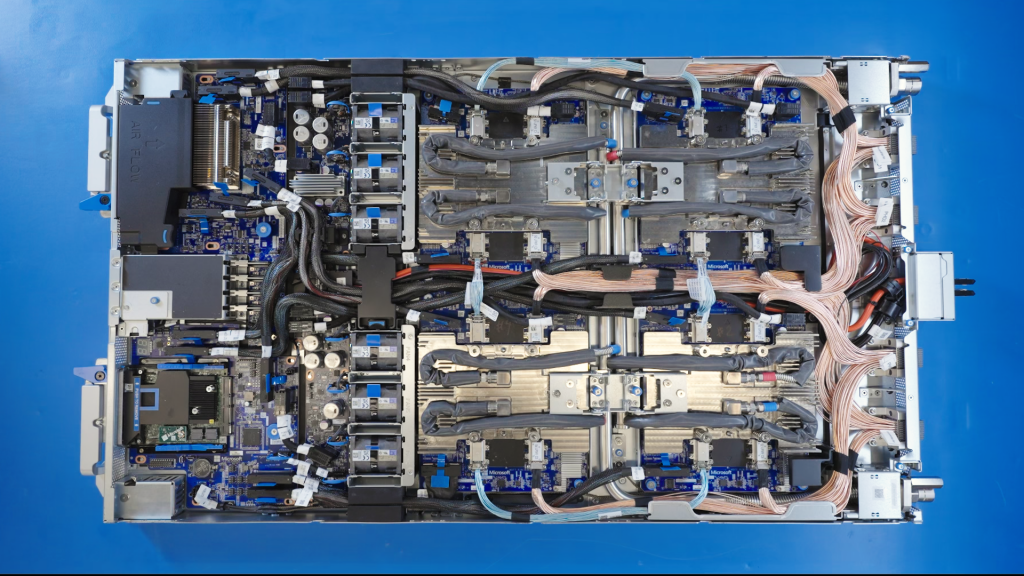

At the system level, Maia 200 introduces a two-tier scale-up architecture based on standard Ethernet rather than proprietary interconnects. Each accelerator exposes 2.8TB/s of bidirectional scale-up bandwidth and supports high-performance collective operations across clusters of up to 6,144 accelerators.

Within a single tray, four Maia accelerators are connected via direct, non-switched links to keep high-bandwidth communication local. The same transport protocol is used for intra-rack and inter-rack communication, enabling consistent performance characteristics as workloads scale across nodes, racks, and clusters. This unified approach is intended to reduce network complexity, minimise communication hops, and improve overall cost efficiency at scale.
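The per-chip and per-tray figures can be combined into cluster-level totals. The sketch below does that arithmetic for a maximum-size cluster; the aggregates are simple sums of per-chip peak values and say nothing about achievable performance, which depends on the workload and collective efficiency.

```python
ACCELERATORS = 6144         # maximum cluster size quoted in the article
PER_TRAY = 4                # accelerators per tray
HBM_GB_PER_CHIP = 216       # HBM3e capacity per accelerator
FP4_PFLOPS_PER_CHIP = 10    # lower bound on FP4 peak per accelerator

trays, leftover = divmod(ACCELERATORS, PER_TRAY)
total_hbm_gb = ACCELERATORS * HBM_GB_PER_CHIP
total_fp4_pflops = ACCELERATORS * FP4_PFLOPS_PER_CHIP

print(f"Trays in a full cluster: {trays} (leftover accelerators: {leftover})")
print(f"Aggregate HBM: {total_hbm_gb / 1e6:.2f} PB")
print(f"Aggregate FP4 peak: at least {total_fp4_pflops / 1000:.2f} exaFLOPS")
```

A full cluster therefore spans 1,536 trays and holds about 1.33 PB of HBM (decimal units assumed), with a summed FP4 peak above 61 exaFLOPS.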

Deployment and data centre integration

Maia 200 is currently deployed in Microsoft’s US Central data centre region, with additional regions planned. The accelerator integrates natively with Azure’s control plane, providing telemetry, diagnostics, security, and management at both chip and rack levels.

The platform also incorporates second-generation, closed-loop liquid cooling infrastructure to support high-density deployments within standard data centre power and thermal constraints. These features are designed to support production inference workloads with high availability and predictable performance.

Software stack and developer enablement

Microsoft is previewing a Maia software development kit to support model deployment and optimisation on the new accelerator. The SDK includes integration with PyTorch, a Triton compiler, optimised kernel libraries, and access to a low-level programming language (NPL) for fine-grained control.

In addition, the SDK provides simulation and cost modelling tools intended to help developers evaluate performance and efficiency trade-offs earlier in the development lifecycle. The software stack is designed to support model portability across heterogeneous accelerators while enabling Maia-specific optimisations where required.

Target workloads and use cases

Maia 200 is intended to serve inference workloads across Microsoft’s AI services, from large language models such as OpenAI’s GPT-5.2 to internal services such as Microsoft 365 Copilot. The accelerator is also being used for synthetic data generation and reinforcement learning workflows, where high-throughput inference is a critical component of data generation and model refinement pipelines.

Pre-silicon co-design and time-to-deployment

A key aspect of Maia 200’s development was extensive pre-silicon modelling of both compute and communication patterns associated with large-scale AI workloads. This co-design approach allowed Microsoft to optimise silicon, networking, and system software concurrently.

As a result, AI workloads were reportedly running on Maia 200 hardware within days of first packaged silicon availability, and the time from first silicon to data centre deployment was significantly reduced compared with previous infrastructure programmes. This approach is intended to improve utilisation rates and accelerate time to production for new hardware generations.

Outlook

Maia 200 is the latest step in a multi-generational accelerator roadmap. As AI models continue to grow in scale and inference demand increases, Microsoft’s strategy emphasises vertically integrated design across silicon, software, networking, and data centre infrastructure.

Future Maia generations are expected to build on this foundation, with incremental improvements in performance, efficiency, and scalability for large-scale AI inference workloads.