Edge devices such as security cameras, robots, industrial equipment, client PCs, and even toys can now support AI/ML features that give users new capabilities and experiences.

According to industry analyst firm ABI Research, the Edge AI chipset market has “experienced strong growth in the past and is expected to continue to grow to $71bn by 2024, with a CAGR of 31% between 2019 and 2024. Such strong growth is propelled by the migration of AI inference workloads to the edge, particularly in the smartphone, smart home, automotive, wearables, and robotics industries.”

However, making a client compute device “smart” adds new challenges to product design. AI/ML is an emerging technology, and many OEMs don’t have the in-house experience or time needed to design an AI/ML solution from scratch.

The algorithms used to train client compute devices are evolving rapidly, so developers are also looking for AI/ML solutions that are field-upgradable. But the most crucial question for many Edge AI/ML application developers is how to deliver the processing performance an AI/ML application needs in a device that runs on batteries.

To address these issues, application developers and OEMs need access to flexible hardware and software solutions that make those AI/ML-enabled experiences possible at low power. Since 2018, the Lattice sensAI solution stack has helped Lattice customers add AI/ML capabilities to new and existing product designs.

Lattice has announced that the latest version of the sensAI solution stack (v4.1) now includes a roadmap of user experience reference designs that bring AI/ML capabilities to client compute devices such as laptops.

The pandemic sharply increased the number of people using video conferencing applications to stay connected with work, friends, and family. The reference designs in the latest sensAI stack release use the vision and sound sensors in client devices to provide value-added user experiences around instant-on, presence detection, attention tracking, privacy, and video conferencing, while keeping power consumption low to maximize battery life.

Lattice sensAI solution stack v4.1 helps developers use sensors and AI/ML inferencing to provide new and improved user experiences for client compute devices.

OEMs can benefit in multiple ways by adding AI/ML support to their device designs with the Lattice sensAI stack, including:

- Up to 28% longer battery life compared with client devices that run AI applications on their CPUs.

- Support for in-field software updates to keep pace with evolving AI technologies.

- Scalability to run multiple use cases at low power by offloading AI data processing from the CPU.

- Broad support for popular sensor and SoC technologies.

Lattice will be adding more experiences to its Client Compute AI roadmap in future releases of the sensAI stack.

With support for Lattice CertusPro-NX, the latest addition to the Lattice Nexus FPGA lineup, the stack can also deliver the performance and accuracy gains required by the highly accurate object and defect detection applications used in automated industrial systems.

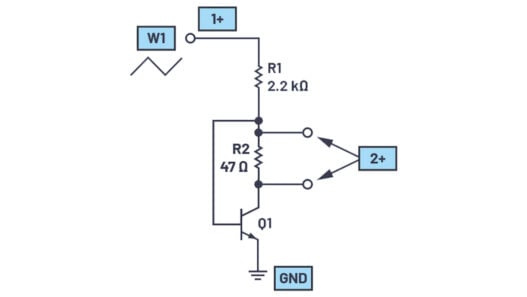

To facilitate the design of voice- and vision-based AI/ML applications for client devices, the stack supports a new hardware platform featuring an onboard image sensor, two I2S microphones, and expansion connectors for adding more sensors.

Software updates to the stack include an updated neural network compiler and support for Lattice sensAI Studio, a GUI-based tool with a library of AI models that can be configured and trained for popular use cases. sensAI Studio now supports AutoML features that enable the creation of ML models based on application and dataset targets. Several of the models, based on the MobileNet family of neural network architectures, are specifically optimized for running on CertusPro-NX FPGAs.

The stack is also compatible with other widely used ML platforms, including the latest versions of Caffe, Keras, TensorFlow, and TensorFlow Lite.