Opening CES 2026 in Las Vegas, Jensen Huang, founder and CEO of NVIDIA, launched Rubin, the company’s first extreme-codesigned AI platform.

“Computing has been fundamentally reshaped as a result of accelerated computing, as a result of artificial intelligence,” Huang said. “What that means is some $10 trillion or so of the last decade of computing is now being modernised to this new way of doing computing.”

Named for Vera Florence Cooper Rubin – the American astronomer whose discoveries transformed humanity’s understanding of the universe – the Rubin platform features the NVIDIA Vera Rubin NVL72 rack-scale solution and the NVIDIA HGX Rubin NVL8 system.

Comprising six new chips designed to work together as one AI supercomputer, NVIDIA Rubin is intended to help users build, deploy, and secure the world’s largest and most advanced AI systems at the lowest cost, accelerating mainstream AI adoption.

It uses extreme codesign across the six chips – the NVIDIA Vera CPU, NVIDIA Rubin GPU, NVIDIA NVLink 6 Switch, NVIDIA ConnectX-9 SuperNIC, NVIDIA BlueField-4 DPU and NVIDIA Spectrum-6 Ethernet Switch – to lower training time and inference token costs.

“Rubin arrives at exactly the right moment, as AI computing demand for both training and inference is going through the roof,” said Huang. “With our annual cadence of delivering a new generation of AI supercomputers – and extreme codesign across six new chips – Rubin takes a giant leap toward the next frontier of AI.”

With Rubin, NVIDIA aims to “push AI to the next frontier”.

Sam Altman, CEO of OpenAI, said: “Intelligence scales with compute. When we add more compute, models get more capable, solve harder problems and make a bigger impact for people. The NVIDIA Rubin platform helps us keep scaling this progress so advanced intelligence benefits everyone.”

Elon Musk, founder and CEO of xAI, said: “NVIDIA Rubin will be a rocket engine for AI. If you want to train and deploy frontier models at scale, this is the infrastructure you use – and Rubin will remind the world that NVIDIA is the gold standard.”

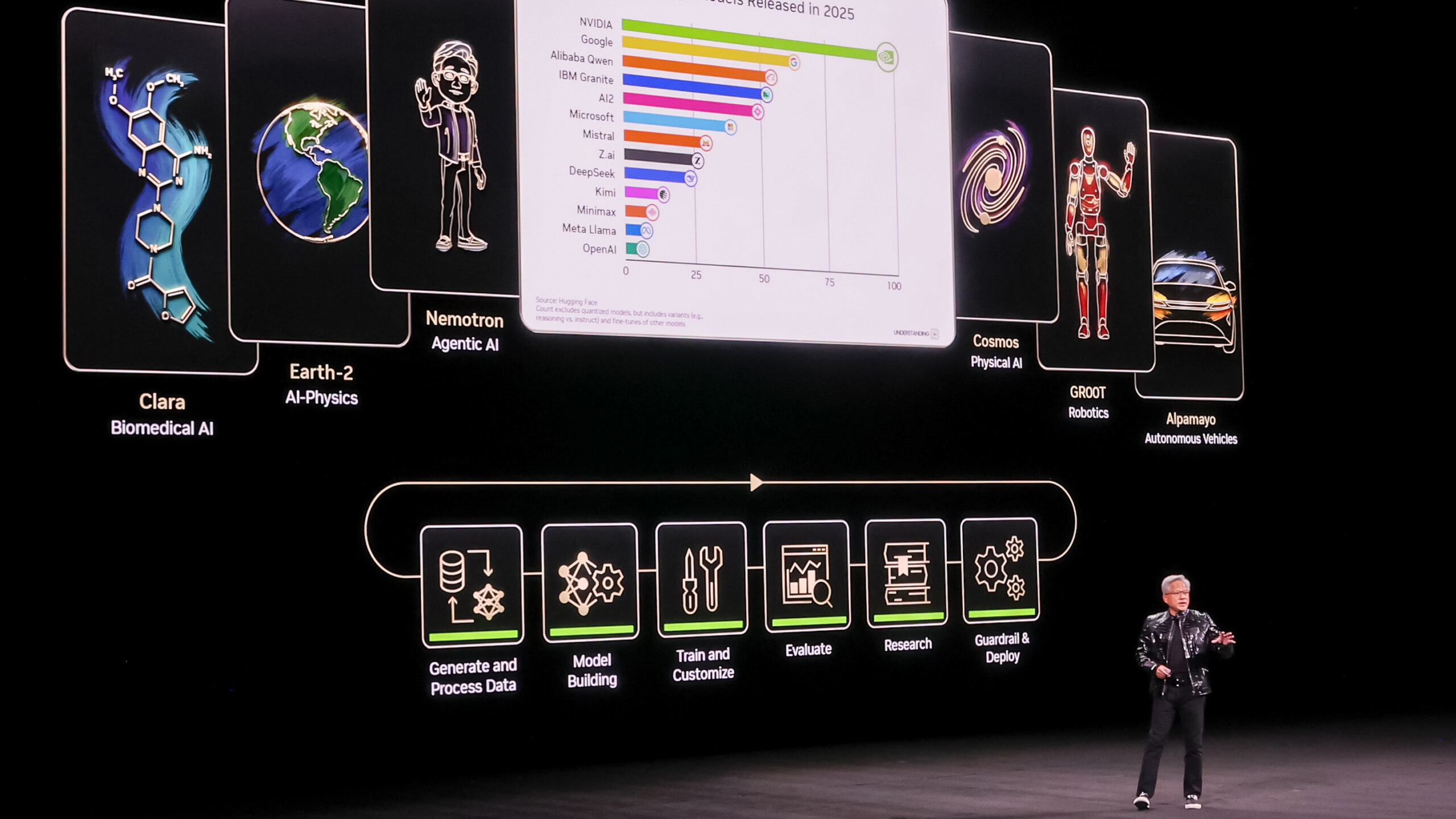

Alongside the platform, NVIDIA is building open models, trained on the company’s own supercomputers, so that “every company, every industry, every country, [can] be part of this AI revolution.”

The ChatGPT moment for robotics

NVIDIA also announced new frameworks and AI infrastructure for physical AI and unveiled robots from global partners.

The new technologies speed workflows across the entire robot development lifecycle to accelerate the next wave of robotics, including building generalist-specialist robots that can quickly learn many tasks.

Global industry leaders including Boston Dynamics, Caterpillar, Franka Robotics, Humanoid, LG Electronics, and NEURA Robotics are using the NVIDIA robotics stack to debut new AI-driven robots.

NVIDIA is building open models that allow developers to bypass resource-intensive pretraining and focus on creating the next generation of AI robots and autonomous machines. These new models, all available on Hugging Face, include:

- NVIDIA Cosmos Transfer 2.5 and NVIDIA Cosmos Predict 2.5 – open, fully customisable world models that enable physically based synthetic data generation and robot policy evaluation in simulation for physical AI

- NVIDIA Cosmos Reason 2, an open reasoning vision language model (VLM) that enables intelligent machines to see, understand, and act in the physical world like humans

- NVIDIA Isaac GR00T N1.6, an open reasoning vision language action (VLA) model, purpose-built for humanoid robots, that unlocks full-body control and uses NVIDIA Cosmos Reason for better reasoning and contextual understanding

“The ChatGPT moment for robotics is here. Breakthroughs in physical AI – models that understand the real world, reason, and plan actions – are unlocking entirely new applications,” said Huang. “NVIDIA’s full stack of Jetson robotics processors, CUDA, Omniverse, and open physical AI models empowers our global ecosystem of partners to transform industries with AI-driven robotics.”

Autonomous driving

NVIDIA also says it is the first to release an open reasoning VLA model designed to tackle long-tail autonomous driving challenges.

The NVIDIA Alpamayo family introduces chain-of-thought, reasoning-based vision language action (VLA) models that bring humanlike thinking to autonomous vehicle (AV) decision-making. These systems can think through novel or rare scenarios step by step, improving both driving capability and explainability, which is critical to scaling trust and safety in intelligent vehicles. They are underpinned by the NVIDIA Halos safety system.

Alpamayo integrates three foundational pillars – open models, simulation frameworks, and datasets – into a cohesive, open ecosystem that any automotive developer or research team can build upon.

Rather than running directly in-vehicle, Alpamayo models serve as large-scale teacher models that developers can fine-tune and distil into the backbones of their complete AV stacks.

Huang announced that the first passenger car featuring Alpamayo, built on the NVIDIA DRIVE full-stack autonomous vehicle platform, will soon be on the road: the all-new Mercedes-Benz CLA.

“As the automotive industry embraces physical AI, NVIDIA is the intelligence backbone that makes every vehicle programmable, updatable, and perpetually improving through data and software,” said Ali Kani, Vice President of Automotive at NVIDIA. “Starting with Mercedes-Benz and its incredible new CLA, we’re celebrating a stunning achievement in safety, design, engineering and AI-powered driving that will turn every car into a living, learning machine.”


Huang explained that NVIDIA now builds entire systems because it takes a full, optimised stack to deliver AI breakthroughs.

“Our job is to create the entire stack so that all of you can create incredible applications for the rest of the world,” he said.