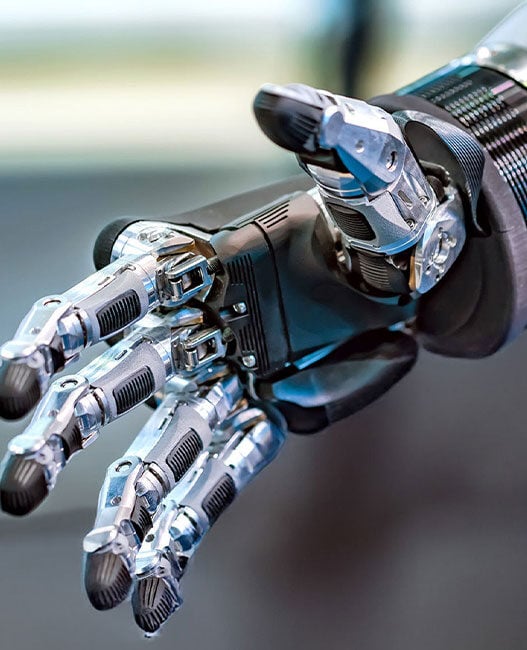

Automation is the backbone of the modern industrial facility, and robotics is its catalyst. Modern artificial intelligence (AI)-driven robotics capabilities are advancing rapidly, driving larger and more sophisticated industrial deployments. However, as automated systems expand both in scope and size throughout industrial footprints, the acts of collecting, aggregating, and analysing sensor data become increasingly difficult.

Each new sensor adds more signals, data, and demand to the system, as well as more risk. Bigger systems with more complicated designs and more data to process are inherently burdened with increased potential for error, lag, latency, and security breaches. And while AI and machine learning (ML) models can help streamline robot-driven operations, integrating them into these systems is a challenge in itself.

As modern industrial automation systems expand in size, become more autonomous, and increase overall connectivity, the number of potential entry points for attackers grows dramatically. To protect against an evolving threat landscape, developers will need to examine the underlying hardware that supports their increasingly distributed automated systems.

The need for sensor fusion

The reliable operation of today’s automated industrial facilities rests heavily on sensor fusion: the ability to combine and process data from various sensors, devices, and workflows, contextualising signals to enhance accuracy, visibility, and specificity. Sensor fusion helps to optimise and increase the value of analytics tools and the predictive insights they provide, ensuring minimal downtime while increasing overall throughput and efficiency.
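To make the idea of combining readings concrete, here is a minimal sketch of one textbook fusion technique, inverse-variance weighting, in which two independent estimates of the same quantity are averaged according to how much each is trusted. The function name, the sensor values, and the variances are all hypothetical and chosen purely for illustration; production systems typically use more elaborate methods, such as Kalman filtering.

```python
def fuse_measurements(readings):
    """Fuse independent (value, variance) readings by inverse-variance weighting.

    Each reading is weighted by 1 / variance, so less noisy sensors count more.
    Returns the fused value and the variance of the fused estimate.
    """
    if not readings:
        raise ValueError("need at least one reading")
    weights = [1.0 / variance for _, variance in readings]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, readings)) / total_weight
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance


# Hypothetical range-to-obstacle estimates in metres: (value, variance).
camera = (2.10, 0.04)  # assumed noisier vision-based estimate
lidar = (2.00, 0.01)   # assumed tighter LiDAR estimate

value, variance = fuse_measurements([camera, lidar])
print(f"fused range: {value:.3f} m, variance: {variance:.4f}")
```

Under these assumed numbers, the fused estimate sits closer to the LiDAR reading, and its variance is lower than that of either sensor alone, which is the accuracy benefit that fusion is meant to deliver.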

Contemporary AI and robotics professionals recognise the critical role that sensor fusion plays in bringing advanced robotics systems to the Edge. It’s a key enabler of real-time response capabilities, which 84% of those in the field deem (somewhat or highly) critical to system performance. When combined with precision motor control, functional safety, and security measures, sensor fusion helps to address many of the major challenges in designing automated robotics systems.

Unfortunately, significant challenges to deployment remain. Take the combination of camera and LiDAR sensors, for example: while 75.7% of surveyed leaders say they prefer this sensor fusion model, only 67.5% have successfully deployed camera-LiDAR systems. This gap reflects the barriers to adoption that have thus far hindered streamlined robotic automation implementations.

The challenges at hand

Regardless of the exact sensors and AI models involved, the sheer number of components that engineers need to support advanced automated robotic applications presents a significant challenge. Engineers have not yet fully overcome three key barriers to adoption:

Integration

Industrial robotics systems are complex and need connectivity across many advanced sensors that perform a diverse range of tasks. Linking the various parts of these systems and ensuring their usefulness requires flexible I/O and high performance at the chip level, posing significant problems for many general-purpose components.

While today’s processors use advanced processing nodes to shrink transistors, boost performance, and reduce die size and cost, those nodes have more I/O limitations and cannot easily accommodate legacy connectivity requirements.

Digital twins and calibration

Many facilities rely on robotic systems to reduce human error by automating precise, mission-critical tasks, and any misalignment or disconnect compromises that capability. Each robot’s internal parameters and physical movements must therefore match its digital model precisely. Unfortunately, environmental and other factors can affect how accurately a robot operates, so calibration must be continuously monitored and maintained.
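As a rough illustration of what continuous calibration monitoring can involve, the sketch below compares positions predicted by a digital model with positions actually measured on the robot, and flags when the root-mean-square (RMS) error exceeds a tolerance. The function names, the sample coordinates, and the 0.5 mm tolerance are hypothetical assumptions for illustration, not values taken from any particular system.

```python
import math


def calibration_drift(model_positions, measured_positions):
    """Return the RMS distance between digital-model points and measured points."""
    if not model_positions or len(model_positions) != len(measured_positions):
        raise ValueError("position lists must be non-empty and of equal length")
    squared_errors = [
        math.dist(model, measured) ** 2
        for model, measured in zip(model_positions, measured_positions)
    ]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


def needs_recalibration(model_positions, measured_positions, tolerance_mm=0.5):
    """Flag drift once the RMS error exceeds the (assumed) tolerance in millimetres."""
    return calibration_drift(model_positions, measured_positions) > tolerance_mm


# Hypothetical checkpoint positions in millimetres (x, y, z).
model = [(0.0, 0.0, 0.0), (100.0, 0.0, 0.0)]
measured_ok = [(0.0, 0.0, 0.3), (100.0, 0.4, 0.0)]
measured_drifted = [(0.0, 0.0, 1.0), (100.0, 1.0, 0.0)]

print(needs_recalibration(model, measured_ok))       # within tolerance
print(needs_recalibration(model, measured_drifted))  # drift exceeds tolerance
```

Running a check like this on a schedule, or whenever operating conditions change, is one simple way to notice drift before it affects precision tasks.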

Cost and power

Both the upfront and ongoing costs associated with smart, AI-powered robotics builds have slowed widespread adoption. The specialised sensors necessary to support these systems are costly, while separate energy, compute, and training expenses present additional obstacles.

Autonomous robots must also balance very high compute capacity against the need to minimise power consumption and extend runtime.

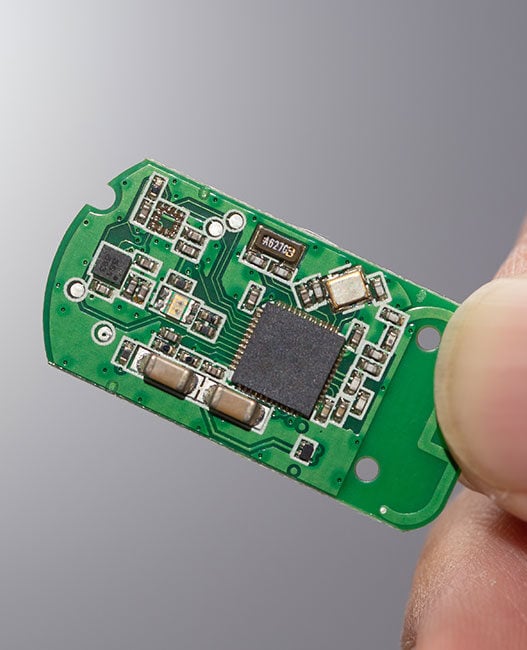

To enable widespread adoption of AI-supported robotics, designers will need to find ways to simplify and streamline sensor-based Edge architectures without sacrificing speed, compute power, or efficiency. This process begins at the foundation, with new approaches to building Edge devices that leverage specialised components like field-programmable gate arrays (FPGAs).

How FPGAs support sensor fusion

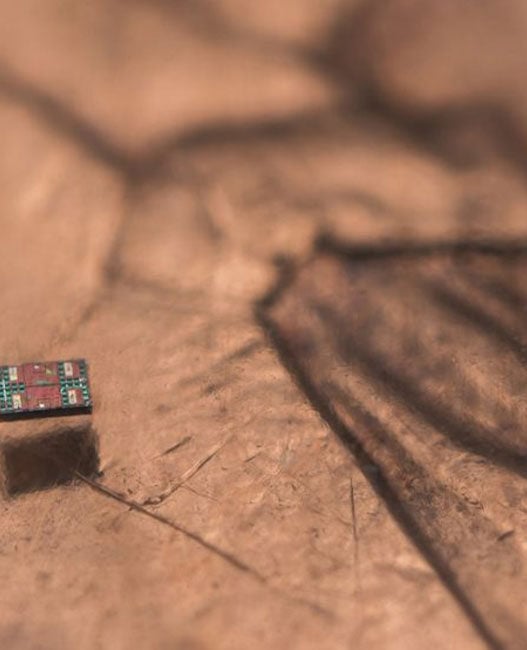

FPGAs are proving to be valuable tools in the design and deployment of optimised robotic solutions. They offer the low-latency, synchronised, and deterministic performance needed for sensor fusion processing, all at power levels not achievable by typical processors. FPGAs also support critical functional safety, security, and flexibility requirements, and are offered in small, efficient packages. However, these features only scratch the surface of their potential for advancing AI-enabled robotic automation at scale.

FPGAs’ unique blend of capabilities provides an elegant solution to the key challenges of sensor fusion. At the heart of their utility is their parallel processing capacity, which allows them to execute multiple tasks concurrently. By handling signal processing, alignment, and fusion along with computer vision and Edge AI capabilities, these chips offload tasks from primary computing components in order to reduce latency and processing strain and extend operational capabilities. This significantly speeds up critical tasks to enhance robotic systems’ accuracy and decision-making, ultimately enabling more reliable, consistent, precise, and timely operations.
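The alignment step mentioned above can be sketched in software to show what it involves. In the example below, each camera frame is paired with the nearest LiDAR sweep in time, and pairs whose timestamps differ by more than an allowed skew are discarded. This is only an illustrative model: an FPGA would implement equivalent logic in hardware rather than in Python, and the function name, timestamps, and 5,000 microsecond skew limit are hypothetical.

```python
from bisect import bisect_left


def align_by_timestamp(camera_ts, lidar_ts, max_skew):
    """Pair each camera timestamp with the nearest LiDAR timestamp.

    Both lists must be sorted in ascending order. Pairs whose timestamps
    differ by more than max_skew are dropped.
    """
    pairs = []
    for t in camera_ts:
        i = bisect_left(lidar_ts, t)
        # The nearest LiDAR sample is either just before or just after t.
        candidates = lidar_ts[max(i - 1, 0):i + 1]
        if not candidates:
            continue
        nearest = min(candidates, key=lambda s: abs(s - t))
        if abs(nearest - t) <= max_skew:
            pairs.append((t, nearest))
    return pairs


# Hypothetical timestamps in microseconds: a ~30 fps camera and a 10 Hz LiDAR.
camera_ts = [0, 33_333, 66_667, 100_000]
lidar_ts = [1_000, 101_000]

pairs = align_by_timestamp(camera_ts, lidar_ts, max_skew=5_000)
print(pairs)
```

With these assumed inputs, only the first and last camera frames fall close enough to a LiDAR sweep to be paired; the two frames in between are left unmatched rather than fused with stale data.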

FPGAs also address the I/O-compute paradox described above, in which the advanced process nodes that boost compute performance also constrain I/O, by offering highly customisable I/O with flexible protocol support. This enables interoperability with a broad range of sensors and actuators supporting common standards such as Ethernet, SPI, LVDS, CAN, MIPI, JESD204B, and GPIOs. By minimising latency, providing deterministic low-power processing, and offloading sensor fusion, computer vision, and physical AI workloads, these chips help surmount common compute and power hurdles. Together, these capabilities help to improve overall performance and extend operational capabilities.

As the name suggests, these semiconductors aren’t just flexible during the design stage. FPGAs can be updated post-deployment, addressing an often-unspoken barrier to progress: future needs. Their reprogrammability furthers their potential to drive advances in robotic automation into its next era, enabling evolution and adaptation to suit emerging requirements while simultaneously extending the useful lifetime of equipment.

Today and tomorrow

As the demand for real-time data processing and decision-making grows, simplifying the integration and management of sensor data will be critical to successful robotic automation and system risk management.

FPGAs provide a foundation for these efforts, giving designers the flexibility they need to refine their approaches to sensor fusion while redefining what intelligent robotics can do for industrial operations, both now and in the future.

Paired with other advanced components, FPGAs will help usher in the next generation of robotics and automation deployments – and continue to offer flexible support as the field matures. They are compelling proof that, despite the challenges at hand, intelligent, automated, and real-time industrial robotic solutions are well within reach.

Visit Lattice Semiconductor at embedded world: Stand 4-528

This article originally appeared in the March’26 magazine issue of Electronic Specifier Design – see ES’s Magazine Archives for more featured publications.