Artificial intelligence is poised to transform medical imaging, promising faster diagnoses and greater accuracy. However, as health care organisations invest more in such technologies, they also face technical hurdles that extend well beyond clinical issues. From hardware incompatibilities to computational overhead, the obstacles are as much about electronics engineering as they are about algorithms. Companies looking to deploy AI imaging at scale should understand the nature of these challenges.

1. Solving the data and hardware compatibility problem

Every AI model is only as good as the data it is trained on, and in medical imaging, that data arrives from a patchwork of hardware. For example, a GE HealthCare MRI scanner encodes images differently from a Siemens Healthineers unit, and Digital Imaging and Communications in Medicine (DICOM) file structures diverge between vendors. A 2025 study found that DICOM format conversions produced structural changes that AI models detected with up to 99.5% accuracy, skewing diagnostic output even when those changes remained visually imperceptible.

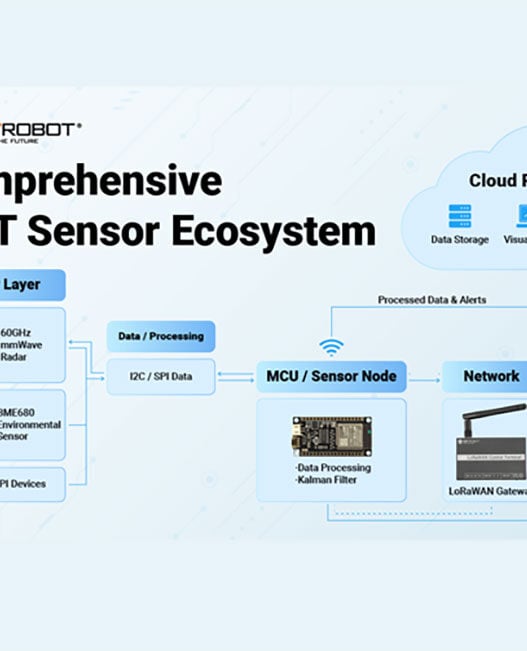

Preprocessing pipelines can standardise images before they reach the model, adjusting for differences in scanner output and resolution. Running these routines on edge devices at the scanner itself keeps processing local and reduces transfer delays.
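
As a rough illustration of what such a routine might do, the sketch below resamples a 2D image to a common pixel spacing and then rescales its intensities to zero mean and unit variance. It is a minimal example under stated assumptions, not a production pipeline: the function names, the nearest-neighbour resampling, and the 1.0 mm target spacing are all hypothetical choices made for illustration.

```python
from statistics import mean, pstdev


def zscore_normalise(image):
    """Rescale pixel intensities to zero mean and unit variance."""
    pixels = [p for row in image for p in row]
    mu = mean(pixels)
    sigma = pstdev(pixels) or 1.0  # a flat image has zero spread; avoid dividing by zero
    return [[(p - mu) / sigma for p in row] for row in image]


def resample_nearest(image, spacing_mm, target_spacing_mm):
    """Resample a 2D image to a target pixel spacing (nearest-neighbour lookup)."""
    rows, cols = len(image), len(image[0])
    scale = spacing_mm / target_spacing_mm  # >1 means the output grid is finer
    new_rows = max(1, round(rows * scale))
    new_cols = max(1, round(cols * scale))
    return [
        [image[min(rows - 1, int(r / scale))][min(cols - 1, int(c / scale))]
         for c in range(new_cols)]
        for r in range(new_rows)
    ]


def standardise(image, spacing_mm, target_spacing_mm=1.0):
    """Hypothetical pipeline: bring spacing into line first, then intensities."""
    return zscore_normalise(resample_nearest(image, spacing_mm, target_spacing_mm))


# A 2x2 image at 2.0 mm spacing becomes a 4x4 image at 1.0 mm spacing.
standardised = standardise([[0, 10], [20, 30]], spacing_mm=2.0)
```

A real deployment would more likely use interpolation suited to the modality and read the spacing from the image metadata rather than passing it in by hand.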

2. Integrating AI with legacy PACS and clinical networks

Many hospitals store and distribute medical images through Picture Archiving and Communication Systems (PACS). Because many PACS installations predate modern AI tooling, they often lack API support, and connecting AI inference engines to them can be difficult without extensive custom development.

A single CT scan can produce hundreds of high-resolution DICOM slices, and transferring these to a remote AI server over ageing hospital networks introduces latency and bandwidth bottlenecks. A 2024 Health Foundation survey found that 76% of NHS staff support the use of AI in patient care. This signals a clinical demand that outdated infrastructure struggles to match.
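
A back-of-the-envelope calculation shows why the network matters. The figures below are illustrative assumptions rather than measurements: 500 uncompressed slices of 512 × 512 pixels at 2 bytes per pixel, sent over a link that sustains its nominal rate with no protocol overhead.

```python
def transfer_seconds(slices, rows, cols, bytes_per_pixel, link_mbit_per_s):
    """Idealised time to move an uncompressed study across a link."""
    size_bits = slices * rows * cols * bytes_per_pixel * 8
    return size_bits / (link_mbit_per_s * 1_000_000)


# Assumed study: 500 slices, 512 x 512 pixels, 16-bit pixels (about 262 MB).
slow_link = transfer_seconds(500, 512, 512, 2, link_mbit_per_s=100)
fast_link = transfer_seconds(500, 512, 512, 2, link_mbit_per_s=1000)
```

Under these assumptions the study takes roughly 21 seconds on a 100 Mbit/s link and about 2 seconds on a 1 Gbit/s link, before any contention from other traffic is taken into account.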

Middleware platforms serve as a bridge, translating data formats and managing communication protocols between legacy PACS and AI engines, without requiring a full system replacement.

Fibre optic links and 5G connectivity on hospital campuses help move large imaging files faster, while encryption and role-based access controls keep patient data secure during transit.

3. Building generalisable and reliable AI models

An AI model that works well at one hospital can fall flat at another. The usual culprit is overfitting – the model learns quirks specific to one facility’s scanners and patient mix rather than broader diagnostic patterns. For example, a model trained only on 1.5T MRI scans may misread images from a 3T machine because the signal intensity and contrast look different.

Federated learning offers a way around this. Instead of pooling sensitive patient data in a single location, each hospital trains the model on its own records and shares only the updated parameters. A 2025 study tested a federated framework across four clinical imaging tasks and found it balanced accuracy and fairness better than earlier methods.
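
The parameter-sharing idea can be sketched with a simple weighted average of locally trained weights, in the style commonly known as federated averaging. This is not the framework from the study mentioned above; the one-dimensional linear model, the learning rate, and the function names are assumptions chosen to keep the example small.

```python
def local_update(weights, records, lr=0.1):
    """Train y = w*x + b on one hospital's records; the records never leave the site."""
    w, b = weights
    for x, y in records:
        err = (w * x + b) - y
        w -= lr * err * x
        b -= lr * err
    return [w, b]


def federated_average(updates, sizes):
    """Combine local parameters, weighting each site by its number of records."""
    total = sum(sizes)
    return [
        sum(u[i] * n for u, n in zip(updates, sizes)) / total
        for i in range(len(updates[0]))
    ]


def federated_round(global_weights, hospitals):
    """One round: every site trains locally, only parameters are shared."""
    updates = [local_update(list(global_weights), recs) for recs in hospitals]
    sizes = [len(recs) for recs in hospitals]
    return federated_average(updates, sizes)


# Two hypothetical sites whose data follow y = 2x + 1.
hospital_a = [(0.0, 1.0), (1.0, 3.0)]
hospital_b = [(2.0, 5.0), (0.5, 2.0), (1.5, 4.0)]

weights = [0.0, 0.0]
for _ in range(300):
    weights = federated_round(weights, [hospital_a, hospital_b])
```

Only the two-element weight list crosses the boundary between sites in this sketch. Real systems typically add protections such as secure aggregation, which are outside its scope.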

4. Managing the high computational and energy costs

AI adoption in health care is accelerating. A 2026 industry survey found that 70% of health care providers are inclined to invest in diagnostic imaging, remote monitoring technologies and clinical decision support over the next one to two years. That level of financial commitment puts computational cost at the centre of planning.

Training deep learning models for imaging eats through GPU hours, storage, and energy for data centre cooling. Studies show that the environmental and economic costs of running AI grow right alongside the diagnostic gains. The costs do not stop when training ends: inference alone can use more energy over a model’s lifetime than the original training run did.

Cloud-based AI-as-a-service platforms reduce up-front hardware investment by offloading computation to scalable remote infrastructure. Specialised low-power AI accelerator chips offer a compelling alternative for on-site processing. Researchers at Johns Hopkins University demonstrated a ternary-quantised vision transformer that reduced model size by 43 times and boosted energy efficiency by up to 41 times on edge hardware.
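
To give a sense of what ternary quantisation means, the sketch below maps each weight to -1, 0 or +1 and keeps a single shared scale factor. It is a generic illustration, not the Johns Hopkins method: the threshold of 0.7 times the mean absolute weight is a common heuristic assumed here, and the function names are hypothetical.

```python
def ternarise(weights, threshold_ratio=0.7):
    """Map float weights to codes in {-1, 0, +1} plus one shared scale factor."""
    mean_abs = sum(abs(w) for w in weights) / len(weights)
    delta = threshold_ratio * mean_abs  # weights this small or smaller become 0
    codes = [0 if abs(w) <= delta else (1 if w > 0 else -1) for w in weights]
    kept = [abs(w) for w, c in zip(weights, codes) if c != 0]
    scale = sum(kept) / len(kept) if kept else 0.0
    return codes, scale


def dequantise(codes, scale):
    """Approximate the original weights from the ternary codes."""
    return [c * scale for c in codes]


codes, scale = ternarise([0.9, -0.8, 0.05, -0.02, 0.7])
approx = dequantise(codes, scale)
```

Each code needs at most two bits instead of the 32 bits of a standard float, which is where much of the size reduction comes from. The overall gains reported above will depend on details this sketch does not model.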

5. Ensuring model explainability through technical transparency

Many AI models operate as black boxes, producing outputs without revealing their internal logic. For instance, when an AI flags a potential tumour on a chest X-ray, the radiologist has no way to verify the reasoning. That opacity can also create a regulatory problem. Governance bodies require auditable processes, and a model that cannot explain its decisions is difficult to validate or debug when errors happen.

Saliency maps, which generate visual heatmaps showing which image regions influenced the model’s decision, are becoming a standard approach. A multicentre study found that Shapley-based saliency maps significantly improved radiologists’ diagnostic sensitivity and specificity when reviewing vertebral fracture classifications, providing clinicians with a concrete reference point.
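
Shapley-based attribution is involved to implement, so the sketch below swaps in a simpler technique, occlusion saliency, to show the general idea: blank out one region at a time and record how far the model's score drops. The `toy_score` function is a hypothetical stand-in for a trained classifier, and the patch size and fill value are assumptions.

```python
def occlusion_saliency(image, score_fn, patch=1, fill=0.0):
    """Heatmap of score drops when each patch of the image is blanked out."""
    rows, cols = len(image), len(image[0])
    baseline = score_fn(image)
    heatmap = [[0.0] * cols for _ in range(rows)]
    for r0 in range(0, rows, patch):
        for c0 in range(0, cols, patch):
            occluded = [row[:] for row in image]  # copy so the input is untouched
            cells = [(r, c)
                     for r in range(r0, min(r0 + patch, rows))
                     for c in range(c0, min(c0 + patch, cols))]
            for r, c in cells:
                occluded[r][c] = fill
            drop = baseline - score_fn(occluded)  # large drop = influential region
            for r, c in cells:
                heatmap[r][c] = drop
    return heatmap


def toy_score(image):
    """Hypothetical model that attends to two pixels only."""
    return 1.0 * image[0][0] + 0.5 * image[1][1]


heat = occlusion_saliency([[1.0, 1.0], [1.0, 1.0]], toy_score)
```

In this toy case the heatmap is non-zero only at the two pixels the stand-in model uses, which is the kind of visual cue a radiologist could check against the anatomy.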

Well-designed AI systems also produce confidence scores and automatically flag ambiguous cases for human review. This human-in-the-loop architecture keeps the clinician at the centre of diagnosis while giving the AI a clearly defined role.
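
A minimal sketch of such a triage rule follows. The thresholds of 0.2 and 0.8 and the label names are hypothetical; in practice they would be set and validated against clinical data.

```python
def triage(probability, low=0.2, high=0.8):
    """Route a model output according to how confident the model is."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    if probability >= high:
        return "likely_positive"
    if probability <= low:
        return "likely_negative"
    return "needs_review"  # ambiguous: send to a clinician first


routes = [triage(p) for p in (0.95, 0.5, 0.05)]
```

Widening the gap between the two thresholds sends more cases to human review, trading clinician time for fewer unreviewed borderline calls.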

Engineering solutions will drive AI adoption in imaging

Successfully deploying artificial intelligence in medical imaging depends as much on engineering innovation as it does on algorithmic performance. By tackling hardware compatibility, legacy system integration, and computational overhead at the infrastructure level, the electronics industry can build the trusted foundation for scalable diagnostic AI.