Artificial intelligence (AI) and machine learning (ML) are transforming embedded safety-critical systems across industries such as automotive, healthcare, and defence. They enable embedded systems to operate more autonomously and efficiently.

However, integrating AI/ML into embedded safety-critical systems presents unique challenges:

- High-stakes failure risks

- Stringent compliance requirements

- Unpredictable model behaviour

Imagine an autonomous car making split-second braking decisions or a pacemaker detecting life-threatening arrhythmias. Failure isn’t an option for these AI-powered embedded systems.

Why AI in safety-critical systems requires special testing

Embedded systems operate under strict limits on processing power, memory, and energy. At the same time, they often function in harsh environments with extreme temperatures and vibration.

AI models, especially deep learning networks, demand significant computational resources, which makes them difficult to deploy efficiently on such hardware. The primary challenges engineers face, and the reasons AI in safety-critical systems requires special testing, are:

- Resource limitations. AI models can demand more power and memory than embedded devices can supply

- Determinism. Safety-critical applications, like autonomous braking, require predictable, real-time responses. Unfortunately, AI models can behave unpredictably, particularly on inputs that differ from their training data

- Certification and compliance. Regulatory standards, such as ISO 26262 and IEC 62304, demand transparency. But AI models often act as black boxes

- Security risks. Adversarial attacks can manipulate AI models, leading to dangerous failures such as fooling a medical device into delivering an incorrect dose

To overcome these hurdles, engineers employ optimisation techniques, specialised hardware, and rigorous testing methodologies.

Strategies for reliable & safe AI/ML deployment

- Model optimisation: pruning & quantisation

Since embedded systems can’t support massive AI models, engineers compress them with little or no loss of accuracy.

- Pruning removes redundant neural connections. For example, NASA pruned 40% of its Mars rover’s terrain-classification model, reducing processing time by 30% without compromising accuracy

- Quantisation reduces numerical precision, for instance by converting 32-bit floating-point values to 8-bit integers, which cuts the memory needed for the model’s weights by 75%. Fitbit used this to extend battery life in health trackers while maintaining performance
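
To make the two techniques concrete, here is a minimal Python sketch; it is not taken from the NASA or Fitbit work above. It assumes a flat list of floating-point weights, prunes the 40% with the smallest magnitude, and then applies symmetric per-tensor 8-bit quantisation. The function names are hypothetical, and production toolchains work on whole tensors and usually fine-tune or calibrate the model afterwards to recover accuracy.

```python
from array import array


def magnitude_prune(weights, ratio):
    """Zero the `ratio` fraction of weights with the smallest absolute value."""
    n_prune = int(len(weights) * ratio)
    # Indices ordered from smallest to largest magnitude.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


def quantise_int8(weights):
    """Symmetric per-tensor quantisation: one scale maps floats to -127..127."""
    max_abs = max(abs(w) for w in weights) or 1.0  # avoid dividing by zero
    scale = max_abs / 127.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantise(q, scale):
    """Approximate reconstruction of the original floats."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.82, -0.03, 0.41, 0.005, -0.67, 0.12, -0.002, 0.25, -0.9, 0.07]
    pruned = magnitude_prune(weights, ratio=0.4)
    q, scale = quantise_int8(pruned)

    fp32 = array("f", pruned)  # 4 bytes per weight
    int8 = array("b", q)       # 1 byte per weight
    nonzero = sum(1 for w in pruned if w != 0.0)
    print(f"non-zero weights after pruning: {nonzero}/{len(weights)}")
    print(f"storage: {fp32.itemsize * len(fp32)} B -> {int8.itemsize * len(int8)} B")
    error = max(abs(a - b) for a, b in zip(pruned, dequantise(q, scale)))
    print(f"max quantisation error: {error:.4f} (scale {scale:.5f})")
```

Storing the ten example weights as 32-bit floats takes 40 bytes; as 8-bit integers it takes 10 bytes, which is the 75% saving quoted above. Note that pruning alone does not shrink a dense array; its benefit comes from sparse storage or from hardware that skips zero weights.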

- Ensuring determinism with frozen models

Safety-critical systems, like vehicle lane assist, insulin pumps, and aircraft flight control, require consistent behaviour. AI models, however, can drift if they keep learning after deployment, and can behave unpredictably on inputs they were not trained on.

The solution? Freezing the model. This means locking weights post-training to ensure the AI behaves exactly as tested. Tesla, for instance, uses frozen neural networks in Autopilot, updating them only after extensive validation of the next revision.
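
One way to make “behaves exactly as tested” checkable on the device is to record a cryptographic digest of the frozen parameters when the model is validated, and to refuse to load any parameters that do not match. The sketch below assumes a tiny linear model and uses hypothetical names (`FrozenModel`, `params_digest`, `load_validated`); it illustrates the idea and is not a description of how Tesla or any other vendor does it.

```python
import hashlib
import struct
from dataclasses import dataclass


@dataclass(frozen=True)
class FrozenModel:
    """Tiny linear model; frozen=True blocks reassigning weights after load."""
    weights: tuple
    bias: float

    def predict(self, x):
        # No learning or state updates at inference time: same input, same output.
        return sum(w * xi for w, xi in zip(self.weights, x)) + self.bias


def params_digest(weights, bias):
    """SHA-256 over all parameters packed as little-endian float64."""
    params = [float(w) for w in weights] + [float(bias)]
    return hashlib.sha256(struct.pack(f"<{len(params)}d", *params)).hexdigest()


def load_validated(weights, bias, expected_digest):
    """Refuse to start if the parameters are not the ones that were validated."""
    if params_digest(weights, bias) != expected_digest:
        raise RuntimeError("model parameters differ from the validated release")
    return FrozenModel(tuple(float(w) for w in weights), float(bias))


if __name__ == "__main__":
    w, b = [0.5, -1.25, 2.0], 0.1
    validated_digest = params_digest(w, b)  # recorded once, at sign-off
    model = load_validated(w, b, validated_digest)
    print(model.predict([1.0, 2.0, 3.0]))
    try:
        load_validated([0.5, -1.25, 2.01], b, validated_digest)  # altered weight
    except RuntimeError as err:
        print("rejected:", err)
```

The digest check catches accidental or malicious changes to the stored weights, while `frozen=True` and the tuple of weights stop the running program from modifying them.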

- Explainable AI (XAI) for compliance

Regulators demand transparency in AI decision-making. Explainable AI (XAI) tools like LIME and SHAP help:

- Visualise how models make decisions

- Identify biases or vulnerabilities

- Meet certification requirements like ISO 26262
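
SHAP is built on Shapley values from cooperative game theory. For a model with only a few inputs, these can be computed exactly by enumerating every subset of features, which shows what SHAP approximates for larger models. The sketch below does this in plain Python; it does not use the LIME or SHAP libraries, and the braking-risk model, its coefficients and the baseline are hypothetical.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley attribution of model(x) - model(baseline) to each feature.

    Features outside a coalition take their baseline value, a common
    simplification. The cost grows as 2**n, so this only suits a few features.
    """
    n = len(x)

    def value(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for k in range(n):  # coalition sizes 0 .. n-1
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = set(subset)
                # Marginal contribution of feature i when added to coalition s.
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def brake_risk(features):
    """Hypothetical risk score: speed (km/h), gap to vehicle ahead (m), wet road (0/1)."""
    speed, gap, wet = features
    return 0.02 * speed - 0.05 * gap + 0.005 * wet * speed


if __name__ == "__main__":
    x = [90.0, 20.0, 1.0]         # situation being explained
    baseline = [50.0, 50.0, 0.0]  # hypothetical "typical" reference situation
    phi = shapley_values(brake_risk, x, baseline)
    for name, contribution in zip(["speed", "gap", "wet"], phi):
        print(f"{name:>5}: {contribution:+.3f}")
    print(f"sum: {sum(phi):.3f}, model(x) - model(baseline): "
          f"{brake_risk(x) - brake_risk(baseline):.3f}")
```

By construction, the attributions sum to model(x) minus model(baseline); for the example input that is 1.25 - (-1.5) = 2.75, with the short gap to the vehicle ahead contributing most. This additivity is what makes such explanations auditable.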

- Adversarial robustness & security

AI models in embedded systems face cyber threats, such as manipulated sensor data that causes misclassification. Mitigation strategies include:

- Adversarial training. Exposing models to malicious inputs during development

- Input sanitisation. Filtering out suspicious data

- Redundancy and runtime monitoring. Cross-checking AI outputs with rule-based fallbacks
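
Input sanitisation and runtime monitoring can be combined in a wrapper around the model. The Python sketch below is hypothetical throughout: the sensor limits, the 2-second time-to-collision rule and the policy of braking whenever the two channels disagree are illustrative assumptions, not values from any standard or product. Implausible inputs are rejected before a decision is made, and the model’s output (`ai_brake`) is cross-checked against a deterministic rule.

```python
from dataclasses import dataclass

# Hypothetical plausibility limits; real values would come from the sensor
# datasheet and the vehicle's hazard analysis.
MAX_RANGE_M = 200.0
MAX_CLOSING_SPEED_MPS = 70.0
MAX_JUMP_M = 5.0        # largest credible change in distance between frames
TTC_THRESHOLD_S = 2.0   # hypothetical time-to-collision braking rule


@dataclass(frozen=True)
class Frame:
    distance_m: float         # distance to the obstacle ahead
    closing_speed_mps: float  # positive when the gap is shrinking


def is_plausible(frame, previous):
    """Input sanitisation: reject readings that are out of range or jump too far."""
    if not 0.0 <= frame.distance_m <= MAX_RANGE_M:
        return False
    if not -MAX_CLOSING_SPEED_MPS <= frame.closing_speed_mps <= MAX_CLOSING_SPEED_MPS:
        return False
    if previous is not None and abs(frame.distance_m - previous.distance_m) > MAX_JUMP_M:
        return False
    return True


def rule_based_brake(frame):
    """Deterministic fallback: brake if time-to-collision is below the threshold."""
    if frame.closing_speed_mps <= 0.0:
        return False
    return frame.distance_m / frame.closing_speed_mps < TTC_THRESHOLD_S


def decide(frame, previous, ai_brake):
    """Runtime monitor around the model's boolean braking output `ai_brake`.

    Returns (action, status). action is None when the input is rejected, so the
    wider system can enter its own safe state.
    """
    if not is_plausible(frame, previous):
        return None, "rejected"
    rule_brake = rule_based_brake(frame)
    if ai_brake != rule_brake:
        # Assumed policy: on disagreement, take the more cautious action and flag it.
        return True, "disagreement"
    return ai_brake, "agree"


if __name__ == "__main__":
    previous = Frame(distance_m=16.0, closing_speed_mps=10.0)
    current = Frame(distance_m=15.0, closing_speed_mps=10.0)  # TTC = 1.5 s
    print(decide(current, previous, ai_brake=False))  # → (True, 'disagreement')
    spoofed = Frame(distance_m=150.0, closing_speed_mps=10.0)  # 135 m jump
    print(decide(spoofed, current, ai_brake=False))   # → (None, 'rejected')
```

Returning `None` for rejected input leaves the choice of safe state, for example a driver warning and handover, to the wider system design rather than hard-coding it in the monitor.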

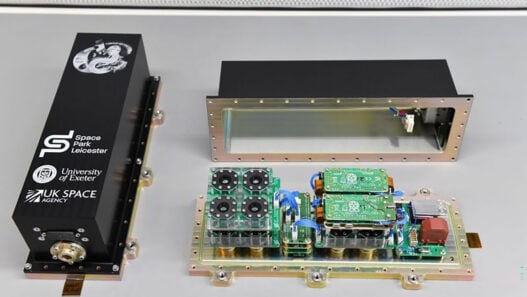

The role of specialised hardware

General-purpose CPUs struggle with AI workloads, leading to innovations like:

- Neural processing units (NPUs). Optimised for AI tasks; for example, Qualcomm’s Snapdragon NPUs enable real-time AI photography in smartphones

- Tensor processing units (TPUs). Accelerate deep learning inference in embedded devices

These advancements allow AI to run efficiently even in power-constrained environments.

Traditional verification for AI-enabled systems

Even with AI, traditional verification remains critical:

| Method | Role in AI Systems |
|---|---|
| Static Analysis | Inspects the model’s structure for design flaws. |
| Unit Testing | Validates non-AI components, such as sensor interfaces, while AI models undergo data-driven validation. |
| Code Coverage | Measures how thoroughly the code is exercised, for example MC/DC coverage for ISO 26262 compliance. |
| Traceability | Maps AI behaviour to system requirements, crucial for audits. |

Hybrid approaches, which combine classical testing with AI-specific methods, are essential for certification.
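
MC/DC (modified condition/decision coverage) requires showing that every condition in a decision can independently change its outcome. The Python sketch below checks this for a hypothetical braking decision, `obstacle and (speed_high or road_wet)`, by searching a test set for pairs of tests that differ in only one condition and produce different results (the “unique-cause” form of MC/DC). It illustrates the criterion only and is not a substitute for a qualified coverage tool.

```python
from itertools import product


def brake_decision(obstacle, speed_high, road_wet):
    # Hypothetical decision under test.
    return obstacle and (speed_high or road_wet)


def mcdc_pairs(tests, decision):
    """For each condition, find two tests that differ only in that condition and
    give different outcomes (unique-cause MC/DC). Missing keys = not covered."""
    n = len(tests[0])
    pairs = {}
    for i in range(n):
        for t1 in tests:
            for t2 in tests:
                only_i_differs = all((t1[j] != t2[j]) == (j == i) for j in range(n))
                if only_i_differs and decision(*t1) != decision(*t2):
                    pairs[i] = (t1, t2)
                    break
            if i in pairs:
                break
    return pairs


if __name__ == "__main__":
    T, F = True, False
    tests = [(T, T, F), (F, T, F), (T, F, F), (T, F, T)]
    names = ["obstacle", "speed_high", "road_wet"]
    pairs = mcdc_pairs(tests, brake_decision)
    for i, name in enumerate(names):
        print(f"{name}: {pairs.get(i, 'NOT COVERED')}")
    exhaustive = len(list(product([F, T], repeat=len(names))))
    print(f"{len(tests)} tests for MC/DC vs {exhaustive} for exhaustive testing")
```

Four tests are enough here, against eight for exhaustive testing of three conditions; in general, MC/DC needs at least n + 1 tests for a decision with n conditions. Dropping the last test leaves `road_wet` uncovered.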

Summary

Although AI/ML is transforming embedded systems, safety and compliance remain the absolute top priority. By balancing innovation with rigorous testing, model optimisation, and regulatory alignment, teams can deploy AI-driven embedded systems that are safe and secure.

For more details, see the whitepaper ‘How to Ensure Safety in AI/ML-Driven Embedded System’.

This article originally appeared in the February’26 magazine issue of Electronic Specifier Design – see ES’s Magazine Archives for more featured publications

By Ricardo Camacho, Director of Safety & Security Compliance, Parasoft
