For years, facility digitisation has relied on a single, expensive robot slowly traversing every corridor of a warehouse, laboratory, or industrial plant. The approach works, but mapping time grows linearly with floor area, just as facilities are becoming larger and the demand for up-to-date digital twins is intensifying.

As a result, the limitations of single-robot mapping in time, scalability, and robustness become critical bottlenecks.

In this first part of a two-part blog series, you’ll discover why multi-robot autonomous mapping matters, get an overview of a modern, scalable system and the key technologies involved, and see how multiple robots can map a facility autonomously. You’ll also find access to the complete source code and implementation reference to help you get started.

Why multi-robot mapping matters now

The shift toward multi-robot mapping is not merely a technological upgrade; it is a direct response to operational and economic pressures in large-scale environments. Using multiple robots instead of a single robot delivers significant and immediate business advantages.

Faster mapping and coverage

Time is the most expensive resource in facility management. A single robot exploring a 100,000 sq. ft. warehouse might take days. By deploying multiple rovers to explore different areas in parallel, total mapping time is reduced dramatically. This acceleration enables faster project completion, quicker turnaround for facility updates, and minimal disruption to daily operations.

Scalable for large and complex facilities

The linear approach of single-robot mapping does not scale. As you add more square footage, the time required grows proportionally. Multi-robot systems are inherently scalable, making them ideally suited for:

- Large laboratories and research campuses

- Multi-floor office buildings

- Vast warehouses and distribution centres

- Complex industrial plants

Effective navigation during mapping in dynamic spaces

A mapping mission in a real facility is never straightforward. People walk through corridors, forklifts move pallets, and doors open and close. A single robot encountering a dynamic obstacle might have to pause or re-plan, further extending mission time. By integrating Nav2, each robot in a fleet benefits from built-in collision avoidance and dynamic obstacle handling.

This enables reliable mapping operations even in changing environments, as robots safely navigate around moving objects without failing their mission.

Unified global map for digital twins

The ultimate goal of mapping is often to create a single source of truth – a digital twin of the facility. Multi-robot mapping, when done correctly, produces a single, consistent global map. This unified output is essential for facility digitisation, asset inspection workflows, and powering enterprise applications that require an accurate and complete representation of the physical space.

What a modern multi-robot mapping system requires

Building a system that delivers on this business value requires more than just launching multiple SLAM algorithms at once. It demands a coordinated, intelligent architecture. Our solution is built around three core components that work in harmony.

Overview of a multi-robot mapping system

The multi-rover mapping system is designed to treat the fleet as a single, cohesive mapping entity. Each rover runs its own SLAM and Nav2 navigation stack under a dedicated namespace, ensuring independence at the robot level. However, a centralised control layer coordinates exploration and builds a shared global map, ensuring that individual efforts contribute to a unified outcome.
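To make the idea of a dedicated namespace concrete, here is a minimal sketch in plain Python. It only shows how prefixing topic names keeps each rover's data separate; the robot names (`rover1`, `rover2`), the topic list, and the helper `namespaced_topics` are illustrative assumptions, not names taken from the project's source code.

```python
def namespaced_topics(robot_names, topics=("scan", "map", "cmd_vel")):
    """Build per-robot topic names so no two robots share a topic.

    robot_names and topics are hypothetical values chosen for illustration.
    """
    return {
        name: {topic: f"/{name}/{topic}" for topic in topics}
        for name in robot_names
    }


fleet = namespaced_topics(["rover1", "rover2"])
print(fleet["rover1"]["map"])      # /rover1/map
print(fleet["rover2"]["cmd_vel"])  # /rover2/cmd_vel
```

In a real ROS 2 deployment the same separation is usually applied through the namespace given to each robot's nodes at launch, and coordinate frames need matching treatment. The sketch above covers topic naming only.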

Key technologies

The foundation of this architecture is built on mature, production-grade open-source tools and custom logic:

- ROS 2 Humble: Provides the communication layer and modular architecture.

- Nav2 Navigation Stack: Handles safe navigation, path planning, and collision avoidance for each robot.

- SLAM Toolbox: Configured for multi-robot operation to generate local maps.

- Gazebo Simulator: Used for development, validation, and stress-testing the system.

- Custom ROS 2 Python Nodes: Implement the intelligent exploration logic and centralised coordination.

- Frontier-Based Autonomous Exploration: The strategy that guides robots to unexplored areas efficiently.
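Frontier-based exploration, the last item in the list above, can be sketched in a few lines. A frontier is a known-free cell that borders unexplored space, so driving towards frontiers steadily grows the map. The sketch below assumes the usual ROS occupancy-grid cell values (-1 unknown, 0 free, 100 occupied) and uses a small 2D list instead of the flat array a real map message carries. The function name `find_frontiers` is hypothetical, and the project's own exploration node may work differently.

```python
UNKNOWN, FREE, OCCUPIED = -1, 0, 100  # common occupancy-grid cell values


def find_frontiers(grid):
    """Return (row, col) of every free cell that touches an unknown cell."""
    rows, cols = len(grid), len(grid[0])
    frontiers = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != FREE:
                continue
            # Check the 4-connected neighbours that lie inside the grid.
            neighbours = [(r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)]
            if any(
                0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == UNKNOWN
                for nr, nc in neighbours
            ):
                frontiers.append((r, c))
    return frontiers


grid = [
    [FREE, FREE,     UNKNOWN],
    [FREE, OCCUPIED, UNKNOWN],
    [FREE, FREE,     FREE],
]
print(find_frontiers(grid))  # [(0, 1), (2, 2)]
```

Practical implementations typically also group neighbouring frontier cells into clusters and discard clusters too small to be worth visiting.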

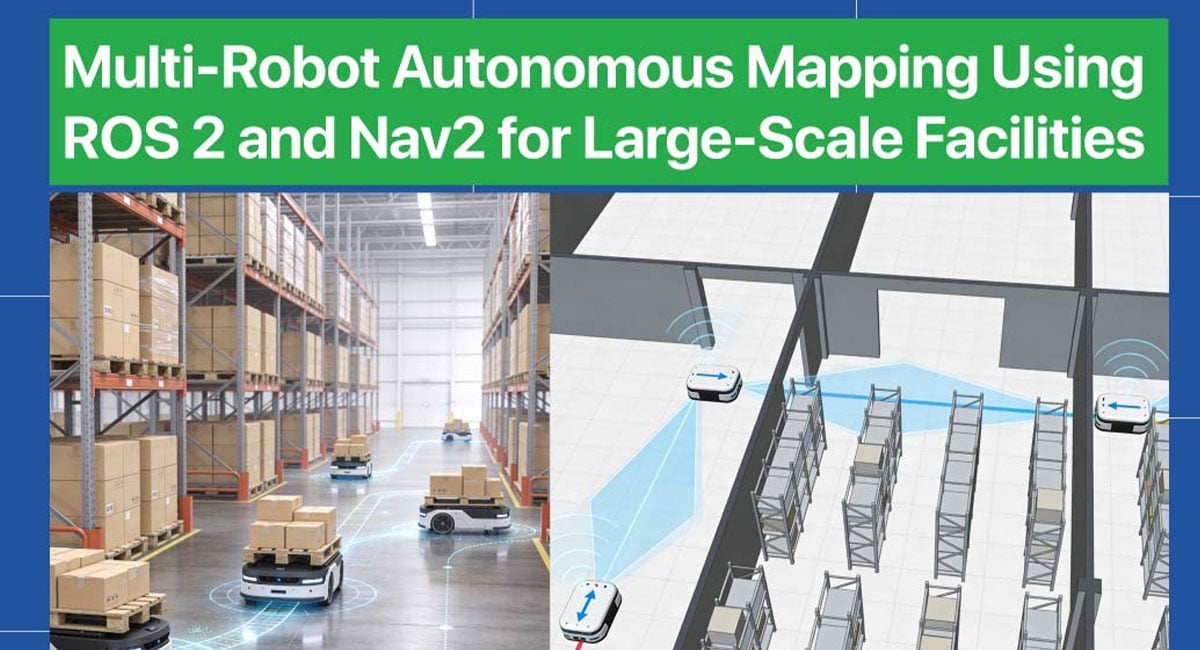

Multi-robot autonomous mapping in action

The images below showcase the system’s capability to coordinate multiple robots autonomously, highlighting the parallel exploration that makes multi-robot mapping so powerful. The system is designed to scale based on real-time operational needs, allowing additional robots to be deployed as facility size, complexity, or mapping speed requirements increase.

Autonomous mapping of 4 robots

Four robots coordinate seamlessly to explore a medium-sized facility, with the centralised controller assigning distinct frontier goals to maximise coverage. The custom map merging node publishes a unified global map in real time as each robot expands the explored area from different entry points.
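One simple way a central controller could hand out distinct frontier goals is a greedy assignment: rank every robot-frontier pair by distance, then accept the shortest pairs first while skipping any robot or frontier already taken. This is an assumed strategy for illustration only, since the post does not describe the controller's actual allocation logic. The robot names, coordinates, and the function `assign_frontiers` are hypothetical.

```python
import math


def assign_frontiers(robot_positions, frontiers):
    """Greedily give each robot a distinct frontier, shortest pairs first."""
    pairs = sorted(
        (math.dist(position, frontier), name, frontier)
        for name, position in robot_positions.items()
        for frontier in frontiers
    )
    assignment, taken = {}, set()
    for _, name, frontier in pairs:
        # Skip pairs whose robot already has a goal or whose frontier is claimed.
        if name in assignment or frontier in taken:
            continue
        assignment[name] = frontier
        taken.add(frontier)
    return assignment


robots = {"rover1": (0.0, 0.0), "rover2": (10.0, 0.0)}
goals = assign_frontiers(robots, [(1.0, 1.0), (9.0, 1.0), (5.0, 5.0)])
print(goals)  # {'rover1': (1.0, 1.0), 'rover2': (9.0, 1.0)}
```

Greedy assignment is easy to reason about but not globally optimal. It also uses straight-line distance, whereas a controller working in a real building would more plausibly compare path lengths from the planner.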

Autonomous mapping of 6 robots

Scaling up to six robots in a larger environment demonstrates the system’s robust coordination, with all rovers exploring simultaneously without overlap or redundancy. The unified global map continues to update seamlessly, proving the architecture’s readiness for real-world facilities of any size.
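The core of building a unified global map can be illustrated with a cell-by-cell merge. The sketch below assumes the hard part is already done, namely that every local map has the same size and resolution and is aligned to one common frame. It then applies a conservative rule: a cell is occupied if any robot saw an obstacle there, free if at least one robot saw it free, and unknown otherwise. The functions `merge_cell` and `merge_maps` are hypothetical and are not the custom map merging node from the project.

```python
UNKNOWN, FREE, OCCUPIED = -1, 0, 100  # common occupancy-grid cell values


def merge_cell(values):
    """Combine one cell from several maps; the highest known value wins."""
    known = [v for v in values if v != UNKNOWN]
    return max(known) if known else UNKNOWN


def merge_maps(local_maps):
    """Merge same-sized, already-aligned grids into one global grid."""
    rows, cols = len(local_maps[0]), len(local_maps[0][0])
    return [
        [merge_cell([m[r][c] for m in local_maps]) for c in range(cols)]
        for r in range(rows)
    ]


map_a = [[FREE, UNKNOWN], [OCCUPIED, UNKNOWN]]
map_b = [[UNKNOWN, FREE], [FREE, UNKNOWN]]
print(merge_maps([map_a, map_b]))  # [[0, 0], [100, -1]]
```

Letting the occupied value win keeps obstacles in the merged map even when robots disagree, which is the safer default for navigation.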

Interested in implementing this multi-robot mapping framework in your own environment?

Access the complete source code and implementation reference to get started.

Now that you understand the ‘why’ and the ‘what’ of multi-robot mapping, the next question is: how do you actually build it? And how do you solve the inherent challenges of robots interfering with each other, generating conflicting map data, or navigating shared spaces safely?

In Part 2 of this blog series, you’ll learn about custom multi-robot map merging and the challenges involved, such as namespace management and dynamic obstacle handling. You’ll also find out how to create a production-ready system that smoothly transitions from simulation to real-world robots.

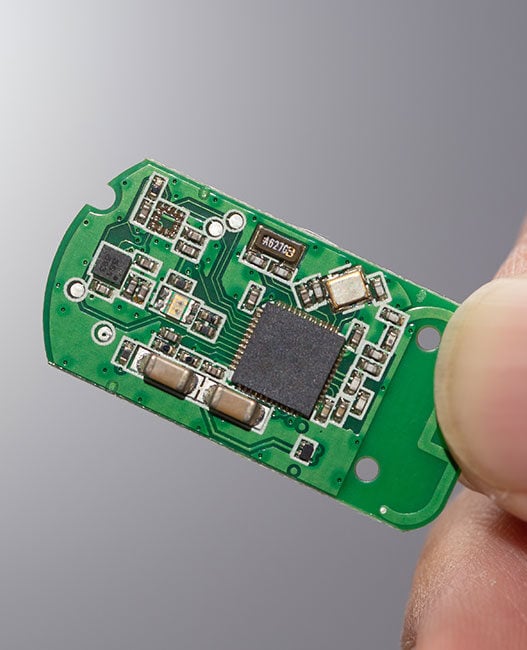

e-con Systems provides reliable cameras for multi-robot autonomous mapping

Since 2003, e-con Systems has been designing, developing, and manufacturing camera solutions, including OEM cameras and edge AI compute platforms. We offer camera modules with high-resolution imaging, global shutter support, HDR, and multi-camera synchronisation. These modules can be easily integrated with NVIDIA Jetson platforms for deployment.

Explore our eRCP – Robotic Computing Platform (RCP), powered by the Ambarella CV72S AI Vision Processor.

Find the right camera for your application with e-con Systems’ Camera Selector.